How engineering leaders choose real-time simulators for electrification labs


02 / 22 / 2026

Key Takeaways

- Start real-time simulator selection with test cases, signal lists, and measurable pass/fail metrics so the HIL platform requirements stay stable.

- Prioritize deterministic timing, end-to-end latency, and model fidelity that matches switching dynamics because closed-loop behaviour will expose weak margins fast.

- Reduce procurement risk with benchmarked pilots and clear acceptance tests, then plan scaling rules so multi-bench labs stay consistent and supportable.

Electrification labs succeed when the simulator behaves like a predictable piece of test equipment, not a research project that keeps shifting under your feet. Electric car sales reached 14 million in 2023, up about 35% year over year, and that pace forces engineering leaders to validate more variants with fewer lab hours. The practical takeaway is simple: real-time simulator selection works best when you treat timing, I/O, and model scope as system requirements, then pick the HIL platform that meets them with margin.

Procurement checklists often start with CPU cores, channel counts, and price, then hope integration will sort itself out later. That order creates rework because electrified powertrains couple fast-switching power electronics with slower mechanical and thermal dynamics, and the closed loop makes timing nonnegotiable. You’ll get better outcomes when you lock down test cases first, then map those tests to determinism, fidelity, and interfaces. The result is a purchase that stays stable as your lab grows.

Choose the real-time simulator that matches your lab’s hardest timing problem.

Start with electrification lab test cases and success metrics

Your first job is to define what must be proven in the electrification lab, then turn that into measurable pass and fail outcomes. A simulator choice becomes straightforward when every requirement traces to a test case, a signal list, and a timing budget. Success metrics should cover technical validity, operator workflow, and repeatability across benches. This approach stops feature shopping and sets clear acceptance criteria.
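The traceability rule above can be checked mechanically before any vendor conversation starts. The following is a minimal sketch, assuming requirements are kept as simple records; the `Requirement` fields, the IDs, and the signal names are hypothetical and only illustrate the idea that every requirement needs a test case, a signal list, and a timing budget.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Requirement:
    """One simulator requirement (hypothetical record layout)."""
    req_id: str
    test_case: Optional[str]           # named test that proves the requirement
    signals: Tuple[str, ...]           # signals the test needs on the bench
    timing_budget_us: Optional[float]  # loop timing budget in microseconds


def untraceable(requirements):
    """Return IDs of requirements missing a test case, signal list, or timing budget."""
    return [
        r.req_id
        for r in requirements
        if not r.test_case or not r.signals or r.timing_budget_us is None
    ]


# Illustrative entries only; none of these come from a real program.
requirements = [
    Requirement("REQ-001", "TC-overcurrent-trip", ("i_phase_a", "gate_enable"), 50.0),
    Requirement("REQ-002", None, ("v_dc",), 100.0),
    Requirement("REQ-003", "TC-torque-step", (), None),
]

print(untraceable(requirements))
```

Any ID this returns is a requirement that cannot yet be turned into an acceptance criterion, which makes it a candidate for feature shopping rather than a real need.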

Electrification targets are tied to emissions outcomes, so validation needs to be defensible and repeatable. Transportation accounted for 28% of U.S. greenhouse gas emissions in 2022, and teams feel that pressure as efficiency, diagnostics, and safety requirements tighten. Your metrics should reflect that reality, with items like control stability under fault conditions, protection response time, and measured efficiency at defined operating points. Each metric should also state how it will be measured, including sampling, filtering, and signal scaling.

Lab leaders also need operational metrics that keep test throughput steady. Define how long model compilation can take, how fast a bench can be reset, and what “good data” looks like for automated reporting. Add constraints that teams forget until late, like hardware safety interlocks, electrical isolation, and calibration steps that techs will repeat every day. The simulator that fits your test list will fit your staffing model and your schedule.

What real-time simulator capabilities matter for electrified powertrains

The most important simulator capabilities for electrified powertrains are deterministic time steps, stable solvers for stiff systems, and I/O that matches control hardware behaviour. You need compute resources that align with both fast power electronics and slower plant dynamics. You also need signal conditioning and protection that suits high-voltage lab setups. Capability gaps show up as unstable loops, missed faults, or results that can’t be reproduced.

Start with the plant parts you must represent, then map each one to compute and I/O needs. Power electronics models stress time-step determinism and numerical stability, while motor and mechanical parts stress continuous dynamics and parameter sweeps. Battery and charging models add modes, limits, and state estimation interactions that can break timing if they are bolted on late. Grid interaction adds synchronization and disturbance injection requirements that can force new interfaces.

Then look at the features that make day-to-day testing reliable. Fault insertion should be controllable, repeatable, and logged, not improvised with wiring changes. The platform should support synchronized logging with a time base you trust, because trace alignment becomes the difference between a clear root cause and an argument. Finally, hardware modularity matters because electrification programs rarely stay the same size for long, and a fixed chassis can become a constraint quickly.

Match model fidelity and solver speed to switching dynamics

Model fidelity is only useful when it supports a decision you must make, and solver speed is only useful when it preserves closed-loop behaviour. Power electronics often tempt teams into the highest-fidelity switching models everywhere, then timing breaks and compromises happen under pressure. The better approach is to assign fidelity levels per test case and keep strict boundaries between what must be switching-accurate and what can be averaged. The simulator should let you place the right model at the right time scale.

A traction inverter control test makes the tradeoff obvious because the controller reacts to switching behaviour and dead-time effects. An inverter switching at 20 kHz has a 50 µs switching period, so representing device-level transitions pushes you toward sub-microsecond simulation steps, and that load can exceed a general-purpose CPU without hardware acceleration. You can still keep the rest of the plant at a slower step if the platform supports multirate execution with deterministic synchronization. That single choice often determines whether you buy more compute, change model detail, or adjust what you validate in HIL.
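The arithmetic behind the 20 kHz example is worth writing down. The sketch below is illustrative only: the 20 kHz figure is from the text, while the candidate step sizes and the 1 µs dead time are assumed values chosen to show how step size limits what the model can resolve.

```python
# Illustrative arithmetic only; dead time and candidate steps are assumed values.
F_SW_HZ = 20_000       # switching frequency from the text
DEAD_TIME_NS = 1_000   # hypothetical dead time, not taken from the source


def step_summary(step_ns, f_sw_hz=F_SW_HZ, dead_time_ns=DEAD_TIME_NS):
    """Relate a simulation step to the switching period and dead time."""
    period_ns = round(1e9 / f_sw_hz)  # 50_000 ns at 20 kHz
    return {
        "step_ns": step_ns,
        "steps_per_period": period_ns / step_ns,
        # Smallest duty-cycle change the model can represent, in percent.
        "duty_resolution_pct": 100 * step_ns / period_ns,
        "steps_across_dead_time": dead_time_ns / step_ns,
    }


for step_ns in (25_000, 1_000, 250):
    print(step_summary(step_ns))
```

With a 25 µs step there are only two steps per switching period, so switching edges cannot be placed meaningfully. A 250 ns step gives 200 steps per period and a 0.5% duty resolution, with four steps across the assumed dead time, which is the kind of resolution the sub-microsecond recommendation is aiming at.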

Fidelity also affects how confident you’ll be when faults occur. High-detail switching models can show current spikes and protection triggers that averaged models hide, but they also increase tuning time and raise sensitivity to numerical settings. Averaged models can be the correct tool when you’re validating energy flow, thermal limits, or supervisory logic, because they keep timing stable and run repeatably. The platform should make those swaps controlled and traceable so results stay consistent across engineers and benches.

Specify I/O latency and timing for closed-loop HIL

You need a clear budget for sensor input latency, compute time, output update time, and jitter across the entire loop. Determinism matters because the controller is responding to timing patterns, not just signal values. A good requirement set describes timing in measurable terms you can test at acceptance.

Latency is rarely one number, so define it the same way you plan to measure it. Include the conversion chain, scaling, filtering, and any protocol stacks used for control communication. Jitter must be treated as a first-class parameter because a system that “usually” meets a deadline still fails in edge cases, and those edge cases tend to align with faults and transients. Clock alignment across devices matters as much as average latency when you correlate controller response to plant behaviour.
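A timing budget and a jitter check can both be expressed in a few lines, which helps keep the requirement testable at acceptance. This is a minimal sketch under stated assumptions: the stage names, the per-stage values, the 20 µs deadline, and the measured loop times are all hypothetical placeholders for your own numbers.

```python
import statistics

# Hypothetical per-stage budget for one closed-loop iteration, in microseconds.
budget_us = {
    "adc_conversion": 2.0,
    "scaling_and_filtering": 1.0,
    "model_compute": 10.0,
    "dac_update": 2.0,
}
LOOP_DEADLINE_US = 20.0  # assumed deadline


def budget_margin(stages, deadline_us):
    """Remaining margin after summing every stage in the loop."""
    return deadline_us - sum(stages.values())


def jitter_report(loop_times_us, deadline_us):
    """Summarize measured loop times; the worst case matters more than the mean."""
    worst = max(loop_times_us)
    return {
        "mean_us": statistics.fmean(loop_times_us),
        "worst_us": worst,
        "peak_to_peak_jitter_us": worst - min(loop_times_us),
        "overruns": sum(t > deadline_us for t in loop_times_us),
    }


# Hypothetical measurements: the mean looks healthy, one sample misses the deadline.
measured_us = [14.8, 15.1, 15.0, 14.9, 21.3, 15.2]

print(budget_margin(budget_us, LOOP_DEADLINE_US))
print(jitter_report(measured_us, LOOP_DEADLINE_US))
```

The sample data is built to show the failure mode described above: the mean sits well inside the deadline while a single iteration overruns it, so a requirement written only in terms of average latency would pass a platform that fails in the edge case.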

Timing specifications should also account for how the lab runs under load. Logging, visualization, and automated test execution can steal compute cycles if they share resources with the real-time task. Separate those concerns in your requirements so you can demand predictable behaviour during long runs, not just during short demos. When timing is specified clearly, vendor claims become testable, and you can reject platforms that only work in ideal settings.

Closed-loop HIL lives or dies on end-to-end timing, not on raw compute alone.

Check toolchain fit and openness across your control stack

Toolchain fit is the difference between a simulator that becomes a standard lab asset and one that stays isolated to a specialist team. You need a workflow that supports model import, code integration, test automation, and version control without fragile manual steps. Openness matters because electrification programs mix tools, teams, and suppliers, and lock-in shows up as schedule friction. The best HIL platform will let you integrate what you already use while keeping test artifacts portable.

Start with the interfaces you’ll depend on for daily work. Model exchange support should be based on common standards, and integration should not require rewriting large blocks of control code just to run in real time. APIs for automation matter because test labs increasingly run overnight regression suites, and manual clicking does not scale. Logging formats should be usable outside the simulator so analysis teams can use their preferred data tools.

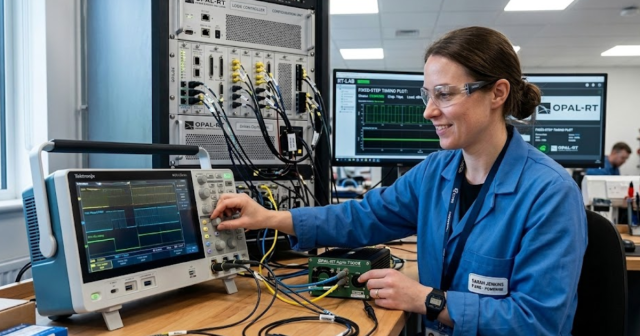

Support for customization also needs boundaries, because “open” can turn into “you own the integration forever” if the platform lacks maintained drivers and clear documentation. Teams that deploy OPAL-RT systems often focus on keeping that boundary explicit, with stable interfaces for models and I/O while reserving deep customization for the few places it adds measurable value. Your evaluation should ask what stays stable across software updates, what must be retested, and how rollback works when a lab run is on the line. Those details determine how often the simulator becomes the bottleneck instead of the system under test.

Reduce procurement risk using benchmarks, pilots, and acceptance criteria

Procurement risk drops when you treat simulator selection like verification, with benchmarks tied to your test cases and acceptance criteria that can’t be gamed. A pilot should reproduce your timing budget, model load, and I/O chain under conditions that resemble daily lab operation. Acceptance should include repeatability across runs, not just one successful demo. This mindset turns the purchase into a controlled handoff instead of a leap of faith.

- Measure end-to-end loop timing and jitter under full logging load

- Run your heaviest model set for hours without overruns

- Verify I/O scaling and calibration procedures are repeatable

- Confirm automated test execution works with your lab tooling

- Prove recovery steps after faults are safe and consistent
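The pilot checks listed above are easier to enforce when the pass/fail rule is scripted rather than judged by eye. The sketch below assumes each soak run produces an overrun count and a worst-case loop latency; the `RunResult` record, the thresholds, and the run data are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunResult:
    """Summary of one pilot soak run (hypothetical record layout)."""
    run_id: str
    overruns: int
    worst_latency_us: float


def acceptance_failures(runs, latency_budget_us, max_spread_us):
    """Return human-readable failures; an empty list means the pilot passed."""
    if not runs:
        return ["no runs recorded"]
    failures = []
    for run in runs:
        if run.overruns > 0:
            failures.append(f"{run.run_id}: {run.overruns} overrun(s)")
        if run.worst_latency_us > latency_budget_us:
            failures.append(
                f"{run.run_id}: worst latency {run.worst_latency_us} us exceeds budget"
            )
    # Repeatability: worst-case latency should not wander between runs.
    worst = [run.worst_latency_us for run in runs]
    if max(worst) - min(worst) > max_spread_us:
        failures.append("run-to-run spread exceeds tolerance")
    return failures


# Illustrative data: two clean runs and one run that overran.
runs = [
    RunResult("soak-1", 0, 17.2),
    RunResult("soak-2", 0, 17.5),
    RunResult("soak-3", 2, 23.9),
]
failures = acceptance_failures(runs, latency_budget_us=20.0, max_spread_us=1.0)
for line in failures:
    print(line)
```

The spread check is the part that encodes repeatability across runs: a platform that passes each run individually but drifts between them would still be flagged.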

| Selection checkpoint | What you must be able to verify before purchase |
| --- | --- |
| Test-case traceability | Every simulator requirement maps to a named test and measurable metric. |
| Compute and fidelity fit | The platform holds real-time deadlines with your chosen model detail. |
| Closed-loop timing | Latency and jitter stay within budget during long automated runs. |
| Integration openness | Models, code, and data move through standard interfaces without rewrites. |
| Operational reliability | Bench reset, calibration, and fault recovery steps are documented and repeatable. |
| Scaling readiness | Adding benches does not force new architectures or inconsistent workflows. |

Benchmarks should also test the boring parts that cause delays. Include install time, configuration management, access control, and the ability to reproduce a setup from scratch when a PC fails. Ask for clear definitions of what support covers and what counts as custom engineering, because that boundary affects cost and schedule more than a spec sheet does. When acceptance criteria are written tightly, procurement becomes a technical control, not an administrative step.

Plan scaling and support needs for multi-bench labs

Scaling is a lab design problem, not a purchasing afterthought. Multi-bench operation requires consistent configuration, repeatable calibration, and a support model that keeps benches running when people rotate. You should plan how models, test scripts, and signal maps will be shared without accidental drift. The simulator that scales cleanly will reduce variance across teams and shorten troubleshooting cycles.

Operational consistency starts with configuration control that matches how your lab works. Standardize naming, channel mapping, and safety checks so a test run means the same thing on every bench. Plan for spare parts, hardware swaps, and upgrade windows, and make sure those plans do not require heroics from one engineer. Training also matters, because a tool that only experts can operate becomes a hidden capacity limit.
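Configuration drift between benches is one of the easier things to detect automatically. As a minimal sketch, assume each bench exports its signal-to-channel map as a plain dictionary; the signal names and channel labels below are hypothetical.

```python
def find_drift(reference, bench):
    """Return {signal: (reference_channel, bench_channel)} for every mismatch.

    A value of None means the signal is missing on that side.
    """
    drift = {}
    for signal in sorted(reference.keys() | bench.keys()):
        expected, actual = reference.get(signal), bench.get(signal)
        if expected != actual:
            drift[signal] = (expected, actual)
    return drift


# Hypothetical lab standard and one bench's exported map.
reference_map = {"i_phase_a": "AI0", "i_phase_b": "AI1", "v_dc": "AI2", "gate_enable": "DO0"}
bench_3_map = {"i_phase_a": "AI0", "i_phase_b": "AI3", "v_dc": "AI2", "resolver_sin": "AI4"}

print(find_drift(reference_map, bench_3_map))
```

Running a check like this before each regression campaign turns "a test run means the same thing on every bench" from a convention into something that can be verified.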

Leaders should judge platforms on how calmly they run at scale, not on how impressive a single bench looks in a demo. A disciplined selection process will keep the HIL platform stable while the powertrain program shifts, and that stability shows up as predictable test throughput and cleaner root-cause work. Teams using OPAL-RT often formalize that discipline with clear timing budgets, scripted acceptance tests, and shared lab standards, and the same execution habits apply no matter which simulator you choose. The lab that wins is the one that treats repeatability as a product feature you own and protect.
