Real-Time Simulation For Cyber-Physical Systems Validation

02 / 15 / 2026

Key Takeaways

- Real-time simulation is the most direct way to validate cybersecurity controls against physical limits and control deadlines, so cyber outcomes are judged on system behaviour, not alerts.

- Pass or fail needs measurable criteria that link cyber actions to power performance, including stability margins, protection behaviour, and time to detect and block unsafe commands.

- Closed-loop SIL and HIL testing only holds up when your lab is deterministic, network effects are modelled honestly, and time stamps stay aligned across simulation, devices, and traffic capture.

Cyber-physical systems fail when timing, control logic, and network behaviour collide, so cybersecurity testing has to exercise all three on the same clock. Reported cybercrime losses reached $12.5B in 2023, and that scale of harm makes “checklist security” hard to justify for systems that can trigger outages or equipment damage. Real-time simulation gives you a repeatable lab method to see what an attack does to voltage, frequency, protection, and control stability while the attack is still unfolding. That tight coupling between cyber events and physical response is the main reason it works for cyber-physical systems validation.

Offline studies and isolated network tests still matter, but they miss the closed-loop behaviour that turns a cyber event into a physical consequence. The practical goal is not to build a perfect digital replica of every component. The goal is to run the parts that shape timing and control response under the same constraints your system faces, then judge defences using measurable power-system outcomes.

Real-time simulation lets you prove cyber defences without guessing physical impact.

Real-time simulation links cyber events to physical response

Real-time simulation runs a power system model on a fixed clock while external controllers and networks interact with it. Each time step has a strict deadline, so control actions, communications delays, and physical dynamics stay aligned. Cyber events can be injected at the network or control interface without pausing the physics. You get a single timeline that shows cause and effect.

That single timeline matters because many cyber failures are timing failures. A command that arrives 200 milliseconds late can be harmless in an IT test, yet destabilizing in a control loop. A protection message that is replayed with the wrong sequence can look like a benign packet trace, yet it can trip equipment in a simulator that honors relay logic and breaker dynamics. Real-time simulation cybersecurity research stays grounded because it forces every defensive control to “pay the timing bill” of detection, decision, and action.
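The effect of a late command can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration and none of it comes from a real system: the plant is a bare integrator, `x` is a normalized deviation from the setpoint, the controller is proportional with a gain of 10, and the control step is 10 ms. The only thing that changes between runs is how many steps a command spends in transit.

```python
from collections import deque


def simulate_delayed_loop(delay_steps, gain=10.0, dt=0.01, steps=1000, x0=1.0):
    """Integrator plant under proportional feedback with a command delay.

    Illustrative assumptions: x is a normalized deviation from the setpoint,
    dt is a 10 ms control step, and each command reaches the plant
    delay_steps steps after the controller computed it.
    """
    x = x0
    in_flight = deque([0.0] * delay_steps)  # commands still on the network
    trace = []
    for _ in range(steps):
        in_flight.append(-gain * x)  # controller reacts to the current measurement
        u = in_flight.popleft()      # plant receives the oldest queued command
        x += dt * u                  # integrator plant: dx/dt = u
        trace.append(x)
    return trace


def tail_peak(trace, window=200):
    """Largest absolute deviation over the last `window` steps."""
    return max(abs(v) for v in trace[-window:])
```

With these assumed numbers, `tail_peak(simulate_delayed_loop(2))` (a 20 ms delay) settles to essentially zero, while `tail_peak(simulate_delayed_loop(20))` (a 200 ms delay) keeps growing. The controller and the plant are identical in both runs; only the delivery time differs, which is why a packet trace alone cannot tell you whether a delay is harmless.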

Accuracy also becomes a management choice instead of a philosophical debate. You can spend fidelity on what shapes the physical response, such as generator dynamics, inverter controls, and relay logic. You can simplify what has little effect on the security question, as long as the simplification is explicit and tested against your metrics.

Validation goals for cyber-physical systems in power operations

Cyber-physical systems validation means demonstrating that your system stays within defined physical and operational limits during hostile or faulty cyber conditions. The target is not just blocking an intrusion. The target is maintaining safe control behaviour, preventing unsafe switching, and restoring stable operation after a bad command or corrupted message. Validation succeeds only when you can state pass or fail criteria before the test runs.

Clear goals keep power system cybersecurity simulation from turning into endless scenario hunting. Start with outcomes that map to operations, protection, and safety, then connect each outcome to a test you can repeat. This approach also keeps teams aligned because cybersecurity staff can see the physical stakes, while power engineers can see the cyber assumptions.

- Define physical safety limits for voltage, frequency, and thermal loading.

- Set acceptable time to detect, alarm, and block unsafe commands.

- Identify protection behaviours that must not misoperate during attacks.

- Choose recovery targets such as stable reconnection and setpoint restoration.

- Specify evidence you will accept such as logs, traces, and metrics.

Once these are written down, the test scope gets easier to control. You can focus on the few pathways that can cross those limits, instead of treating every vulnerability as equally important.
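One way to write the goals above down is as data that a test harness can evaluate after every run. The sketch below is a minimal illustration that covers only some of the listed goals. The class names, field names, and every limit value are placeholders assumed for the example, not recommended settings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValidationCriteria:
    """Pass/fail limits for one scenario. Every number is a placeholder."""
    v_min_pu: float = 0.90
    v_max_pu: float = 1.10
    f_min_hz: float = 59.5
    f_max_hz: float = 60.5
    max_block_time_ms: float = 20.0
    max_relay_misoperations: int = 0


@dataclass(frozen=True)
class RunResult:
    """Summary of one test run, extracted from logs and traces."""
    v_min_pu: float
    v_max_pu: float
    f_min_hz: float
    f_max_hz: float
    block_time_ms: float
    relay_misoperations: int


def evaluate(result, criteria):
    """Return the names of violated criteria; an empty list means pass."""
    failures = []
    if result.v_min_pu < criteria.v_min_pu or result.v_max_pu > criteria.v_max_pu:
        failures.append("voltage")
    if result.f_min_hz < criteria.f_min_hz or result.f_max_hz > criteria.f_max_hz:
        failures.append("frequency")
    if result.block_time_ms > criteria.max_block_time_ms:
        failures.append("block_time")
    if result.relay_misoperations > criteria.max_relay_misoperations:
        failures.append("relay_misoperation")
    return failures
```

Returning the list of violated criteria, instead of a single boolean, keeps the physical and cyber reasons for a failure visible to both teams.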

Building a closed-loop testbed with HIL and SIL

A closed-loop testbed combines software-in-the-loop for algorithms and hardware-in-the-loop for real devices under test, all synchronized to a real-time simulator. SIL checks control code logic and interfaces early, while HIL checks timing, I/O behaviour, and device quirks that software models miss. Both are useful, but HIL is where many cyber-physical failure modes show up.

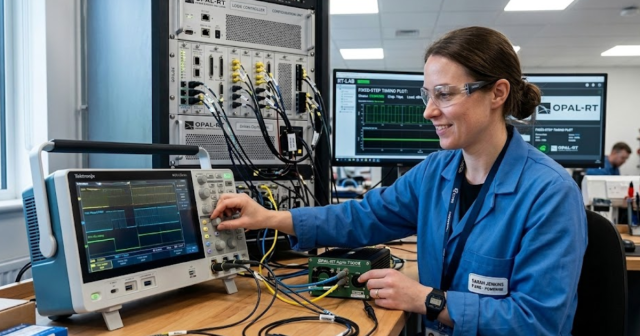

Good testbeds separate three things cleanly: the physical plant model, the control hardware and software, and the communications path where attacks occur. You also need repeatable initialization so each run starts from the same operating point, otherwise security results become hard to compare. Platforms such as OPAL-RT real-time digital simulators are commonly used here because they can run power network models in real time while interfacing with external controllers and network gear through standard I/O.

| Checkpoints that keep tests credible | What you should verify before security runs |
| --- | --- |
| Deterministic time step matches control and protection deadlines | Worst-case execution time stays below the step time |
| Network delay and loss are modelled and logged consistently | Traffic shaping rules are identical across repeated runs |
| I/O scaling and signal conditioning match device expectations | Analog and digital ranges are validated with known stimuli |
| System resets return to a known electrical operating point | Initial conditions are captured and reapplied automatically |
| Metrics capture both cyber timing and physical limit violations | Time stamps stay aligned across simulator, network, and devices |
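The first checkpoint in the table can be checked from logged per-step execution times. The sketch below assumes you already have those measurements in microseconds. The function name, the returned keys, and the 80% headroom margin are all assumptions made for illustration. A maximum over measured samples is also only an observed worst case, not a proven bound.

```python
def check_step_budget(exec_times_us, step_us, margin=0.8):
    """Summarize measured per-step execution times against the step deadline.

    exec_times_us: measured execution time of each simulation step (microseconds)
    step_us: fixed simulation time step (microseconds)
    margin: assumed fraction of the step that computation may consume
    """
    if not exec_times_us:
        raise ValueError("no execution times recorded")
    worst = max(exec_times_us)
    overruns = sum(1 for t in exec_times_us if t > step_us)
    return {
        "worst_case_us": worst,        # observed worst case, not a proven bound
        "overruns": overruns,          # steps that missed the deadline outright
        "within_budget": worst <= margin * step_us,
    }
```

For example, `check_step_budget([30.0, 35.5, 32.1], step_us=50.0)` reports no overruns and stays within the assumed budget, while a single 55 µs step against the same 50 µs deadline would count as an overrun and fail the check.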

Modelling threats and defensive controls under timing constraints

Threat modelling in real time means you inject hostile actions at the same interfaces your system trusts, then observe physical and operational effects on the same clock. Attacks can target control commands, measurement integrity, time synchronization, or protection messaging. Defensive controls also need timing realism, since filtering, anomaly detection, and interlocks must act within control deadlines. The test is only valid when both offense and defense run under the same time pressure.

A concrete way to run this is a substation protection setup where an attacker spoofs a high-priority trip message on the station network, while your defensive logic attempts to validate message sequence and source before allowing the trip to propagate. The simulator runs the feeder and breaker dynamics in real time, so you see the difference between a blocked message that preserves voltage stability and an accepted message that causes a step change in load flow. That single run produces both cyber evidence, such as packet captures and detection timing, and physical evidence, such as breaker state and voltage sag duration.
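The defensive logic in that scenario could be prototyped as a small gate that sits between the station network and the breaker model. The sketch below is hypothetical throughout: `TripMessage`, `TripGate`, and their fields are invented for illustration and do not follow any real protection protocol. It checks only that the publisher is on an allow-list and that the sequence number is fresh.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class TripMessage:
    """Hypothetical trip message; field names are illustrative, not a protocol."""
    source: str
    seq: int
    t_rx_ms: float  # receive time on the shared test clock


@dataclass
class TripGate:
    """Accept a trip only from an allow-listed source with a fresh sequence number."""
    allowed_sources: frozenset
    last_seq: dict = field(default_factory=dict)

    def accept(self, msg):
        if msg.source not in self.allowed_sources:
            return False  # unknown publisher: treat as spoofed
        if msg.seq <= self.last_seq.get(msg.source, -1):
            return False  # repeated or stale sequence number: treat as replay
        self.last_seq[msg.source] = msg.seq
        return True
```

A gate this simple stops a verbatim replay and an unknown publisher, but not an attacker who forges an allow-listed source name with a higher sequence number. That limitation is the kind of result a closed-loop run is meant to expose, because the breaker state shows whether the accepted message mattered.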

Timing constraints also create tradeoffs you have to accept openly. High-fidelity encryption stacks or deep packet inspection can be too heavy for strict control cycles, so you might prototype simpler gating logic first, then test richer analytics out of band. The value comes from measuring what your controls actually do under deadlines, not what they claim to do in a requirements document.

Measuring cybersecurity outcomes with power system performance metrics

Cybersecurity results become actionable when you score them with power-system metrics, not just security alerts. You should track stability and safety indicators, plus timing indicators that show how quickly defences react relative to the control loop. Pass or fail should be tied to limits such as voltage deviation, frequency nadir, relay misoperations, and time to block or isolate unsafe actions. Metrics turn cyber-physical systems validation into a repeatable engineering process.
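A few of these metrics can be computed directly from logged traces. The sketch below assumes uniformly sampled frequency and voltage traces and event times taken from a shared clock. The function names and the 0.9 pu sag threshold are assumptions for illustration.

```python
def frequency_nadir(freq_hz):
    """Lowest frequency sample in the trace."""
    return min(freq_hz)


def longest_sag_ms(voltage_pu, dt_ms, threshold_pu=0.9):
    """Longest continuous time the voltage stays below threshold_pu."""
    longest = current = 0
    for v in voltage_pu:
        current = current + 1 if v < threshold_pu else 0  # extend or reset the run
        longest = max(longest, current)
    return longest * dt_ms


def time_to_block_ms(t_attack_ms, t_block_ms):
    """Delay from attack injection to the defence blocking it; None if never blocked."""
    if t_block_ms is None:
        return None
    return t_block_ms - t_attack_ms
```

Dividing the time to block by the control step length expresses the same result in control cycles, which is often the easier number to compare against protection coordination times.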

Financial stakes are tied to physical continuity, so metrics should reflect that operational priority. Power outages have been estimated to cost U.S. electricity customers about $44B per year, and a cyber-triggered disturbance is judged by the same customer impact. A defence that detects an intrusion but still allows an unstable control action is a failed defence for operations, even if the security dashboard looks good.

Metric selection works best when you separate three layers. First, set physics limits that cannot be crossed. Second, set timing limits for detection and blocking, expressed relative to your control step and protection coordination times. Third, set evidentiary requirements so you can explain results to both engineering and security teams without hand-waving.

Common testbed mistakes and how to choose tools confidently

Most failed cyber-physical tests fail for mundane reasons such as inconsistent timing, unclear scope, or missing ground truth. Unrealistic network assumptions can hide the very delays that trigger instability. Poor reset and initialization practices turn tests into one-off demonstrations. Tool choices should be judged by determinism, interface coverage, automation support, and how clearly results can be audited.

Several mistakes show up repeatedly. Teams often treat the simulator as “the plant” and ignore I/O calibration, so device behaviour looks stable until hardware is connected. Others overfit to a single attack script and then can’t explain why a small variation changes outcomes. Some labs capture packets but fail to align time stamps, which makes root cause analysis feel like guesswork. These are execution problems, not theory problems, and they are fixable with disciplined setup and measurement.
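Time stamp alignment is one of the easier problems to address in code. A minimal sketch, assuming the simulator log and the packet capture both record the same marker events (for example a deliberate sync pulse at the start of each run) and that the two clocks differ by a constant offset with negligible drift over the run. The function names are hypothetical.

```python
from statistics import median


def estimate_offset(sim_marker_ts, capture_marker_ts):
    """Estimate (capture clock - simulator clock) from shared marker events.

    Both lists hold timestamps in seconds of the same marker events, in the
    same order, as seen by the simulator log and by the packet capture.
    """
    if len(sim_marker_ts) != len(capture_marker_ts) or not sim_marker_ts:
        raise ValueError("marker lists must be non-empty and the same length")
    diffs = [c - s for s, c in zip(sim_marker_ts, capture_marker_ts)]
    return median(diffs)  # median resists an occasional late-captured marker


def to_sim_time(capture_ts, offset):
    """Map capture timestamps onto the simulator timeline."""
    return [t - offset for t in capture_ts]
```

If the clocks drift noticeably during a run, a constant offset is not enough, and the markers would need to be repeated throughout the run and fitted with a rate term as well.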

Tool selection gets easier when you ask a simple question: can your testbed prove that a defence preserves physical limits under deadlines, with evidence your peers can reproduce? OPAL-RT fits into that workflow when you need repeatable real-time execution with tight I/O coupling and lab automation, but the stronger lesson is broader. Cyber-physical validation rewards teams that treat timing, metrics, and repeatability as first-class requirements, because that discipline is what turns simulation into confidence you can defend.

EXata CPS is designed for real-time performance so that you can study cyberattacks on power systems through a communication network layer of any size, connected to any number of devices, in HIL and PHIL simulations. It is a discrete-event simulation toolkit that accounts for the physics-based properties that affect how a wired or wireless network behaves.