Real-time simulation for validating autonomous and ADAS systems

Simulation

03 / 27 / 2026

Key Takeaways

- Road and track testing will not cover the long tail of safety-critical cases, so most validation needs to shift into repeatable, instrumented simulation runs.

- Deterministic real-time execution and hardware-in-the-loop testing are required to prove timing, latency, and I/O behaviour with production ECUs.

- Select ADAS simulation solutions based on closed-loop determinism, interface fidelity, scenario control, and traceable evidence, then run disciplined regressions to avoid false confidence.

Physical testing still matters, but it cannot carry the full validation load for advanced driver assistance systems and autonomous driving. The combination of rare safety-critical situations, tight release schedules, and complex sensor stacks forces teams to shift most learning into an ADAS simulation workflow that runs continuously and can be repeated on demand. A 2016 RAND analysis estimated about 11 billion miles of driving would be required to demonstrate, with high statistical confidence, that an automated vehicle is safer than a human driver.
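Even a weaker criterion than the one behind the RAND figure produces large numbers. Suppose failures follow a Poisson process. Then driving n miles with zero failures supports, at confidence C, the claim that the failure rate is at most λ when e^(-λn) ≤ 1 - C, which gives n ≥ -ln(1 - C) / λ. The sketch below evaluates that bound. The benchmark of about 1.09 fatalities per 100 million miles is an assumed input, roughly the US human-driver rate in the early 2010s. The result only supports "no worse than" that benchmark. Showing a margin of improvement, as in the RAND estimate, requires far more driving.

```python
import math

def failure_free_miles(rate_per_mile: float, confidence: float) -> float:
    """Miles that must be driven with zero failures to claim, at the given
    confidence, that the true failure rate is at most rate_per_mile.

    Assumes a Poisson failure process: P(0 failures in n miles) = exp(-rate * n).
    Setting that probability to (1 - confidence) gives n = -ln(1 - confidence) / rate.
    """
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be in (0, 1)")
    if rate_per_mile <= 0.0:
        raise ValueError("rate_per_mile must be positive")
    return -math.log(1.0 - confidence) / rate_per_mile

# Assumed benchmark: about 1.09 fatalities per 100 million vehicle miles.
HUMAN_FATALITY_RATE = 1.09 / 100_000_000

miles = failure_free_miles(HUMAN_FATALITY_RATE, confidence=0.95)
print(f"{miles / 1e6:.0f} million failure-free miles")
```

Under these assumptions the bound comes to roughly 275 million failure-free miles. That is already impractical for a fleet that must also be retested after every software change.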

“Real-time simulation will give you repeatable ADAS validation without unsafe on-road exposure.”

The practical stance is simple: on-road tests should confirm what you already understand, while deterministic real-time simulation and hardware-in-the-loop testing should find the faults, tune the controls, and stress the edge conditions early. Teams that treat an autonomous driving simulator as a primary validation tool ship with fewer late surprises, cleaner safety arguments, and tighter traceability from requirement to evidence.

Validation targets outpace what road testing can cover

Road testing cannot scale to the combination of coverage, repeatability, and observability that ADAS validation needs. You can’t schedule rare interactions on demand, you can’t replay the same centimetre-level scenario after a code change, and you can’t safely push failures to the limit. Physical fleets also blur causality because traffic, weather, and driver inputs never line up twice.

Validation targets also keep expanding beyond “works on a sunny day” checks. You’re expected to show performance across sensor variance, vehicle loading, actuator ageing, and different operational design domains, all while keeping a tight audit trail. Those expectations make the mileage problem less about kilometres and more about combinatorics, where a small set of variables creates thousands of meaningful test points.
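A full-factorial count makes the combinatorics point concrete. The parameter names and levels below are hypothetical. They were chosen only to show how quickly a handful of variables multiplies into hundreds of test points, before any actor behaviour is varied.

```python
from itertools import product
from math import prod

# Hypothetical scenario axes and levels, for illustration only.
SCENARIO_AXES = {
    "ego_speed_kph": [30, 50, 70, 90, 110],
    "road_friction": [0.9, 0.6, 0.3],
    "lead_decel_mps2": [2.0, 4.0, 6.0, 8.0],
    "sensor_noise": ["nominal", "degraded"],
    "vehicle_load": ["empty", "full"],
    "lighting": ["day", "dusk", "night"],
}

def count_test_points(axes: dict) -> int:
    """Full-factorial size: the product of the number of levels on each axis."""
    return prod(len(levels) for levels in axes.values())

def enumerate_test_points(axes: dict):
    """Yield every combination as a dict, in a stable, repeatable order."""
    names = list(axes)
    for values in product(*(axes[name] for name in names)):
        yield dict(zip(names, values))

print(count_test_points(SCENARIO_AXES))  # 5 * 3 * 4 * 2 * 2 * 3 = 720
```

Adding one more five-level variable, such as the actor's cut-in timing, raises the total to 3,600. This is why teams usually move from exhaustive grids to structured sampling plus a coverage model.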

Simulation closes the gap when you treat it as a measurement system, not a video game. You instrument every signal, record exact timestamps, and attach pass/fail logic to requirements. That turns validation into an engineering loop you can run every night, rather than a calendar event that depends on track time, drivers, and weather windows.
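One way to attach pass/fail logic to requirements is to make each requirement a small, machine-checkable object and evaluate it against the timestamped log. The requirement ID, signal name, and threshold below are hypothetical. The verdict records where and when the worst case occurred, which is the information a failure investigation needs.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Requirement:
    req_id: str        # hypothetical requirement identifier
    signal: str        # logged signal to check
    min_value: float   # lower bound the signal must never cross

@dataclass(frozen=True)
class Verdict:
    req_id: str
    passed: bool
    worst_value: float
    worst_time_s: float

def evaluate(req: Requirement, log: list[tuple[float, dict[str, float]]]) -> Verdict:
    """Check a lower-bound requirement against a timestamped signal log.

    `log` is a list of (timestamp_s, {signal_name: value}) samples.
    """
    samples = [(t, sig[req.signal]) for t, sig in log if req.signal in sig]
    if not samples:
        raise ValueError(f"signal {req.signal!r} not found in log")
    worst_t, worst_v = min(samples, key=lambda sample: sample[1])
    return Verdict(req.req_id, worst_v >= req.min_value, worst_v, worst_t)

log = [
    (0.00, {"gap_m": 25.0}),
    (0.10, {"gap_m": 12.4}),
    (0.20, {"gap_m": 4.1}),
    (0.30, {"gap_m": 3.8}),
    (0.40, {"gap_m": 5.2}),
]
verdict = evaluate(Requirement("REQ-AEB-012", "gap_m", 2.0), log)
print(verdict)
```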

| Test approach | What it proves well | What it struggles to prove |
| --- | --- | --- |
| On-road fleet testing | Integration stability, comfort tuning, and long-duration reliability | Repeatable edge cases and safe fault injection at the limits |
| Track testing | Controlled manoeuvres with known targets and measured distances | High-variety interactions and long-tail situations without heavy setup |
| Software-in-the-loop simulation | Algorithm logic checks and fast regression against recorded data | Timing, I/O jitter, and ECU-specific effects |
| Real-time simulator with hardware-in-the-loop | Closed-loop timing correctness with real ECUs and real interfaces | Perception errors caused by imperfect sensor physics, if models are weak |
| Vehicle-in-the-loop lab setups | System-level interactions with a physical vehicle and controlled stimuli | Cost, footprint, and scaling to thousands of scenario variations |

Why ADAS and autonomy need deterministic real-time simulation

ADAS and autonomous stacks need deterministic real-time simulation because timing is part of the function. If your planner, controller, sensors, and actuators do not stay synchronized, the system can look fine in offline replay and still fail in closed-loop driving. Deterministic execution lets you trust that a change in results came from your software, not from variable compute timing.
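Here is a minimal sketch of what determinism means in practice. Simulated time advances in integer ticks of a fixed step, and the controller runs on a fixed tick schedule, so nothing depends on host wall-clock timing. The plant, controller gains, and rates are illustrative assumptions: this is a simple gap-keeping loop, not a production ACC. Rerunning with identical inputs on the same build and platform yields an identical trace.

```python
from dataclasses import dataclass

DT_S = 0.001         # plant step: 1 ms (assumed)
CONTROL_EVERY = 10   # controller period in plant ticks, i.e. 10 ms (assumed)

@dataclass
class State:
    gap_m: float
    ego_speed: float
    lead_speed: float

def run(steps: int, k_gap: float = 0.2, k_speed: float = 0.8,
        target_gap_m: float = 20.0) -> list[float]:
    """Simulate a simple gap-keeping loop on a logical clock.

    Time is the integer tick index, never a wall-clock reading, so two runs
    with the same inputs produce identical traces regardless of host load.
    """
    s = State(gap_m=30.0, ego_speed=25.0, lead_speed=20.0)
    accel_cmd = 0.0
    trace: list[float] = []
    for tick in range(steps):
        if tick % CONTROL_EVERY == 0:
            # Controller samples the state and holds its command until the next period.
            raw = k_gap * (s.gap_m - target_gap_m) + k_speed * (s.lead_speed - s.ego_speed)
            accel_cmd = max(-6.0, min(2.0, raw))  # clamp to plausible accel limits
        # Plant update (explicit Euler) at the fixed step.
        s.ego_speed = max(0.0, s.ego_speed + accel_cmd * DT_S)
        s.gap_m += (s.lead_speed - s.ego_speed) * DT_S
        trace.append(s.gap_m)
    return trace

first = run(30_000)    # 30 s of simulated time
second = run(30_000)
print(first == second, round(first[-1], 2))
```

A real-time target adds pacing against a hardware clock on top of this logical schedule. The fixed schedule is what keeps results reproducible, and the pacing is what keeps them faithful to the ECU's timing.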

Real time matters most once you care about feedback loops. Controller tuning depends on exact delays, perception latency affects braking distance, and scheduling jitter can destabilize handoffs between functions. An ADAS simulator that runs faster than real time can be useful for search and data generation, but you still need a deterministic loop to validate what the ECU will actually do when the vehicle is moving.
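The link between latency and braking distance is a two-term formula. During the end-to-end latency the vehicle keeps its speed, and after that it decelerates. With speed v, latency t_lat, and deceleration a, the distance is d = v·t_lat + v²/(2a). The sketch assumes a constant deceleration of 8 m/s², an illustrative dry-road value. It lumps perception, planning, bus, and actuator delay into a single latency term.

```python
def stopping_distance_m(speed_kph: float, latency_s: float, decel_mps2: float) -> float:
    """Distance travelled from hazard onset to standstill.

    Assumes constant speed during the lumped end-to-end latency,
    then constant deceleration until the vehicle stops.
    """
    v = speed_kph / 3.6  # km/h -> m/s
    return v * latency_s + v * v / (2.0 * decel_mps2)

for latency_ms in (100, 200, 300):
    d = stopping_distance_m(100.0, latency_ms / 1000.0, decel_mps2=8.0)
    print(f"{latency_ms} ms -> {d:.1f} m")
```

At 100 km/h, each extra 100 ms of latency adds about 2.8 m, regardless of how hard the vehicle can brake. Timing jitter therefore shows up directly in safety margins.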

Determinism also supports credible sign-off workflows. You can pin a scenario version, a simulator configuration, and an ECU build hash, then rerun the exact same test months later when a safety case gets reviewed. That style of traceability is hard to achieve when the primary evidence comes from ad hoc road drives and hand-labelled “good run” clips.
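Pinning a run can be as simple as hashing a canonical serialization of its manifest into a run ID. The field names, scenario ID, configuration text, and build hash below are hypothetical placeholders. Any change to the scenario version, simulator configuration, ECU build, or seed produces a different fingerprint, so archived evidence can be matched to the exact setup that produced it.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

def sha256_of_text(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

@dataclass(frozen=True)
class RunManifest:
    scenario_id: str
    scenario_version: str
    simulator_config_sha256: str
    ecu_build_hash: str
    seed: int

    def fingerprint(self) -> str:
        """Stable hash of the manifest: identical inputs give an identical ID."""
        canonical = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return sha256_of_text(canonical)

config_text = "step_us=50\nio_profile=can_2ch\n"  # hypothetical simulator config
m1 = RunManifest("cut_in_highway_017", "v3.2.0", sha256_of_text(config_text), "a1b2c3d", seed=42)
m2 = RunManifest("cut_in_highway_017", "v3.2.0", sha256_of_text(config_text), "a1b2c3d", seed=42)
print(m1.fingerprint()[:12], m1.fingerprint() == m2.fingerprint())
```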

Hardware-in-the-loop closes gaps between models and ECUs

Hardware-in-the-loop testing is the bridge between models that look correct and embedded controllers that behave correctly under timing and I/O constraints. It exposes overflow, quantization, scheduling, bus load, and watchdog behaviour that won’t appear in desktop simulation. It also forces your ADAS simulation to respect the same clocks and interfaces that the vehicle uses.
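The following small illustration shows the kind of effect that desktop floating-point models hide. Suppose, hypothetically, that an ECU stores a distance signal as a signed 16-bit integer at 0.01 m per bit. Values beyond 327.67 m cannot be represented, and very small values quantize to zero. Python integers never overflow, so the sketch emulates 16-bit storage explicitly and contrasts wraparound with saturation.

```python
INT16_MIN, INT16_MAX = -32768, 32767
LSB_M = 0.01  # hypothetical encoding: 1 bit = 0.01 m

def quantize(value_m: float) -> int:
    """Convert a physical value to raw counts, rounding to the nearest LSB."""
    return round(value_m / LSB_M)

def wrap_int16(raw: int) -> int:
    """Two's-complement wraparound, as typically happens when a wider result
    is stored into a 16-bit variable without a range check."""
    return ((raw - INT16_MIN) & 0xFFFF) + INT16_MIN

def saturate_int16(raw: int) -> int:
    """Clamp to the representable range instead of wrapping."""
    return max(INT16_MIN, min(INT16_MAX, raw))

for distance_m in (0.004, 120.0, 400.0):
    raw = quantize(distance_m)
    print(distance_m, wrap_int16(raw) * LSB_M, saturate_int16(raw) * LSB_M)
```

With this encoding, 400 m wraps to roughly -255 m, while saturation pins it at 327.67 m, and 4 mm quantizes to zero. A floating-point desktop model reproduces none of these effects.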

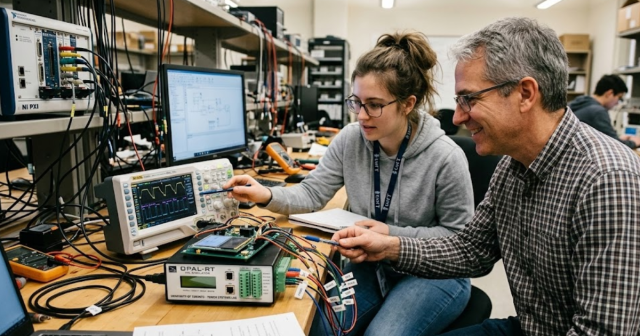

A concrete workflow looks like this: the production ECU running the braking function connects over CAN or automotive Ethernet to a real-time simulator that generates sensor feeds and vehicle dynamics, while fault injection toggles sensor dropouts and timing jitter. Engineers can then validate that the ECU still meets stopping distance and stability limits when the same scenario repeats with controlled parameter sweeps, without putting a test driver at risk.
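The fault-injection step in that workflow can be sketched as a seeded transform over a sampled sensor stream. The fault model is a deliberately crude assumption: independent per-sample dropouts plus a random transport delay of 0 to k steps. What matters is that the same seed replays the same fault pattern, so a failing case from a parameter sweep can be rerun exactly.

```python
import random
from typing import Optional

def inject_faults(samples: list[float], dropout_prob: float,
                  max_delay_steps: int, seed: int) -> list[Optional[float]]:
    """Apply sensor dropouts and random transport delay to a sampled signal.

    Dropout: with probability dropout_prob a sample is lost (None).
    Delay: otherwise the output carries the input from 0..max_delay_steps
    steps earlier. The RNG is seeded, so the fault pattern is repeatable.
    """
    rng = random.Random(seed)
    out: list[Optional[float]] = []
    for i in range(len(samples)):
        if rng.random() < dropout_prob:
            out.append(None)  # sample lost
            continue
        delay = rng.randint(0, max_delay_steps)
        out.append(samples[max(0, i - delay)])  # delayed, possibly stale value
    return out

clean = [float(i) for i in range(20)]  # e.g. a ramping range signal
for p in (0.0, 0.1, 0.3):  # a small dropout-probability sweep
    faulty = inject_faults(clean, dropout_prob=p, max_delay_steps=2, seed=1)
    print(p, sum(x is None for x in faulty))
```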

That “closed loop with real hardware” step is where many autonomy teams find their most expensive late-stage issues. Latency budgets become visible, interface assumptions get corrected, and safety monitors get tuned using measurable triggers instead of guesswork. OPAL-RT systems are often used here because the execution model is built around deterministic real-time I/O, which is what your lab needs when ECUs must run as they do in a vehicle.
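Making latency budgets visible largely means timestamping the stimulus and the ECU response on a common clock, then reporting tail values rather than averages alone. The sketch assumes paired timestamps in seconds and uses a nearest-rank 99th percentile. The response times and budget in the example are hypothetical, and the budget is judged against the worst observed case.

```python
import statistics

def latency_report(stimulus_s: list[float], response_s: list[float],
                   budget_ms: float) -> dict:
    """Summarise end-to-end latency from paired stimulus/response timestamps.

    Assumes both lists share one clock and are paired by index.
    """
    if len(stimulus_s) != len(response_s) or not stimulus_s:
        raise ValueError("need equal-length, non-empty timestamp lists")
    lat_ms = sorted((r - s) * 1000.0 for s, r in zip(stimulus_s, response_s))
    n = len(lat_ms)
    rank = (99 * n + 99) // 100  # nearest-rank p99: ceil(0.99 * n)
    return {
        "mean_ms": statistics.fmean(lat_ms),
        "p99_ms": lat_ms[rank - 1],
        "max_ms": lat_ms[-1],
        "within_budget": lat_ms[-1] <= budget_ms,  # worst case must fit the budget
    }

stim = [0.00, 0.01, 0.02, 0.03, 0.04]
resp = [0.004, 0.015, 0.026, 0.035, 0.052]  # hypothetical ECU response times
print(latency_report(stim, resp, budget_ms=10.0))
```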

Key scenarios that simulators must reproduce for confidence

A simulator builds confidence when it stresses the system at its boundaries, not when it repeats nominal drives. You need coverage across operational design domain limits, sensor uncertainty, and interaction complexity, plus the ability to run fault injection without losing determinism. Confidence comes from showing stable behaviour under controlled variation, then confirming the same patterns during limited physical tests.

Scenario quality depends on three things: fidelity, variability, and observability. Fidelity means the sensor and vehicle models are good enough that control and perception errors show up for the right reasons. Variability means you can sweep initial conditions, actor behaviours, friction, and sensor noise in a structured way. Observability means every internal signal is logged so failures can be explained and fixed.

Human behaviour is a major driver of the situations ADAS must handle, and the statistics underline why. A widely cited NHTSA crash-causation survey assigned the critical reason for about 94% of the crashes it investigated to the driver. A good autonomous driving simulator will pressure-test how your system reacts to unpredictable actions while still keeping the test repeatable, measurable, and safe for your team.
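Human behaviour that is unpredictable yet repeatable is usually produced by sampling actor parameters from a seeded random generator. The cut-in parameters and ranges below are hypothetical. Each seed defines a fixed population of manoeuvres, so a failure found in one sample can be replayed with exactly the same actor behaviour.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class CutIn:
    trigger_gap_m: float       # gap to ego when the actor starts changing lanes
    lateral_duration_s: float  # how long the lane change takes
    brake_after_s: float       # delay before the actor brakes in the ego lane
    brake_decel_mps2: float    # actor braking level

def sample_cut_ins(n: int, seed: int) -> list[CutIn]:
    """Draw n cut-in manoeuvres from assumed parameter ranges.

    Behaviour varies across samples, but a fixed seed reproduces the same set.
    """
    rng = random.Random(seed)
    return [
        CutIn(
            trigger_gap_m=rng.uniform(5.0, 30.0),
            lateral_duration_s=rng.uniform(1.0, 4.0),
            brake_after_s=rng.uniform(0.0, 2.0),
            brake_decel_mps2=rng.uniform(0.0, 6.0),
        )
        for _ in range(n)
    ]

batch = sample_cut_ins(50, seed=7)
print(batch[0])
```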

How to evaluate an ADAS driving simulator stack


An ADAS driving simulator stack is only as strong as its ability to run closed-loop tests, integrate your toolchain, and produce evidence you can defend. You should evaluate it like lab infrastructure, not like a demo. Focus on deterministic timing, interface coverage, scenario management, and data integrity, then confirm that your team can maintain it without heroic effort.

Start from your validation goals and work backward into requirements for compute, fidelity, and connectivity. If your target is ECU-level sign-off, the simulator must support real-time execution with the same buses and timing budgets you’ll see on the bench. If your target is perception development, the simulator must support sensor models, ground truth, and repeatable annotation pipelines that match your metrics.

- Deterministic real-time scheduling with measurable end-to-end latency

- Hardware I/O support for your buses, sensors, and actuator interfaces

- Scenario version control, parameter sweeps, and regression automation hooks

- Clear validity limits for sensor and dynamics models, documented for audits

- Data logging that ties requirements to pass/fail results and traceable builds
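A regression hook can be as simple as comparing each run's summary metrics with a stored baseline under per-metric tolerances. The metric names and tolerance values below are hypothetical. A real setup would key the baseline to the scenario version and ECU build it was recorded against.

```python
def find_regressions(current: dict[str, float], baseline: dict[str, float],
                     rel_tol: dict[str, float]) -> list[str]:
    """List metrics that are missing or have drifted beyond their relative tolerance."""
    problems = []
    for name, ref in baseline.items():
        if name not in current:
            problems.append(f"{name}: missing from current run")
            continue
        tol = rel_tol.get(name, 0.0)  # default: exact match required
        if abs(current[name] - ref) > tol * abs(ref):
            problems.append(f"{name}: baseline {ref}, now {current[name]} (tolerance {tol:.0%})")
    return problems

baseline = {"min_gap_m": 3.8, "peak_decel_mps2": 7.2, "p99_latency_ms": 9.5}
current = {"min_gap_m": 3.7, "peak_decel_mps2": 7.3, "p99_latency_ms": 11.0}
tolerances = {"min_gap_m": 0.05, "peak_decel_mps2": 0.05, "p99_latency_ms": 0.10}
for line in find_regressions(current, baseline, tolerances):
    print(line)
```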

Common ways teams misuse ADAS simulation and miss issues

Teams miss issues when they treat simulation as a showcase, not a disciplined test system. Shiny visuals can hide weak observability, non-repeatable runs, and untracked configuration drift. Simulation only helps when you can reproduce failures, attribute root cause, and prove that a fix holds across controlled variation.

One common failure mode is mixing validation goals with mismatched fidelity. High-level planning tests run on ideal sensors can pass while the embedded controller fails once timing jitter and interface quirks appear. Another is scenario sprawl, where teams accumulate thousands of cases without a coverage model, so gaps persist even as test counts rise.
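A coverage model makes scenario sprawl measurable. Split each parameter into bins, then count which bin combinations at least one scenario actually exercises. The axes and bin edges below are hypothetical. In the example, a thousand scenarios cover only two of six cells because they all cluster in the same region of the parameter space.

```python
from itertools import product

Bins = dict[str, list[tuple[float, float]]]

def coverage(scenarios: list[dict[str, float]], bins: Bins) -> tuple[float, list[tuple[int, ...]]]:
    """Return (covered fraction, uncovered cells) over the grid of bin combinations.

    Each axis is split into half-open [low, high) intervals. A scenario covers
    the cell its values fall into; values outside every bin are ignored.
    """
    def cell_of(scenario):
        idx = []
        for axis, intervals in bins.items():
            v = scenario[axis]
            hit = next((i for i, (lo, hi) in enumerate(intervals) if lo <= v < hi), None)
            if hit is None:
                return None
            idx.append(hit)
        return tuple(idx)

    covered = {c for c in map(cell_of, scenarios) if c is not None}
    all_cells = list(product(*(range(len(iv)) for iv in bins.values())))
    gaps = [c for c in all_cells if c not in covered]
    return len(covered) / len(all_cells), gaps

bins = {
    "speed_kph": [(0, 50), (50, 100), (100, 150)],
    "friction": [(0.0, 0.5), (0.5, 1.01)],  # upper edge above 1.0 so friction 1.0 is included
}
scenarios = [{"speed_kph": 80 + i % 10, "friction": 0.9} for i in range(1000)]
scenarios.append({"speed_kph": 30, "friction": 0.8})
fraction, gaps = coverage(scenarios, bins)
print(f"{len(scenarios)} scenarios, {fraction:.0%} of cells covered, gaps: {gaps}")
```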

The strongest teams keep a tight loop between requirements, scenario design, and evidence quality, then reserve limited on-road driving for confirmation and calibration. That execution style is what OPAL-RT aims to support in practice: deterministic real-time simulation that fits into a lab workflow, produces repeatable results, and keeps the focus on proving system behaviour rather than collecting kilometres.
