Validating multiphase motor controllers before hardware deployment

Automotive

02 / 12 / 2026

Key Takeaways

- Set numeric control and protection targets first, then tie every test to a pass or fail result.

- Match the plant model, sensors, and timing to your motor and inverter so software results carry into closed-loop HIL.

- Use repeatable HIL sequences for transients and faults, then lock fixes with regression tests before first power-up.

That approach will catch control and protection failures while they’re still cheap to fix, and it will make bench bring-up calmer and faster. A 2002 NIST study estimated that inadequate software testing cost the U.S. economy $59.5 billion each year, largely from rework and downstream failures. Motor control teams feel the same pattern when validation starts too late and too close to first power-up.

Multiphase controllers raise the bar because you’re managing more switching states, more current sensors, more fault cases, and more ways for timing to go wrong. A good validation plan is not “test more”; it is “test the right things earlier, with measurable targets.” You’ll get the most value when software-in-the-loop (SIL) and hardware-in-the-loop (HIL) testing share the same goals, the same pass-fail metrics, and the same path to debugging.

“Validate a multiphase motor controller in closed loop before any high-power hardware runs.”

Define validation goals for multiphase motor controller performance

Validation goals must translate system intent into numbers your tests can accept or reject. You’re proving torque control quality, current limits, and fault handling across speed and load. You’re also proving stability when phase coupling, deadtime, and sampling delays interact. Clear goals stop late-stage debates and keep multiphase motor controller validation focused.

Start with what will break hardware or customer trust first, then add refinement targets. Protection goals usually come first: peak phase current, dc bus overvoltage, inverter temperature, and safe responses to sensor dropouts. Next come control goals: torque step response, speed regulation, current sharing between phases, and harmonic content in phase currents and torque. Add coverage targets that matter to your program, such as operation under low dc bus voltage, in field weakening, or in sensorless mode.
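One way to make goals testable is to store them as data that every test run is scored against. The sketch below is illustrative only: the `Target` class, the target names, and every numeric limit are hypothetical placeholders, not values from any specific program.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    name: str
    limit: float
    unit: str
    kind: str  # "max": measured value must not exceed limit; "min": must not fall below it

    def passes(self, measured: float) -> bool:
        if self.kind == "max":
            return measured <= self.limit
        return measured >= self.limit


# Hypothetical limits for illustration; real values come from your requirements.
TARGETS = [
    Target("peak_phase_current", 450.0, "A", "max"),
    Target("dc_bus_voltage", 480.0, "V", "max"),
    Target("torque_step_settling_time", 0.020, "s", "max"),
    Target("phase_current_imbalance", 5.0, "%", "max"),
]


def evaluate(measurements: dict) -> dict:
    """Return pass/fail per target; a missing measurement counts as a failure."""
    results = {}
    for target in TARGETS:
        value = measurements.get(target.name)
        results[target.name] = value is not None and target.passes(value)
    return results
```

Treating a missing measurement as a failure is a deliberate choice here: a test that never recorded a protection quantity should not be able to pass the gate silently.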

Goals also need to reflect production scale, because small failure rates become large field counts. Electric car sales exceeded 14 million in 2023, which pushes more traction systems into higher-volume production and tighter quality gates. That scale rewards teams that treat validation targets as contractual requirements, not best-effort tuning notes.

Build a plant model that matches your motor and inverter

A plant model is your stand-in for the power stage and machine, and it must be accurate enough that controller behaviour carries over to hardware. That means the motor electrical model, mechanical load, inverter nonidealities, and sensors must match what your code will see. A good model supports both nominal performance checks and fault coverage without hiding critical dynamics.

Motor fidelity matters most around the bandwidth where your controller acts. Include phase-to-phase coupling, saliency if it affects your estimator, and saturation if current limits and field weakening are part of your operating region. Inverter fidelity matters when switching behaviour shapes current control, so include deadtime, device voltage drops, PWM update timing, and any filter networks. Sensor models should include quantization, offsets, noise shaping, and sampling phase relative to PWM.
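As a rough illustration of the sensor effects listed above, the following sketch models one bipolar current-sensor channel with offset, Gaussian noise, clipping, and quantization. The function name, the 600 A full scale, and the 12-bit resolution are assumptions made for the example; noise shaping and sampling phase relative to PWM are not modelled here.

```python
import random


def adc_current_sample(true_amps, full_scale_amps=600.0, bits=12,
                       offset_amps=0.0, noise_rms_amps=0.0, rng=None):
    """Return the current the controller would read for a given true current.

    Assumed model (hypothetical): offset and noise are added first, then the
    signal is clipped to the input range and quantized to a signed ADC code.
    """
    rng = rng or random
    value = true_amps + offset_amps
    if noise_rms_amps > 0.0:
        value += rng.gauss(0.0, noise_rms_amps)
    # Clip to the analogue input range of the channel.
    value = max(-full_scale_amps, min(full_scale_amps, value))
    # Quantize: one LSB spans the bipolar range divided by 2**bits codes.
    lsb = 2.0 * full_scale_amps / (2 ** bits)
    code = round(value / lsb)
    # Keep the code inside the signed range of the converter.
    code = max(-(2 ** (bits - 1)), min(2 ** (bits - 1) - 1, code))
    return code * lsb
```

Running the controller against readings produced this way, rather than against ideal currents, shows early whether limits and estimators tolerate the resolution and offset you expect on hardware.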

Model confidence comes from traceable parameter sources and repeatable calibration, not from a visually “good” waveform. Tie parameters to test reports, datasheets, or identification results, then lock versions so results are comparable across sprints. When your control team and test team share the same plant assumptions, failures become actionable rather than arguable.

Choose SIL or HIL tests based on risk and timing

The main difference between SIL and HIL is what runs in real time and what risks you can expose safely. SIL runs controller code against a simulated plant on a desktop, so iteration is fast and coverage can be broad. HIL runs the controller on its target compute with a real-time simulator, so timing, I/O behaviour, and integration risks show up early. The best choice is the one that matches your next failure mode.

| Checkpoint you can prove without high-power hardware | SIL fit | HIL fit |
| --- | --- | --- |
| Control stability across speed and torque operating ranges | Strong for rapid sweeps and tuning iteration | Strong when timing and quantization affect stability margins |
| Protection logic behaviour and fault-state sequencing | Strong for exhaustive fault trees and corner cases | Strong when faults depend on I/O timing and interrupt latency |
| PWM and sampling synchronization effects on current control | Moderate when models approximate timing | Strong because real execution jitter and update rates appear |
| Interface risks across ADCs, encoders, resolvers, and comms | Weak because peripherals are typically mocked | Strong because the controller sees realistic signals and protocols |
| Regression testing at scale across software revisions | Strong due to speed and automation | Strong for high-risk scenarios that must reflect target hardware timing |

Sequencing matters. SIL should prove algorithm intent and logic completeness before you spend time on wiring, pin maps, and real-time step sizes. HIL then becomes a confirmation stage for timing, interfaces, and controller resilience, rather than a first pass at basic correctness. If you reverse that order, you’ll waste lab time debugging issues a desktop test could have found in minutes.
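To give a sense of how small a first SIL check can be, here is a minimal sketch of a discrete PI current regulator closed around a single-phase R-L plant. It is a deliberately reduced stand-in for a multiphase model: the resistance, inductance, bandwidth, and voltage limit are hypothetical, there is no back-EMF or phase coupling, and the integrator has no anti-windup, which is acceptable only because this step does not saturate the voltage.

```python
import math


def run_sil_current_step(i_ref=100.0, t_end=5e-3):
    """Simulate a current step and return a list of (time_s, current_A) samples."""
    R, L = 0.05, 200e-6          # hypothetical phase resistance (ohm) and inductance (H)
    Ts = 1e-4                    # controller sample time (10 kHz loop)
    substeps = 10                # plant integration steps per control period
    v_limit = 200.0              # assumed available phase voltage (V)
    wc = 2.0 * math.pi * 500.0   # target current-loop bandwidth (rad/s)
    kp, ki = L * wc, R * wc      # pole-zero cancellation tuning

    i, integ = 0.0, 0.0
    trace = []
    for k in range(round(t_end / Ts)):
        # Controller: sample the current once per period, then hold the voltage.
        err = i_ref - i
        integ += ki * err * Ts
        v = max(-v_limit, min(v_limit, kp * err + integ))
        # Plant: forward-Euler integration of L di/dt = v - R i.
        dt = Ts / substeps
        for _ in range(substeps):
            i += (v - R * i) / L * dt
        trace.append(((k + 1) * Ts, i))
    return trace
```

A loop like this runs in milliseconds on a desktop, so sweeps over gains and operating points are cheap before any real-time step size or pin map has been chosen.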

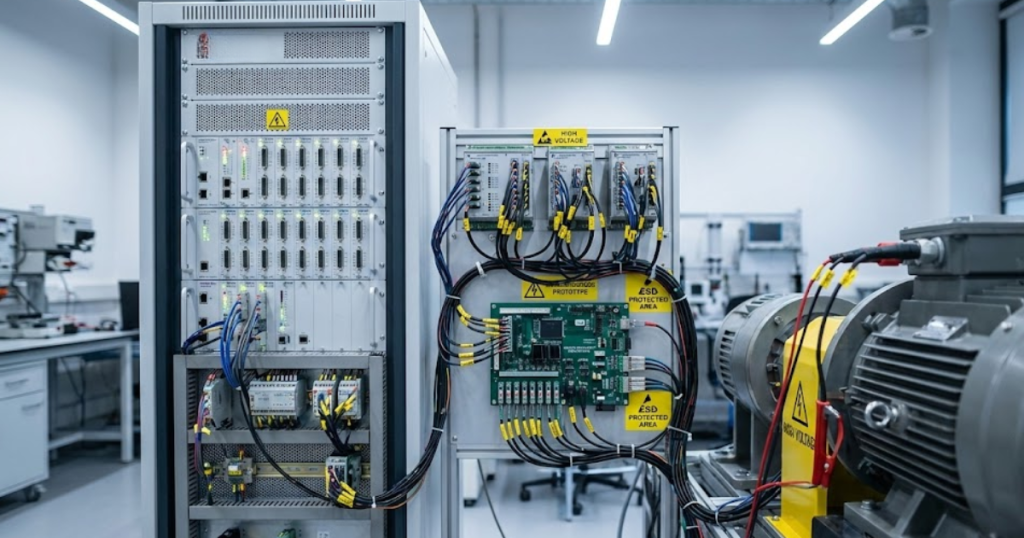

Set up HIL interfaces and timing for stable closed loop

HIL testing only works when the controller and simulator behave like a coherent closed loop at the correct rates. Your first task is to align time steps, I/O latencies, and signal conditioning so the controller sees realistic phase currents, voltages, and position signals. Stable closed loop on HIL is a timing discipline problem as much as a modelling problem. Getting it right makes later faults and transients trustworthy.

Start with the control loop cadence and work outward. Lock PWM frequency, ADC sampling instants, and interrupt priorities, then choose a real-time simulation step size that keeps numerical delay small relative to those events. Match scaling and units end to end, then verify sign conventions for dq transforms and phase ordering, because multiphase systems multiply opportunities for swapped channels. Add interface tests that prove saturation behaviour, clipping, and fault flags without involving complex torque commands.
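A swapped-channel check can be automated before any torque command is issued. The sketch below assumes a symmetric winding layout with phases spaced 2π/N apart (an asymmetric dual three-phase machine would need a different angle list) and a hypothetical `channel_map` that describes which simulated phase arrives on each controller input. It drives a balanced set of signals through the mapping and checks that the resulting space vector has the expected amplitude and angle.

```python
import cmath
import math


def space_vector(samples, phase_angles):
    """Amplitude-invariant projection of N phase quantities onto the fundamental plane."""
    n = len(samples)
    return (2.0 / n) * sum(x * cmath.exp(1j * a) for x, a in zip(samples, phase_angles))


def check_phase_order(channel_map, n_phases=6, amplitude=1.0, tol=0.02):
    """Return True if the wiring described by channel_map preserves phase order.

    channel_map[k] is the index of the simulated phase seen on controller input k.
    """
    angles = [2.0 * math.pi * k / n_phases for k in range(n_phases)]
    for step in range(36):
        theta = 2.0 * math.pi * step / 36
        simulated = [amplitude * math.cos(theta - a) for a in angles]
        received = [simulated[channel_map[k]] for k in range(n_phases)]
        sv = space_vector(received, angles)
        magnitude_ok = abs(abs(sv) - amplitude) <= tol * amplitude
        # With correct wiring the vector angle equals theta, so this residual is zero.
        angle_ok = abs(cmath.phase(sv * cmath.exp(-1j * theta))) <= tol
        if not (magnitude_ok and angle_ok):
            return False
    return True
```

For example, `check_phase_order([0, 1, 2, 3, 4, 5])` passes, while a map with two channels exchanged or with every channel rotated by one position fails, the first on magnitude and the second on angle.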

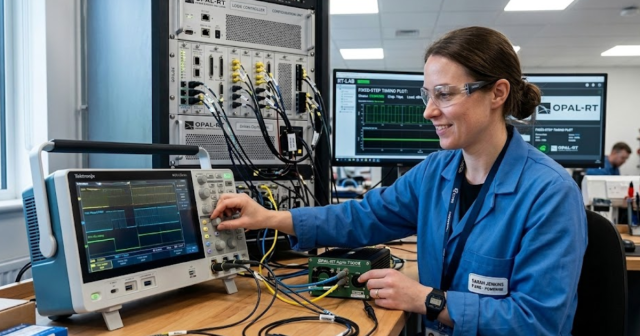

Tool choice affects how cleanly you can execute that setup, especially when you need high channel counts and deterministic timing. Many teams use OPAL-RT real-time simulators for multiphase power electronics because they can run detailed plant models while providing low-latency I/O paths for controller hardware. Focus on repeatable wiring, traceable configuration control, and automated sanity checks, since those prevent “mystery” regressions later.

Run test sequences that cover transient limits and faults

Test sequences must stress the controller in the same order risk emerges during hardware bring-up. Start with nominal operating points, then push transients that hit current and voltage limits, then inject faults that force protective actions. Each sequence should end with an explicit pass-fail result tied to your goals, not a subjective waveform review. HIL testing is most valuable when it forces your controller to choose safe behaviour under pressure.

- Speed and torque steps that verify loop stability and tracking error

- Current limit events that prove clamp behaviour and recovery dynamics

- DC bus disturbances that stress modulation and overvoltage handling

- Sensor faults that validate estimator fallback and safe-state logic

- Phase and switch faults that verify isolation and torque derating rules

One concrete example is injecting an open-phase fault on a six-phase traction controller while commanding a torque step, then verifying that current redistributes within limits and torque derates as specified within a fixed time budget. That single scenario forces coordination across current regulators, fault detection, state machines, and limiters. It also exposes timing bugs that hide during steady-state checks, especially if your fault logic depends on sample alignment.
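The open-phase scenario above could be scored with a check along these lines. This is a sketch under assumptions: the trace format, the function name, and the idea of passing limits as arguments are all hypothetical, and a real test would also assert on fault flags and controller states.

```python
def check_open_phase_response(trace, t_fault, budget_s, i_limit, torque_derated_max):
    """Score an open-phase test.

    trace: list of (time_s, phase_currents_A, torque_Nm) samples.
    Returns (passed, reasons), where reasons lists every violated criterion.
    """
    reasons = []

    # Criterion 1: no phase current exceeds its limit from the fault onward.
    for t, currents, _ in trace:
        if t >= t_fault and any(abs(i) > i_limit for i in currents):
            reasons.append(f"phase current above {i_limit} A at t={t:.4f} s")
            break

    # Criterion 2: once the time budget has elapsed, torque stays derated.
    late = [tq for t, _, tq in trace if t >= t_fault + budget_s]
    if not late:
        reasons.append("trace ends before the time budget expires")
    elif any(abs(tq) > torque_derated_max for tq in late):
        reasons.append("torque not derated within budget")

    return (not reasons, reasons)
```

Returning the list of reasons alongside the verdict keeps the result explicit while still pointing the debugger at the criterion that failed.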


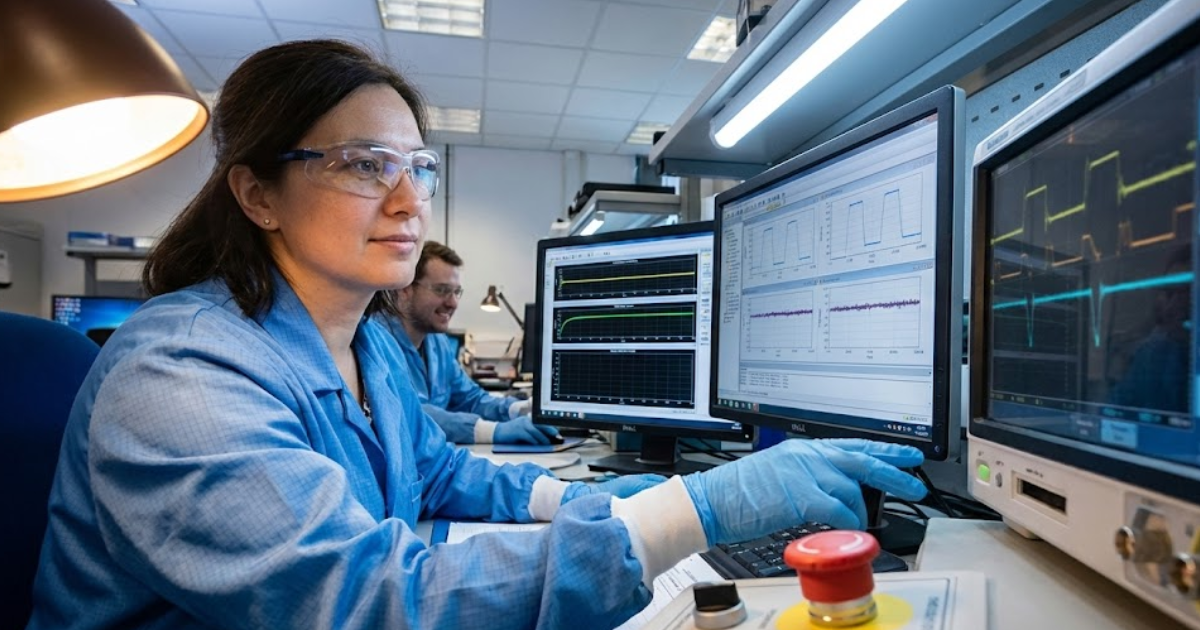

Use pass-fail metrics and debug loops before hardware bring-up

Pass-fail metrics turn controller validation from a waveform review into a repeatable gate. You’ll need thresholds, time windows, and tolerances for each goal, plus a standard method to capture traces and classify failures. Debug loops then become fast because you can reproduce the same test, isolate the signal that violated the metric, and verify the fix without reinterpreting intent. That discipline is what makes pre-hardware validation pay off.

Define metrics that match how failures actually show up. Use time-to-limit, overshoot, and settling windows for torque and speed, and use absolute bounds with duration for currents and voltages. Track fault coverage with explicit state transition assertions, not just “fault happened,” so you know the controller took the correct path. Add regression triggers so a passing build stays passing after unrelated code edits.
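The metric types named above can be expressed as small functions over recorded traces. The following is a minimal sketch with hypothetical function names; it assumes a positive step for the overshoot calculation and measures violation duration between samples, so a single-sample excursion counts as zero duration.

```python
def overshoot_pct(values, target):
    """Peak excursion beyond the target as a percentage of it (positive step assumed)."""
    return max(0.0, (max(values) - target) / abs(target) * 100.0)


def settling_time(times, values, target, band_pct):
    """Time from which the signal stays inside the band for the rest of the trace, else None."""
    band = abs(target) * band_pct / 100.0
    settled_at = None
    for t, v in zip(times, values):
        if abs(v - target) <= band:
            if settled_at is None:
                settled_at = t
        else:
            settled_at = None  # left the band, so any earlier entry does not count
    return settled_at


def longest_violation(times, values, bound):
    """Longest continuous duration for which |value| exceeds the bound."""
    longest, start = 0.0, None
    for t, v in zip(times, values):
        if abs(v) > bound:
            if start is None:
                start = t
            longest = max(longest, t - start)
        else:
            start = None
    return longest


def state_path(observed_states):
    """Collapse a sampled state log into the sequence of distinct states visited."""
    path = []
    for state in observed_states:
        if not path or path[-1] != state:
            path.append(state)
    return path
```

A state transition assertion then becomes a plain comparison, for example `state_path(log) == ["RUN", "FAULT_DETECTED", "DERATE"]`, where the state names are placeholders for whatever your state machine defines.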

Hardware bring-up still matters, but it should feel like confirmation, not discovery. Teams that adopt this style of multiphase motor controller validation arrive at first power-up with known limits, known fault responses, and a known trace vocabulary, which reduces debate under pressure. OPAL-RT fits naturally into that workflow when you treat HIL as a repeatable gate and keep the model, tests, and metrics under the same change control as the controller code.
