Fault tolerant control workflows for multiphase electric drives

Power Electronics

02 / 24 / 2026

Key Takeaways

- Fault-tolerant control in multiphase drives works only when detection, isolation, reconfiguration, and derating are designed as one workflow with testable acceptance criteria.

- Redundancy is useful only after confident isolation, since false trips and unsafe resets often cause more downtime and stress than the original fault.

- Hardware-in-the-loop validation turns protection timing and degraded-mode limits into repeatable, auditable behavior you can trust during failures.

Fault-tolerant control for multiphase drives works only when you treat it as an end-to-end workflow.

Multiphase motor drives give you extra degrees of freedom, but redundancy does not manage itself. Control software must detect abnormal behaviour fast, isolate the right component without guesswork, and reconfigure current commands while staying inside thermal and voltage limits. Electric motors and motor-driven systems account for about 46% of global electricity use, so a drive that degrades gracefully protects uptime, energy use, and hardware.

The most reliable teams treat faults as a normal operating mode with clear rules, not as a rare exception handled with last-minute patches.

That stance shapes everything that follows, from sensor selection to how you gate torque commands, log events, and validate protection behaviour. When those pieces are built as one workflow, multiphase hardware becomes a safety margin you can actually use.

Fault tolerant control goals for multiphase motor drive systems

Fault tolerant control aims to keep a multiphase drive stable and predictable after a fault, while limiting damage and keeping people safe. You want controlled torque, bounded currents, and known thermal behaviour. You also want repeatable transitions between normal and degraded modes. A workable design sets measurable acceptance criteria before coding starts.

Start with goals that can be tested, not slogans. Torque ripple, peak phase current, DC bus overvoltage, and inverter junction temperature all translate into pass/fail checks. A second set of goals addresses system behaviour, such as how long torque can be sustained after a phase loss and how quickly the drive must reduce current after a short circuit is suspected. Clear targets prevent teams from tuning protections until “it feels right.”
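One way to keep these goals testable is to write them down as data before any control code exists. The sketch below is illustrative only: the field names, the dictionary keys, and every numeric limit are placeholders chosen for the example, not values from a real drive.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceLimits:
    # All numbers are hypothetical placeholders; replace with your own limits.
    max_torque_ripple_pct: float = 15.0
    max_phase_current_a: float = 120.0
    max_dc_bus_v: float = 450.0
    max_junction_temp_c: float = 150.0


def check_run(measured: dict, limits: AcceptanceLimits) -> dict:
    """Return one pass/fail flag per criterion for a single recorded test run."""
    return {
        "torque_ripple": measured["torque_ripple_pct"] <= limits.max_torque_ripple_pct,
        "phase_current": measured["peak_phase_current_a"] <= limits.max_phase_current_a,
        "dc_bus": measured["peak_dc_bus_v"] <= limits.max_dc_bus_v,
        "junction_temp": measured["peak_junction_temp_c"] <= limits.max_junction_temp_c,
    }


# Example run record (made-up measurements from a phase-loss test).
run = {
    "torque_ripple_pct": 12.0,
    "peak_phase_current_a": 131.0,
    "peak_dc_bus_v": 420.0,
    "peak_junction_temp_c": 118.0,
}
results = check_run(run, AcceptanceLimits())
run_passed = all(results.values())
```

Keeping the limits in one frozen object means the same numbers can drive simulation checks, bench tests, and review discussions.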

Multiphase drives add options, but they also add ways to get into trouble. Extra phases can keep torque up, yet they can also hide faults if detection logic is weak. A strong workflow treats redundancy as conditional, meaning you only use it after isolation is confident and the controller has shifted to a mode with known limits.

Fault classes in multiphase inverters and machine windings

Faults in multiphase electric drives fall into a small set of classes that determine the right control response. Power stage faults include switch open and switch short behaviours. Machine faults include phase open circuits, phase-to-phase shorts, and insulation breakdown patterns. Sensor and estimator faults often look like plant faults, so they require special handling.

Classification matters because protection actions are not interchangeable. A suspected short circuit requires immediate current limiting and gate blocking logic, while an open phase can often be managed with control reallocation. Sensor faults sit in the middle, since a single bad current sensor can trigger incorrect isolation and unnecessary derating. Good designs build a map that ties each class to detection signals, confirmation rules, and the allowed control modes afterward.
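Such a map can be as simple as a lookup table. The following is a minimal sketch, assuming a small set of fault classes; the signal names, confirmation rules, and mode names are hypothetical labels for illustration and would need to match your own drive.

```python
from enum import Enum, auto


class FaultClass(Enum):
    SWITCH_SHORT = auto()
    SWITCH_OPEN = auto()
    PHASE_OPEN = auto()
    PHASE_SHORT = auto()
    CURRENT_SENSOR = auto()


# Hypothetical mapping: each class lists its detection signals, the rule
# that confirms it, and the control modes allowed once it is confirmed.
FAULT_MAP = {
    FaultClass.SWITCH_SHORT: {
        "detect": ["desat_feedback", "phase_current_magnitude"],
        "confirm": "act immediately, confirm afterwards",
        "allowed_modes": ["gate_block"],
    },
    FaultClass.PHASE_SHORT: {
        "detect": ["phase_current_magnitude", "dc_link_dv_dt"],
        "confirm": "act immediately, confirm afterwards",
        "allowed_modes": ["gate_block"],
    },
    FaultClass.SWITCH_OPEN: {
        "detect": ["phase_current_asymmetry", "gate_command_mismatch"],
        "confirm": "two signal families agree",
        "allowed_modes": ["degraded_torque", "gate_block"],
    },
    FaultClass.PHASE_OPEN: {
        "detect": ["phase_current_asymmetry", "negative_sequence"],
        "confirm": "two signal families agree",
        "allowed_modes": ["degraded_torque", "gate_block"],
    },
    FaultClass.CURRENT_SENSOR: {
        "detect": ["model_residual", "phase_current_sum"],
        "confirm": "residual persists while other signals stay healthy",
        "allowed_modes": ["estimator_fallback", "degraded_torque"],
    },
}


def allowed_modes(fault: FaultClass) -> list:
    """Look up which control modes are permitted after a confirmed fault."""
    return FAULT_MAP[fault]["allowed_modes"]
```

A table like this is easy to review against the fault classes above and easy to check for gaps, since every class must have an entry.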

Prioritization should follow risk, not convenience. Electrical faults that can escalate within microseconds receive the fastest response paths, often implemented in firmware or dedicated logic. Faults that develop over longer times, such as winding degradation, still matter because they can bias estimators and push thermal margins. When you treat these as separate classes, you avoid a one-size shutdown strategy that wastes the benefits of multiphase drives.

Signal choices and thresholds for fast fault detection

Fast detection relies on signals that react early, stay observable in degraded modes, and remain stable across operating points. Phase currents, DC link voltage, and switching node behaviour typically carry the earliest signatures. Model residuals can add sensitivity when sensors remain trustworthy. A good threshold strategy balances speed with false trip risk.

Detection works best when you limit your first layer to signals you can trust under noise and transients. These five signal types usually provide strong coverage without forcing heavy computation:

- Phase current magnitude and asymmetry checks tied to the commanded current

- Negative sequence or harmonic indicators derived from measured currents

- DC link voltage rate of change during switching events

- Gate command and desaturation-style feedback from the power stage

- Residual errors between measured and estimated electrical states

Thresholds should adapt to operating state, because start-up and regeneration look different than steady torque. Use multi-stage logic, where a fast limit reduces current first, then a slower confirmation step decides isolation and mode switching. Filters help, but long windows hide fast faults, so keep time constants tied to electrical frequency and switching rate. The goal is a clean alarm that you can trust when it triggers.
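The multi-stage idea can be sketched in a few lines. This is a simplified illustration, not a production protection path: the class name, the thresholds, and the rule of counting consecutive samples are assumptions made for the example, and a real fast stage would normally live in firmware or dedicated logic.

```python
def confirm_window_samples(electrical_hz, sample_hz, fraction=0.25):
    """Size the confirmation window as a fraction of one electrical period."""
    return max(1, round(fraction * sample_hz / electrical_hz))


class TwoStageDetector:
    """Fast stage: one sample above a high limit requests current limiting.
    Slow stage: N consecutive samples above a lower limit confirm a fault."""

    def __init__(self, fast_limit_a, confirm_limit_a, confirm_samples):
        self.fast_limit_a = fast_limit_a
        self.confirm_limit_a = confirm_limit_a
        self.confirm_samples = confirm_samples
        self._count = 0

    def update(self, phase_current_a):
        magnitude = abs(phase_current_a)
        limit_now = magnitude > self.fast_limit_a
        # A single sample below the confirm limit restarts the count,
        # so short transients do not accumulate into a confirmation.
        if magnitude > self.confirm_limit_a:
            self._count += 1
        else:
            self._count = 0
        confirmed = self._count >= self.confirm_samples
        return limit_now, confirmed


# Hypothetical thresholds in amperes and a made-up current trace.
detector = TwoStageDetector(fast_limit_a=120.0, confirm_limit_a=90.0, confirm_samples=3)
outputs = [detector.update(i) for i in [50, 130, 60, 95, 96, 97, 98]]
window = confirm_window_samples(electrical_hz=100.0, sample_hz=20000.0)
```

In this trace the single spike triggers the fast limit without confirming a fault, while the sustained overcurrent that follows is confirmed only after three consecutive samples.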

Fault isolation methods that avoid false trips and resets

Fault isolation identifies what failed and where, so the controller can pick the correct degraded mode instead of guessing. Robust isolation uses redundant evidence, not a single threshold crossing. It also avoids aggressive auto-reset behaviour that can cause repeated stress. Isolation logic should produce a clear, logged decision you can replay in testing.

Design isolation as a sequence of checks that narrow the possibilities, then confirm with a second signal family. Separate “suspected fault” from “confirmed fault,” and tie each state to allowed actions. This prevents a brief measurement glitch from forcing a full mode switch. It also helps operators and test engineers understand why the drive reduced torque when nothing visibly broke.
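A small state machine makes the split between suspected and confirmed explicit. The sketch below assumes two boolean flags from independent signal families (here called `current_flag` and `voltage_flag`) and a quiet period before a suspected fault clears; the names, the quiet-period rule, and the action lists are illustrative choices, not a prescribed design.

```python
from enum import Enum


class FaultState(Enum):
    HEALTHY = "healthy"
    SUSPECTED = "suspected"
    CONFIRMED = "confirmed"


# Hypothetical actions permitted in each state.
ALLOWED_ACTIONS = {
    FaultState.HEALTHY: ["full_torque"],
    FaultState.SUSPECTED: ["reduce_current", "capture_waveforms"],
    FaultState.CONFIRMED: ["switch_to_degraded_mode", "gate_block"],
}


class IsolationLatch:
    def __init__(self, cooldown_steps):
        self.state = FaultState.HEALTHY
        self.cooldown_steps = cooldown_steps
        self._quiet = 0
        self.log = []  # (time, previous state, new state)

    def step(self, t, current_flag, voltage_flag):
        previous = self.state
        if self.state is FaultState.CONFIRMED:
            pass  # stays latched; clearing needs an explicit, separate reset
        elif current_flag and voltage_flag:
            self.state = FaultState.CONFIRMED
        elif current_flag or voltage_flag:
            self.state = FaultState.SUSPECTED
            self._quiet = 0
        elif self.state is FaultState.SUSPECTED:
            # Only clear a suspicion after enough consecutive quiet steps.
            self._quiet += 1
            if self._quiet >= self.cooldown_steps:
                self.state = FaultState.HEALTHY
        if self.state is not previous:
            self.log.append((t, previous.value, self.state.value))
        return self.state


latch = IsolationLatch(cooldown_steps=3)
flags = [(False, False), (True, False), (False, False), (False, False),
         (False, False), (True, False), (True, True), (False, False)]
states = [latch.step(t, c, v) for t, (c, v) in enumerate(flags)]
```

Because every transition is appended to `log`, the same flag sequence can be replayed in a test and compared against the recorded decisions.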


| Workflow checkpoint | What you must verify | What goes wrong when it is skipped |
| --- | --- | --- |
| Suspected fault latch | A fast flag reduces stress while data is collected | Peak current can rise before protection reacts |
| Cross-signal confirmation | Two independent measurements support the same fault class | False trips spike and operators lose trust in protections |
| Location decision | The logic distinguishes inverter leg from machine phase behavior | Reconfiguration uses the wrong constraints and destabilizes control |
| Reset policy | Reset requires stable signals and a cooldown condition | Fault chatter causes repeated thermal and electrical stress |
| Event logging | Key waveforms and states are saved with timestamps | Root cause analysis turns into guesswork |

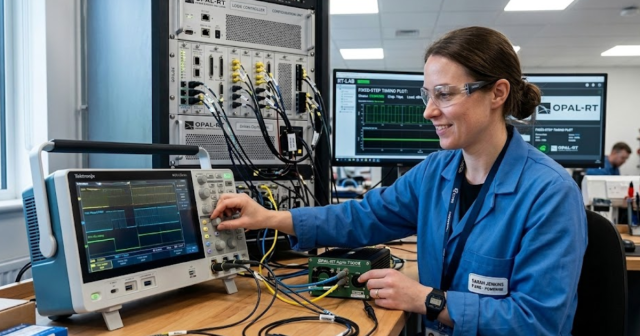

Isolation rules are easier to validate when you can replay edge cases at will. A real-time digital simulator from OPAL-RT can run fault injections against your exact control code and protection timing, which helps you tune confirmation windows without risking hardware. That kind of repeatability matters more than perfect plant fidelity during early isolation design. The practical goal is simple: the drive should do the same thing every time the same fault occurs.

Control reconfiguration to keep torque under phase loss faults

Control reconfiguration changes current references, modulation rules, and constraints after isolation so the drive stays stable with fewer effective phases. The controller must preserve torque production while limiting copper losses and inverter stress. A clean approach switches to a defined degraded controller, not a patched set of gains. Multiphase systems can redistribute current, but only within known limits.

A six-phase permanent magnet synchronous motor can illustrate the difference between “fault tolerant” and “fault stable.” One phase open circuit can be isolated, then the torque-producing current reference can be projected into the remaining healthy subspace so the average torque remains controlled. Current limits must tighten because remaining phases carry higher RMS current, and the modulation strategy must avoid pushing one leg toward saturation. That workflow keeps the machine controllable instead of simply keeping it spinning.
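The projection step can be illustrated with a small least-squares calculation. This sketch makes several assumptions that are not stated in the text: a symmetrical six-phase winding with 60° displacement, a single isolated neutral (so healthy-phase currents must sum to zero), and amplitude-invariant Clarke scaling. It only matches the α-β current reference with minimum sum of squared phase currents, and ignores the non-torque-producing subspace and back-EMF harmonics. All function names are hypothetical.

```python
import math


def solve_linear(a, b):
    """Solve a small dense system a x = b by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def healthy_phase_refs(i_alpha, i_beta, open_phase, n_phases=6):
    """Minimum-norm phase currents that reproduce (i_alpha, i_beta)
    using only the healthy phases, with zero current sum."""
    angles = [2 * math.pi * k / n_phases for k in range(n_phases)]
    healthy = [k for k in range(n_phases) if k != open_phase]
    scale = 2.0 / n_phases
    # Constraint rows: alpha component, beta component, neutral (sum = 0).
    rows = [
        [scale * math.cos(angles[k]) for k in healthy],
        [scale * math.sin(angles[k]) for k in healthy],
        [1.0 for _ in healthy],
    ]
    target = [i_alpha, i_beta, 0.0]
    gram = [[sum(x * y for x, y in zip(r1, r2)) for r2 in rows] for r1 in rows]
    lam = solve_linear(gram, target)
    refs = [0.0] * n_phases  # the open phase keeps a zero reference
    for j, k in enumerate(healthy):
        refs[k] = sum(lam[c] * rows[c][j] for c in range(len(rows)))
    return refs


# Example: 10 A along the alpha axis with phase 0 open.
refs = healthy_phase_refs(i_alpha=10.0, i_beta=0.0, open_phase=0)
peak_ref = max(abs(x) for x in refs)
```

For this reference, `peak_ref` comes out above the 10 A pre-fault amplitude, which is the reason the current limits have to tighten in degraded mode.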

Reconfiguration has tradeoffs that you should accept upfront. Torque ripple typically rises, which can excite mechanical resonances and raise acoustic noise. Efficiency drops as copper loss climbs, which tightens thermal margins and shortens allowable duration at high torque. A good design exposes these limits to higher-level control, so the system reduces torque on purpose rather than after temperatures spike.

Derating rules and limits for safe degraded operation

Derating defines how much torque, speed, and duty cycle you will allow after a fault so the drive remains safe and stable. These limits should be deterministic and tied to measurable quantities such as winding temperature, inverter temperature, and DC bus conditions. Derating also needs a clear exit condition, since running forever in degraded mode can hide a developing failure. The goal is controlled capability, not maximum capability.

Derating should be built from constraints that already exist in your design. Thermal models and temperature sensors set continuous current limits, while voltage headroom and modulation limits set high-speed capability. Motor systems consume about 70% of electricity used in U.S. manufacturing, so a derating policy that avoids unnecessary losses can reduce operating cost even during faults. The key is to make derating a rule set that everyone can predict and test.

Operators and system software need simple, explicit outputs from the derating logic. Provide an allowed torque limit, an allowed speed limit, and a maximum time at that operating point if thermal headroom is shrinking. Tie reset and recovery to verified stability, not a timer alone, so you do not reapply full torque into a still-faulted leg. A disciplined derating strategy turns degraded operation into a managed state instead of an emergency.
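The three outputs named above can come from a small deterministic function. The sketch below is a deliberately crude illustration: the linear thermal derate, the scaling of torque by healthy-phase count, the speed limit tied to DC bus ratio, the constant heating rate, and every default number are assumptions made for the example and would be replaced by your own thermal and voltage models.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeratingOutput:
    torque_limit_nm: float
    speed_limit_rpm: float
    max_time_s: float


def derate(winding_temp_c, healthy_phases, dc_bus_v,
           total_phases=6, rated_torque_nm=200.0, rated_speed_rpm=6000.0,
           nominal_dc_bus_v=400.0, temp_start_c=120.0, temp_max_c=160.0,
           heat_rate_c_per_s=0.5):
    """Map measured quantities to explicit limits (all defaults hypothetical)."""
    # Fewer healthy phases means less torque for the same per-phase current.
    phase_factor = healthy_phases / total_phases
    # Linear thermal derate between the start and maximum temperatures.
    if winding_temp_c <= temp_start_c:
        thermal_factor = 1.0
    elif winding_temp_c >= temp_max_c:
        thermal_factor = 0.0
    else:
        thermal_factor = (temp_max_c - winding_temp_c) / (temp_max_c - temp_start_c)
    # Voltage headroom bounds high-speed capability.
    voltage_factor = min(1.0, dc_bus_v / nominal_dc_bus_v)
    # Time allowed before the maximum temperature at an assumed heating rate.
    headroom_c = max(0.0, temp_max_c - winding_temp_c)
    return DeratingOutput(
        torque_limit_nm=rated_torque_nm * phase_factor * thermal_factor,
        speed_limit_rpm=rated_speed_rpm * voltage_factor,
        max_time_s=headroom_c / heat_rate_c_per_s,
    )


cool = derate(winding_temp_c=100.0, healthy_phases=5, dc_bus_v=400.0)
warm = derate(winding_temp_c=140.0, healthy_phases=5, dc_bus_v=360.0)
hot = derate(winding_temp_c=160.0, healthy_phases=5, dc_bus_v=400.0)
```

Because the function has no hidden state, the same inputs always give the same limits, which makes the derating policy straightforward to test and to explain to operators.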

Validation workflow using real-time models and hardware in the loop tests

Validation proves that detection, isolation, reconfiguration, and derating behave correctly across operating points and fault timing. Software-only tests will not capture the timing, quantization, and I/O delays that shape protection behaviour. Hardware-in-the-loop testing closes that gap using a real-time plant model linked to your control hardware. The result should be traceable evidence that protections act as designed.

Validation should start with fault injection plans that match your fault classes and acceptance criteria. Sweep speed, load, and DC bus conditions, then inject faults at multiple electrical angles to catch worst-case stress. Record the time from fault onset to current limiting, the correctness of isolation, and the stability of the degraded controller. Treat every unexpected reset or nuisance trip as a test failure, since operators will see it as lost reliability.
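A fault injection plan of this kind can be generated and scored with very little code. The sketch below is tool-agnostic and does not use any simulator API: the sweep values, the result fields, and the 10 µs limit are hypothetical, and the result record stands in for whatever your test rig actually reports.

```python
from itertools import product


def build_test_matrix(speeds_rpm, loads_pct, dc_bus_v, fault_classes, angles_deg):
    """Enumerate every combination of operating point, fault, and injection angle."""
    return [
        {"speed_rpm": s, "load_pct": l, "dc_bus_v": v, "fault": f, "angle_deg": a}
        for s, l, v, f, a in product(speeds_rpm, loads_pct, dc_bus_v,
                                     fault_classes, angles_deg)
    ]


def evaluate(result, max_limit_time_us):
    """Pass only if timing, isolation, and stability all meet the criteria.
    Any unexpected reset or nuisance trip counts as a failure."""
    return (
        result["time_to_limit_us"] <= max_limit_time_us
        and result["isolated_fault"] == result["injected_fault"]
        and result["degraded_stable"]
        and result["unexpected_resets"] == 0
        and result["nuisance_trips"] == 0
    )


matrix = build_test_matrix(
    speeds_rpm=[500, 3000, 6000],
    loads_pct=[25, 100],
    dc_bus_v=[350, 400],
    fault_classes=["phase_open", "switch_short"],
    angles_deg=range(0, 360, 60),
)

# Made-up result record for one case, shaped like the fields evaluate() expects.
example_result = {
    "time_to_limit_us": 8.0,
    "injected_fault": "phase_open",
    "isolated_fault": "phase_open",
    "degraded_stable": True,
    "unexpected_resets": 1,
    "nuisance_trips": 0,
}
passed = evaluate(example_result, max_limit_time_us=10.0)
```

The example record fails despite correct timing and isolation, because a single unexpected reset is treated as a test failure.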

Long-term reliability comes from repeating this workflow until protection behaviour becomes boring, because boring means predictable. OPAL-RT systems are often used to run these closed-loop tests in real time with repeatable fault injection and timing checks, which helps teams lock down corner cases before hardware exposure. That discipline is what turns multiphase redundancy into an operational capability you can count on. Fault tolerant control is not a single algorithm; it is a set of tested behaviours that you can defend under pressure.
