Real-time simulation for validating automotive ECUs in modern vehicle programs

03 / 20 / 2026

Key Takeaways

- Real-time simulation makes timing, load, and fault behaviour testable early, so automotive ECU validation issues surface before vehicle integration.

- Hardware-in-the-loop testing provides the most credible proof of ECU performance because it exercises production firmware, peripherals, I/O latency, and network stacks under controlled stress.

- Scalable automotive ECU testing relies on deterministic timing, automated regression, strict configuration control, and lab checks aligned with the Open Alliance Automotive Ethernet ECU test specification.

Modern vehicle programmes fail late when timing, network load, and fault handling get validated only after hardware is available and integrated. A single platform can include up to 100 electronic control units, which turns “works on my bench” into a risky assumption. When schedules tighten, teams often accept gaps in coverage that show up as unstable behaviour, intermittent faults, or rework across suppliers.

Real-time simulation software earns its place when you treat time as a requirement, not a detail. The practical claim is simple: ECU validation gets faster and more credible when your tests run with deterministic timing, closed-loop plant models, and repeatable fault injection before full vehicle integration. Hardware-in-the-loop testing becomes the control point where you can prove an automotive ECU will behave correctly under load, latency, and degraded conditions.

Validation pain points that slow modern automotive ECU programs

Automotive ECU validation slows down when the hardest failures depend on timing, concurrency, and integration details you can’t reproduce on a desktop. You’re validating not only logic, but also scheduling, network contention, I/O timing, and safety reactions. Late hardware availability and supplier black boxes push these checks to the end, when fixes cost the most.

Timing issues get masked when a test setup runs “about right” but not deterministically. Add multiple software tasks, interrupts, and network traffic, and a fault that should be caught in 20 ms shows up at 80 ms, or not at all. Code size also raises the probability of integration defects, since a modern vehicle can contain around 100 million lines of code. More code means more interactions that only appear under specific load patterns.
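One way to check whether a bench runs deterministically or only “about right” is to measure loop-period jitter from recorded tick timestamps. The sketch below is illustrative only: the function name, the integer-microsecond timestamps, and the 1 ms nominal period are assumptions, not part of any particular tool.

```python
def period_jitter_us(timestamps_us, nominal_period_us):
    """Summarise loop-period jitter from tick timestamps in integer microseconds."""
    if len(timestamps_us) < 2:
        raise ValueError("need at least two timestamps to measure a period")
    # Period between each pair of consecutive ticks.
    periods = [later - earlier
               for earlier, later in zip(timestamps_us, timestamps_us[1:])]
    # Signed deviation of each period from the nominal loop time.
    deviations = [p - nominal_period_us for p in periods]
    return {
        "max_abs_jitter_us": max(abs(d) for d in deviations),
        "overruns": sum(1 for d in deviations if d > 0),
    }


# Hypothetical capture of a nominal 1 ms loop where one period runs 5 us long.
report = period_jitter_us([0, 1000, 2005, 3000, 4000], nominal_period_us=1000)
print(report)
```

Recording a figure like this with every run makes it easier to tell a late fault reaction caused by the ECU from one caused by the bench.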

Validation also slows when test evidence can’t be reused. If your tests depend on manual steps, ad hoc wiring, or lab-only access to specialised gear, regression becomes optional, not routine. That gap forces teams into last-minute triage instead of steady verification, and it often shows up as late changes to calibration, network settings, or diagnostic behaviour.

How real-time simulation closes gaps in ECU test coverage

Real-time simulators improve automotive ECU testing when they execute plant models and I/O with fixed, repeatable time steps, so your ECU sees the same timing every run. That lets you test control stability, fault reactions, and network behaviour under stress without waiting for a full vehicle build. Coverage improves because scenarios become repeatable, automatable, and safe to run at scale.

Determinism is the key difference from offline simulation. You can sweep sensor conditions, inject electrical faults, and impose network delay while keeping the rest of the system stable. You also get traceable evidence, since the same stimuli can be replayed for regression after software updates or supplier changes.

| Test gap you need to close | What a real-time simulator gives you |
| --- | --- |
| Timing jitter hides deadline misses | Fixed step execution with known latency |
| Rare faults are hard to reproduce | Repeatable fault injection and replay |
| Limited hardware blocks early testing | Plant and network models replace missing rigs |
| Manual testing breaks regression | Automated scenarios with consistent I/O |
| Integration bugs appear under load | Controlled load and delay profiles |
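Fixed-step execution can be illustrated with a small sketch. It assumes a first-order plant integrated with forward Euler and indexes time by integer tick rather than floating-point seconds; the plant, the step size, and all names are hypothetical and chosen only to show that identical stimuli yield identical traces.

```python
def run_fixed_step(step_s, n_ticks, command, tau_s=0.05):
    """Simulate dy/dt = (u - y) / tau with forward Euler at a fixed step."""
    y = 0.0
    trace = []
    for tick in range(n_ticks):
        u = command(tick)               # stimulus depends only on the tick index
        y += step_s * (u - y) / tau_s   # one fixed-size integration step
        trace.append(y)
    return trace


def step_input(tick):
    """Unit step applied from tick 10 onward (10 ms at a 1 ms step)."""
    return 1.0 if tick >= 10 else 0.0


run_a = run_fixed_step(0.001, 200, step_input)
run_b = run_fixed_step(0.001, 200, step_input)
print(run_a == run_b)  # the two runs are compared sample by sample
```

Because nothing in the loop depends on wall-clock time, a replayed scenario produces the same samples, which is what makes regression comparisons meaningful. A real-time simulator additionally guarantees that each step completes within its wall-clock period, which this offline sketch does not attempt to show.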

Why hardware-in-the-loop testing is central for ECU development

Hardware-in-the-loop testing is used for automotive ECU development because it validates the actual ECU hardware and embedded software against a simulated vehicle plant in real time. That combination checks the full control loop, including ADC and PWM timing, I/O conditioning, communication stacks, and CPU scheduling. You get confidence that behaviour holds under the same timing constraints the vehicle will impose.

HIL matters most when failures are timing-sensitive or safety-relevant. Desktop tests can validate algorithms, but they can’t fully prove interrupt load, peripheral behaviour, startup sequences, sleep states, or watchdog interactions. HIL also supports negative testing, where you inject faults that would be unsafe or costly to force in a prototype vehicle, such as sensor dropouts or short-to-battery conditions on an input.
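A minimal sketch of repeatable fault injection on a simulated analogue input follows. It assumes a dropout reads as 0 V and a short-to-battery reads as a 12 V supply; both values, and every name in the block, are illustrative assumptions rather than the behaviour of any specific fault insertion unit.

```python
from dataclasses import dataclass
from enum import Enum


class FaultKind(Enum):
    DROPOUT = "dropout"                    # assumed to read as 0 V
    SHORT_TO_BATTERY = "short_to_battery"  # assumed to read as battery voltage


@dataclass(frozen=True)
class Fault:
    kind: FaultKind
    start_tick: int  # first tick on which the fault is active
    end_tick: int    # first tick on which the fault is cleared


def apply_fault(nominal_v, tick, fault, battery_v=12.0):
    """Return the voltage the ECU input sees on this tick."""
    if not (fault.start_tick <= tick < fault.end_tick):
        return nominal_v
    if fault.kind is FaultKind.DROPOUT:
        return 0.0
    return battery_v


dropout = Fault(FaultKind.DROPOUT, start_tick=10, end_tick=20)
seen = [apply_fault(2.5, tick, dropout) for tick in (9, 10, 19, 20)]
print(seen)
```

Defining the fault window in ticks keeps the injection tied to the simulation step, so the same fault lands at the same point in every replay.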

Tradeoffs still matter. Model fidelity, I/O latency, and sensor modelling limits can create false confidence if your assumptions are weak. HIL works best as a disciplined practice with clear acceptance criteria for timing, signal quality, and traceability from requirements to test evidence, not as a single “final gate” run at the end.

Building a repeatable ECU validation workflow from model to HIL

A repeatable workflow links requirements to test cases that run first in offline simulation, then in real time, then in HIL with the production ECU. Each step should reuse the same stimuli and pass-fail criteria, while adding the next layer of timing and hardware realism. The payoff is steady regression that catches integration faults early and prevents “lab-only” knowledge from becoming a bottleneck.

A concrete workflow can look like this: a chassis-domain automotive ECU is validated against a real-time vehicle dynamics model, then moved to HIL where the ECU runs its production firmware, reads simulated wheel-speed sensors through real I/O, and exchanges Automotive Ethernet traffic with a simulated gateway. The same test sequence then forces a network delay and a sensor dropout to confirm torque reduction and diagnostic logging happen within defined time limits. That single scenario becomes a reusable regression test after every software build.
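The pass-fail criteria for a scenario like this can be written once and reused at every stage. The sketch below evaluates a recorded trace against two deadlines; the sample fields, the torque threshold, and the deadline values are hypothetical placeholders, and the samples are assumed to be sorted by time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    t_ms: float
    torque_nm: float
    dtc_logged: bool


def first_time(samples, predicate, after_ms):
    """Time of the first sample at or after after_ms that satisfies predicate."""
    for s in samples:
        if s.t_ms >= after_ms and predicate(s):
            return s.t_ms
    return None


def evaluate_scenario(samples, fault_t_ms, torque_limit_nm,
                      torque_deadline_ms, dtc_deadline_ms):
    """Check torque reduction and diagnostic logging against their deadlines."""
    t_torque = first_time(samples, lambda s: s.torque_nm <= torque_limit_nm, fault_t_ms)
    t_dtc = first_time(samples, lambda s: s.dtc_logged, fault_t_ms)
    return {
        "torque_reduced_in_time":
            t_torque is not None and t_torque - fault_t_ms <= torque_deadline_ms,
        "dtc_logged_in_time":
            t_dtc is not None and t_dtc - fault_t_ms <= dtc_deadline_ms,
    }


# Hypothetical trace: sensor dropout injected at t = 100 ms.
trace = [
    Sample(0.0, 200.0, False),
    Sample(100.0, 200.0, False),
    Sample(130.0, 80.0, False),
    Sample(160.0, 50.0, True),
]
verdict = evaluate_scenario(trace, fault_t_ms=100.0, torque_limit_nm=100.0,
                            torque_deadline_ms=50.0, dtc_deadline_ms=50.0)
print(verdict)
```

In this made-up trace the torque drops in time but the diagnostic entry arrives 60 ms after the fault, so the second criterion fails. Keeping the evaluation separate from the bench means the same function can score offline, real-time, and HIL runs.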

Repeatability depends on configuration control. Version your models, your test scripts, your I/O mappings, and your network settings so reruns mean something. Treat timing budgets as first-class artefacts, with clear limits for loop time, sensor-to-actuator latency, and network delivery, since those are often where late failures hide.
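One lightweight way to make reruns comparable is to fingerprint the full bench configuration and store the fingerprint with each result. The manifest layout, field names, and values below are assumptions for illustration; the hashing uses only the Python standard library.

```python
import hashlib
import json


def manifest_fingerprint(manifest):
    """Return a SHA-256 hex digest of a manifest, independent of key order."""
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical manifest: versions and timing budgets are placeholders.
manifest = {
    "plant_model": "vehicle_dynamics@1.4.2",
    "test_scripts": "regression@0.9.0",
    "io_mapping": "chassis_bench_rev_c",
    "network_settings": "gateway_profile_7",
    "timing_budgets_ms": {
        "loop_time": 1.0,
        "sensor_to_actuator": 5.0,
        "network_delivery": 2.0,
    },
}
fingerprint = manifest_fingerprint(manifest)
print(fingerprint)
```

Two runs with the same fingerprint used the same models, scripts, mappings, and budgets; a changed fingerprint is a prompt to check what moved before comparing results.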

Applying Open Alliance Automotive Ethernet ECU test specification in labs

Applying the Open Alliance Automotive Ethernet ECU test specification in labs means validating link behaviour and network robustness in a controlled, repeatable setup, not only checking that packets pass. You’re proving interoperability, startup behaviour, error handling, and tolerance to noise and delay. Done well, these tests prevent integration stalls when ECUs, switches, and sensors come from different suppliers.

Start with conformance-style checks that confirm the ECU establishes link reliably across power cycles, cable lengths, and operating modes. Move next to robustness checks that stress the network, since packet timing and burstiness can influence control behaviour even when average bandwidth looks fine. Keep diagnostics in scope, because engineers need clear fault codes and timestamps when link or frame errors happen under stress.
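The point about burstiness can be made concrete by comparing average load with peak load per window. The sketch below works on captured frame timestamps and sizes; the window length and the capture itself are made up, and the calculation is a generic one rather than a procedure taken from the specification.

```python
from collections import defaultdict


def load_profile(frames, window_us):
    """Average and peak offered load in bit/s from (timestamp_us, size_bytes) pairs."""
    frames = list(frames)
    if not frames:
        raise ValueError("no frames captured")
    bits_per_window = defaultdict(int)
    for timestamp_us, size_bytes in frames:
        bits_per_window[timestamp_us // window_us] += size_bytes * 8
    # Count every window between the first and last frame, including idle ones.
    n_windows = max(bits_per_window) - min(bits_per_window) + 1
    total_bits = sum(bits_per_window.values())
    return {
        "average_bps": total_bits * 1_000_000 / (n_windows * window_us),
        "peak_bps": max(bits_per_window.values()) * 1_000_000 / window_us,
    }


# Hypothetical capture: a burst of ten 1000-byte frames, then one late frame.
capture = [(i, 1000) for i in range(10)] + [(9000, 1000)]
profile = load_profile(capture, window_us=1000)
print(profile)
```

Here the average is under 9 Mbit/s while the busiest 1 ms window carries 80 Mbit/s, which is the kind of gap that average-bandwidth figures hide.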

Real-time simulation adds value when you combine Ethernet traffic with plant dynamics and ECU timing. Network tests stay meaningful when the ECU is also executing its control loop, handling interrupts, and reacting to sensor changes, rather than sitting idle while a traffic generator floods frames. That combined load is where you’ll see queueing, missed deadlines, or recovery logic that doesn’t meet your safety goals.

Selecting automotive ECU testing tools and equipment for scalable labs

Selecting automotive ECU testing tools should start with what must be deterministic, what must be measurable, and what must be automated. You need predictable timing, traceable signal paths, and a repeatable way to run regression across software releases. Lab scale comes from standardised setups, not from one-off rigs that only a few specialists can operate.

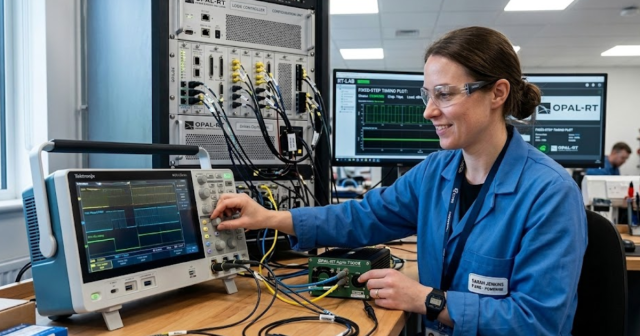

Start your evaluation with integration and upkeep, not just performance claims. The right automotive ECU testing equipment provider will support long-lived test benches with stable drivers, calibration routines, and clear update paths for firmware and test scripts. OPAL-RT is often used in this context when teams need a real-time simulator that integrates tightly with HIL I/O and automation, while staying flexible as ECU scope and network content grow.

- Deterministic loop timing with measurable end-to-end latency

- I/O coverage for sensors, actuators, and fault insertion needs

- Network tools that capture timestamps and error counters

- Automation hooks for unattended regression and reporting

- Configuration control for models, wiring, and test artefacts

Common setup errors that reduce confidence in ECU test results

Confidence drops when test results depend on hidden timing variation, unclear signal paths, or untracked configuration changes. The most common failure mode is mistaking “pass once” for “pass reliably,” especially when the test bench can’t reproduce the same timing across runs. Another frequent issue is letting wiring and scaling live in tribal knowledge instead of controlled documentation.

Start by treating time and calibration as measurable artefacts. Validate sampling rates, anti-alias filtering assumptions, and actuator delays, then record them with the test evidence. Keep network capture and ECU logs time-synchronised, since mismatched timestamps make root cause analysis feel like guesswork. Avoid silent model changes, since plant models that shift without version control will create false trends that waste engineering time.
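When the ECU log and the network capture use different clocks, a simple first step is to estimate the offset from events both sides recorded. This sketch assumes a constant offset with no drift and integer-microsecond timestamps; the function names and sample values are hypothetical.

```python
from statistics import median


def estimate_offset_us(ecu_sync_us, capture_sync_us):
    """Median offset (capture minus ECU) over events seen by both clocks."""
    if not ecu_sync_us or len(ecu_sync_us) != len(capture_sync_us):
        raise ValueError("need matching, non-empty lists of sync timestamps")
    return median(c - e for e, c in zip(ecu_sync_us, capture_sync_us))


def to_capture_time(ecu_events, offset_us):
    """Shift (timestamp_us, label) ECU events into the capture timebase."""
    return [(t + offset_us, label) for t, label in ecu_events]


# Hypothetical sync events observed on both sides.
offset = estimate_offset_us([100, 200, 300], [1100, 1201, 1300])
aligned = to_capture_time([(250, "DTC set")], offset)
print(offset, aligned)
```

The median keeps one noisy sync event from skewing the estimate. Where clocks drift over a long run, a hardware time synchronisation scheme is a better fit than a single offset.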

Disciplined execution will beat heroic debugging every time. When you standardise timing budgets, configuration control, and regression criteria, you stop arguing about the bench and start learning about the ECU. That’s the practical reason teams stick with real-time simulation and HIL once they’ve invested in it, and why OPAL-RT setups are typically managed like critical lab infrastructure rather than ad hoc tooling.
