Key Takeaways

- Shorter firmware testing cycles come from measuring end-to-end lead time, then fixing the single biggest wait state before adding more tests.

- A clear test pyramid keeps fast checks close to developers and reserves hardware-heavy validation for risks that only timing and I/O can prove.

- Hardware-in-the-loop pays off when it runs unattended with stable resets, versioned models, and deterministic results you can trust.

Every embedded team knows the drag of long waits for boards, lab slots, and test results that arrive after context has already switched. The cost of slow feedback is not just engineering frustration, because escaped defects turn into schedule slips, rework, and support load. Inadequate software testing infrastructure was estimated to cost the U.S. economy $59.5 billion per year. Faster firmware testing is mainly a workflow problem, so the fix starts with how tests are staged, triggered, and trusted.

The most reliable path to speed is a disciplined split between quick checks that run constantly and slower validation that runs only when it pays back. You shorten loops by pushing more defects into cheap, repeatable checks, while keeping hardware work focused on what only hardware can prove. Embedded software testing tools matter, but tool choice only helps after you set standards for test boundaries, deterministic results, and ownership of failures. The sections below focus on where time really goes, what to change first, and how to decide when hardware-in-the-loop belongs in your firmware testing plan.

“Short firmware testing cycles come from tighter feedback loops, not more testing.”

Find the biggest time sinks in firmware testing cycles

Most firmware testing delays come from waiting, not running tests. Lab contention, manual flashing, slow triage, and flaky test behaviour stretch a simple code change into a multi-hour loop. When you measure end-to-end lead time from commit to a trusted pass or fail, the worst bottleneck is usually obvious. Fixing that single constraint often beats adding more tests.

Start by tracking three timestamps for a week: when a change is ready for test, when it starts running, and when a developer reads a clear result. The gap between “ready” and “running” points to queueing, hardware access, or manual steps. The gap between “running” and “understood” points to poor logs, unclear ownership, or missing artefacts such as firmware builds and configuration files. You’ll also surface hidden work like re-running tests after power cycles or swapping cables after a bench reset.

Once you can name the delay, you can treat it like a production constraint. Shared lab gear needs booking rules, reset automation, and a standard way to restore known-good states. Manual steps need scripts and versioned config, not tribal knowledge. Flakiness needs a hard policy, because a fast test that can’t be trusted will still slow you down in triage and debate.

Set a test pyramid for embedded software and firmware

A practical test pyramid for embedded development puts most checks where feedback is fastest and cheapest, then reserves hardware-heavy validation for risks that matter. Firmware unit tests and component checks should run frequently and finish quickly. Integration tests should validate module boundaries, timing assumptions, and error handling. Full embedded system testing on hardware should prove I/O, electrical behaviour, and closed-loop control, because those costs are highest.

A useful way to set the pyramid is to write down what each layer must prove and what it must never try to prove. Low-level tests should validate pure logic, state machines, and safety checks without needing sensors or actuators. Mid-level tests should validate driver interactions, scheduling behaviour, and protocol framing using controlled stubs or simulation models. Top-level tests should focus on end-to-end safety cases, fault responses, and performance under realistic timing, because these are the items that fail quietly until hardware is involved.

The tradeoff is coverage versus confidence. Shifting checks earlier will always reduce cost per run, but only if you keep interfaces honest so early tests do not drift away from the product. That means clear separation between hardware access layers and business logic, plus a versioned contract for data formats and timing. When the pyramid is explicit, you can say “no” to slow tests at the bottom and “yes” to fewer, sharper tests at the top.

Automate build, flash, and test to shorten feedback loops

Automation shortens firmware testing loops when it removes human waiting and produces repeatable results, not when it simply runs more steps. A good pipeline compiles, packages, flashes, runs targeted tests, and captures logs in a single push-button flow. The best pipelines also record the exact firmware, configuration, and test assets that produced a failure. That traceability turns triage from a meeting into a fix.

The quickest wins usually come from standardizing how devices are prepared and reset. A board should be flashable and recoverable without special knowledge, even after a test crashes or a watchdog fires. Test logs should be machine-readable and include the firmware identifier, feature flags, and I/O configuration so failures can be replayed. When a failure appears, the first response should be a scripted rerun with the same artefacts, not a manual re-creation attempt.

- One command that builds and packages the exact testable firmware image

- Automated flashing with a verified readback or checksum step

- Hardware reset control that returns the device to a known state

- Log capture that stores artefacts and timing data per test run

- Clear pass fail rules that treat flaky tests as defects

Automation also forces useful discipline. If a test can’t run unattended, it’s often too coupled to a person’s judgement, a bench quirk, or an undocumented cable path. Tightening that up will reduce false alarms and make your embedded software testing program easier to scale across teams and sites.

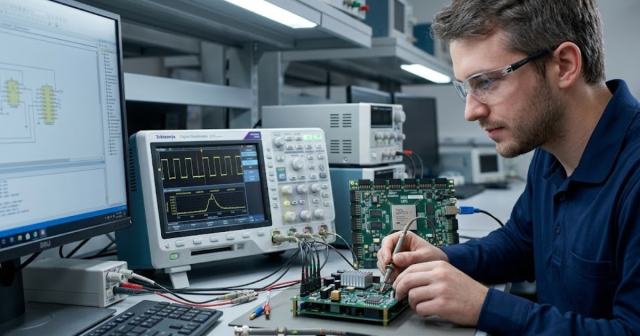

Choose embedded software testing tools that integrate with CI

Embedded software testing tools are only as fast as their integration points. The right toolchain plugs into your CI system, uses the same build artefacts you ship, and exports results in formats your dashboards and quality gates understand. Tools that require manual setup, GUI-only workflows, or ad hoc licences will add waiting even if their test execution is quick. Selection should focus on repeatability, automation hooks, and debuggable failures.

Evaluate tools against three practical questions. First, can they run headless and unattended on your build agents, including access to debuggers, flash hardware, and required permissions? Second, can they store artefacts and metadata so a failed run can be reproduced on demand, even weeks later? Third, can they isolate tests so one crash does not poison the entire run, which is a common issue in firmware testing when a single fault leaves hardware stuck?

Tradeoffs will show up quickly. Deep hardware insight tools often have higher setup effort, while lightweight frameworks can miss timing issues, interrupt ordering, and peripheral edge cases. Treat this as an engineering choice, not a procurement choice. A smaller set of tools that your team can automate and trust will beat a large set that only experts can operate.

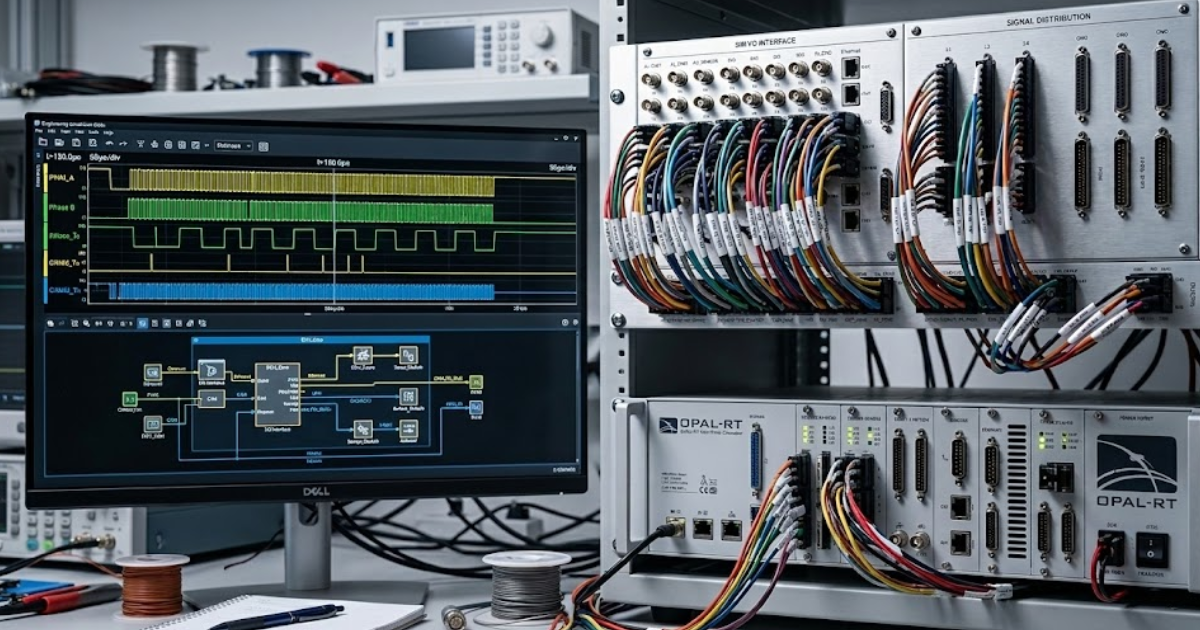

Use hardware in the loop for closed-loop validation

Hardware in the loop improves firmware validation because it tests your control code against realistic timing and I/O behaviour without waiting for the full product. A hardware-in-the-loop setup runs the controller firmware on its target hardware while a real-time simulator provides sensors, loads, and plant dynamics. That closed-loop testing catches issues that pure simulation and static checks miss, such as saturation, timing jitter, and fault handling under stress. It also makes regression testing repeatable once the rig is stable.

A concrete scenario shows why this matters. A team testing inverter control firmware can run the controller on its production microcontroller while the simulator generates phase currents, DC bus ripple, and sensor dropouts, then asserts that the firmware enters a safe mode within a strict time budget. That same run can inject a stuck-at fault on an input and verify the diagnostic path, without risking hardware damage or waiting for a full power stage build. A platform such as OPAL-RT is often used for this kind of closed-loop validation when you need deterministic timing and repeatable fault injection.

HIL is not a replacement for bench testing, and it will slow you down if it becomes a fragile science project. The rig needs stable reset paths, versioned models, and clear ownership for both firmware and simulation assets. Start with a narrow set of high-risk behaviours where the payback is obvious, then expand only after the first set of tests runs unattended. When the HIL loop is reliable, it becomes a fast gate for changes that would otherwise queue for scarce hardware.

“The goal is dependable signal, not maximum activity.”

Balance simulation fidelity, cost, and coverage across test stages

Speed comes from matching test fidelity to the risk you’re trying to retire. High-fidelity simulation and HIL provide stronger confidence, but they cost more to build, run, and maintain. Low-fidelity checks run faster and earlier, but they miss timing and physical effects. A balanced workflow assigns each stage a clear purpose so you get broad coverage without turning every change into a full-system exercise.

| Where you run the test | What it catches best | What it costs you | When it earns its keep |

| Developer workstation with isolated unit tests | Logic errors, boundary conditions, and regression in core algorithms | Low setup effort, but limited timing and I/O coverage | Every change that touches business logic or state machines |

| CI runner using component tests with stubs | Interface breaks, protocol framing issues, and error handling paths | Moderate maintenance of stubs and fixtures | When modules interact and failures must be reproducible |

| Software-in-the-loop simulation | Control stability trends, sequencing issues, and integration timing assumptions | Model upkeep and risk of model drift from hardware | Before hardware is ready and after large refactors |

| Hardware-in-the-loop closed-loop testing | Timing jitter impacts, fault responses, and I/O edge cases under load | Rig build effort and ongoing calibration work | For safety-critical paths and costly-to-debug integration failures |

| Bench testing on full hardware assemblies | Electrical behaviour, signal integrity issues, and integration with production peripherals | High lab time, higher risk of hardware wear and setup variance | Release candidates and changes that touch hardware interfaces |

Cost is not only money. It also shows up as model upkeep, lab scheduling friction, and the cognitive load of debugging. NIST estimated that better testing infrastructure could reduce the economic impact of inadequate testing by about $22.2 billion, and the practical reading for embedded teams is simple: invest in the stages that remove the most reruns and the most lab queueing. When each stage has a job and a stopping point, simulation and hardware complement each other instead of competing for attention.

Avoid common embedded system testing traps that slow teams

The most damaging traps in embedded system testing are preventable: flaky tests, unclear ownership, and hardware setups that can’t be restored quickly. Speed depends on trust, because every suspect result triggers reruns and meetings. A test suite that fails unpredictably will slow releases more than a smaller suite that is deterministic. The goal is dependable signal, not maximum activity.

Flakiness needs a firm policy. If a test fails and the team’s first instinct is to rerun it “just to see,” the feedback loop is already broken, and you’ll pay for it repeatedly. Ownership matters just as much, because a failure without a clear owner becomes a queue, and queues are where firmware testing goes to die. Hardware also needs the same hygiene as software, with documented wiring, reset control, and consistent I/O mapping so results mean the same thing every time.

Long-term speed comes from treating testing as a product with users, uptime, and support, not as a side task. When you invest in repeatable rigs, versioned models, and clean artefact capture, each fix reduces the cost of the next change. Teams that work with OPAL-RT often see this shift when HIL assets move from one-off lab work to a maintained capability with clear standards, because the rig stops being a bottleneck and starts being a dependable gate. That discipline will keep your feedback loops short even as complexity rises.

EXata CPS has been specifically designed for real-time performance to allow studies of cyberattacks on power systems through the Communication Network layer of any size and connecting to any number of equipment for HIL and PHIL simulations. This is a discrete event simulation toolkit that considers all the inherent physics-based properties that will affect how the network (either wired or wireless) behaves.