Modular architectures for building scalable real-time simulation platforms

03 / 13 / 2026

Key Takeaways

- Set measurable timing, I/O, and fidelity budgets first, then treat overruns as a defined system behaviour.

- Use modular contracts and standardized interfaces to keep model, I/O, and compute changes from breaking the platform.

- Protect determinism at scale with a single time authority, explicit rate boundaries, and always-on latency and jitter observability.

A scalable real-time simulator stays deterministic as it grows.

That outcome rarely comes from adding more compute or more nodes; it comes from tighter control over boundaries, time, and responsibility. Public internet time services typically keep a client clock within about 10 ms of UTC, which is fine for logging but far too coarse for distributed simulation timing budgets that are measured in microseconds. Modular architecture is the practical way to put those controls in place without turning every scale step into a rewrite.

The most reliable approach to scalable architecture design treats modularity as a set of testable contracts, not a folder structure. Each contract locks down what runs where, what data crosses boundaries, and what “on time” means at every interface. You’ll still iterate on fidelity and performance, but the platform stays stable while models, I/O, and compute change around it.

Define requirements for a modular real-time simulator platform

A modular real-time simulator platform starts with requirements that are measurable at run time, not just stated in documents. You need hard limits for time step behaviour, acceptable jitter, I/O latency budgets, and what happens on overrun. Those limits will decide partitioning, hardware choices, and how strict your module contracts must be.

Start with the constraints that can’t be negotiated once you connect hardware or people start trusting results. Determinism targets come first, then I/O and fidelity, then scale targets like node count and model size. Treat integration as a first-class requirement, because every new model and every new interface adds risk unless it fits a known pattern.

- Define a fixed time step range and allowed missed deadlines.

- Set end-to-end I/O latency and jitter limits for closed loops.

- Specify fidelity targets that map to solver and discretization choices.

- List required interfaces and protocols with versioning expectations.

- Choose pass/fail criteria for timing, stability, and repeatability.

These requirements should be validated early with a minimal workload that still exercises timing, I/O, and scheduling. A small, honest test often surfaces the real constraint, such as an I/O path that adds jitter or a solver setting that causes overruns. Once the limits are clear, modular boundaries stop being philosophical and start being executable.
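One way to make such limits executable is to encode them as data and check every run against them. The sketch below is illustrative only: the `TimingBudget` fields, the `check_run` helper, and all numeric limits are assumptions chosen for the example, not values from a specific platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TimingBudget:
    """Hypothetical run-time limits; every number used below is illustrative."""
    step_us: float             # fixed time step
    max_jitter_us: float       # allowed deviation of a step's start time
    max_io_latency_us: float   # end-to-end I/O latency limit
    max_missed_deadlines: int  # overruns tolerated per run


def check_run(budget, step_durations_us, start_jitter_us, io_latencies_us):
    """Return a list of budget violations; an empty list means the run passes."""
    failures = []
    overruns = sum(1 for d in step_durations_us if d > budget.step_us)
    if overruns > budget.max_missed_deadlines:
        failures.append(f"overruns: {overruns} > {budget.max_missed_deadlines}")
    worst_jitter = max((abs(j) for j in start_jitter_us), default=0.0)
    if worst_jitter > budget.max_jitter_us:
        failures.append(f"jitter: {worst_jitter} us > {budget.max_jitter_us} us")
    worst_io = max(io_latencies_us, default=0.0)
    if worst_io > budget.max_io_latency_us:
        failures.append(f"io latency: {worst_io} us > {budget.max_io_latency_us} us")
    return failures


budget = TimingBudget(step_us=50.0, max_jitter_us=2.0,
                      max_io_latency_us=20.0, max_missed_deadlines=0)
result = check_run(budget,
                   step_durations_us=[42.0, 48.5, 51.2],  # third step overruns
                   start_jitter_us=[0.3, -0.8, 1.1],
                   io_latencies_us=[12.0, 14.5, 13.1])
print(result)  # ['overruns: 1 > 0']
```

Returning a list of violations rather than a single boolean keeps the pass/fail decision explicit while still telling the engineer which budget was broken.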

Break the simulator into modules with clear contracts

Module boundaries should match responsibilities you can test independently, such as time stepping, model execution, I/O adaptation, and logging. Each module contract must define inputs, outputs, update rates, and ownership of state. Clear contracts stop scaling work from turning into cross-cutting edits that introduce timing drift and hard-to-reproduce bugs.

Good contracts read like a checklist: data types and units, valid ranges, timestamps, and what “late” means. They also cover lifecycle rules, including initialization, warm start, and reset behaviour, since many timing issues appear during transitions. Version contracts and treat them as code, with automated checks that reject incompatible changes.

Granularity matters. A contract that wraps an entire subsystem can be too coarse, since a single change forces a large retest, while contracts at the signal level create high overhead and brittle interfaces. Aim for boundaries that let teams swap a model, a solver, or an I/O adapter without touching the scheduler, and insist that every boundary has a measurable performance budget.
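A contract treated as code can be as small as a typed record plus a compatibility check that runs in continuous integration. The following is a minimal sketch under assumed names (`SignalSpec`, `ModuleContract`, `incompatibilities`, and the example modules are all hypothetical); a real contract would also cover timestamps and lifecycle rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SignalSpec:
    name: str
    unit: str
    rate_hz: float
    lo: float   # lower bound of the valid range
    hi: float   # upper bound of the valid range


@dataclass(frozen=True)
class ModuleContract:
    module: str
    version: str
    inputs: tuple = ()
    outputs: tuple = ()


def incompatibilities(producer, consumer):
    """List reasons the producer's outputs cannot feed the consumer's inputs."""
    offered = {s.name: s for s in producer.outputs}
    problems = []
    for need in consumer.inputs:
        have = offered.get(need.name)
        if have is None:
            problems.append(f"{need.name}: not produced by {producer.module}")
            continue
        if have.unit != need.unit:
            problems.append(f"{need.name}: unit {have.unit} != {need.unit}")
        if have.rate_hz != need.rate_hz:
            problems.append(f"{need.name}: rate {have.rate_hz} Hz != {need.rate_hz} Hz")
        if have.lo < need.lo or have.hi > need.hi:
            problems.append(f"{need.name}: produced range exceeds accepted range")
    return problems


# Hypothetical modules: the producer publishes volts, the consumer expects kilovolts.
inverter = ModuleContract(
    "inverter_model", "1.2.0",
    outputs=(SignalSpec("v_dc", "V", 20_000.0, 0.0, 1_000.0),))
controller_io = ModuleContract(
    "controller_io", "1.0.0",
    inputs=(SignalSpec("v_dc", "kV", 20_000.0, 0.0, 1.0),))

problems = incompatibilities(inverter, controller_io)
print(problems)
```

Here the check reports both the unit mismatch and the range violation that follows from it, which is the kind of change an automated gate could reject before it reaches the lab.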

Choose time management for determinism across distributed nodes

Distributed determinism depends on one clear authority for simulation time and a defined rule for when data is considered valid. You’ll choose among conservative synchronization, optimistic approaches with rollback, and fixed-latency pipelines, but the choice must match your time step and I/O loop needs. The right time management model turns node-to-node links into predictable delays.

Fixed-step execution is usually the anchor for a real-time simulator, but multi-rate designs are common once you add high-frequency power electronics, slower plant dynamics, and external interfaces. Multi-rate works when each rate boundary has explicit resampling rules and timestamps; otherwise, you get hidden phase errors that look like control tuning problems. Network transport should be treated as part of the timing model, with bounded latency and explicit buffering rather than “best effort” delivery.
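An explicit rate boundary can be sketched as a zero-order hold that carries timestamps and reports staleness instead of hiding it. The example assumes integer nanosecond simulation timestamps and a hypothetical `HoldBoundary` class; zero-order hold is only one possible resampling rule, chosen here for brevity.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Sample:
    t_ns: int      # simulation timestamp, integer nanoseconds
    value: float


class HoldBoundary:
    """Zero-order hold from a slow producer to a fast consumer."""

    def __init__(self, max_age_ns: int) -> None:
        self.max_age_ns = max_age_ns
        self._latest: Optional[Sample] = None

    def publish(self, sample: Sample) -> None:
        # Reject out-of-order data so the boundary never moves backwards in time.
        if self._latest is not None and sample.t_ns <= self._latest.t_ns:
            raise ValueError("sample timestamps must strictly increase")
        self._latest = sample

    def read(self, now_ns: int) -> Tuple[float, bool]:
        """Return (held value, fresh flag) as seen at simulation time now_ns."""
        if self._latest is None:
            raise LookupError("no sample published yet")
        age_ns = now_ns - self._latest.t_ns
        if age_ns < 0:
            raise ValueError("sample is timestamped after the reader's clock")
        return self._latest.value, age_ns <= self.max_age_ns


boundary = HoldBoundary(max_age_ns=1_000_000)   # data older than 1 ms is stale
boundary.publish(Sample(t_ns=0, value=1.02))
print(boundary.read(now_ns=50_000))      # (1.02, True)
print(boundary.read(now_ns=2_000_000))   # (1.02, False)
```

Returning the freshness flag alongside the value forces the consumer to decide what a late sample means, rather than silently reusing old data.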

| Architecture checkpoint | What good looks like before you scale further |
| --- | --- |
| Time authority | One component owns time and publishes a verified clock. |
| Rate boundaries | Every cross-rate signal has a defined resampling rule. |
| Node links | Latency is bounded and modelled as part of the system. |
| Overrun policy | Late steps trigger a known response, not silent drift. |
| Repeatability | Same inputs produce the same outputs across restarts. |
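The repeatability checkpoint can be turned into an automated test by hashing the output trace of two runs and comparing digests. This is a sketch that assumes bit-exact repeatability on the same platform is the target; `toy_run` is a stand-in for a real model and `trace_digest` is a hypothetical helper.

```python
import hashlib
import struct


def trace_digest(trace):
    """SHA-256 over (timestamp_ns, value) pairs, packed as int64 + float64."""
    h = hashlib.sha256()
    for t_ns, value in trace:
        h.update(struct.pack("<qd", t_ns, value))
    return h.hexdigest()


def toy_run(steps, step_ns=50_000):
    """Stand-in model: a first-order filter stepped at a fixed 50 us period."""
    x, trace = 0.0, []
    for k in range(steps):
        x = 0.9 * x + 0.1
        trace.append((k * step_ns, x))
    return trace


first = trace_digest(toy_run(1000))
second = trace_digest(toy_run(1000))
print(first == second)  # True
```

Comparing digests instead of full traces keeps the check cheap enough to run after every restart or configuration change.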

Scale execution with partitioning, scheduling, and hardware acceleration

Scaling execution means placing work where it can meet deadlines, then enforcing that plan with scheduling rules you can verify. Partition the model along cut points that minimize data exchange and keep tight feedback paths local. Hardware acceleration helps when parts of the computation have predictable structure, but it only pays off if time and data contracts stay intact.

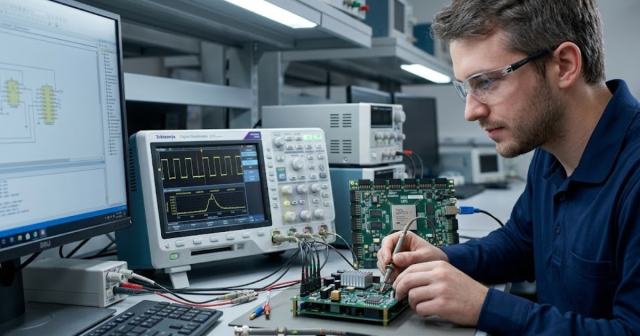

A single concrete scenario shows the tradeoffs: a lab runs a HIL setup for a grid-connected inverter controller at a 50 µs step, with switching dynamics requiring short, repeatable compute bursts and a network model that is heavier but less time sensitive. The switching portion stays on an accelerator with deterministic execution, while the network portion runs on CPUs and exchanges phasor-level data at a slower rate, with explicit timestamps and buffering. The controller I/O path gets its own latency budget and is validated under load, not just in an idle run.

Partitioning should be guided by measured step time distributions, not average compute time. Schedulers need to reserve headroom for worst-case bursts, garbage collection, and I/O interrupts. If you can’t explain why a task meets its deadline, scaling will amplify the uncertainty until the simulator becomes a timing lottery.
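The difference between average and distribution-based checks is easy to show with numbers. The sketch below uses a nearest-rank percentile and an assumed 20% headroom; the `fits_deadline` helper, the percentile choice, and the sample data are illustrative assumptions.

```python
import math
import statistics


def percentile(samples, q):
    """Nearest-rank percentile for q in (0, 100]."""
    ordered = sorted(samples)
    rank = math.ceil(q / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def fits_deadline(step_times_us, deadline_us, headroom=0.2, q=99):
    """True only if the worst case meets the deadline and the tail leaves headroom."""
    budget_us = deadline_us * (1 - headroom)
    return (max(step_times_us) <= deadline_us
            and percentile(step_times_us, q) <= budget_us)


# Illustrative measurements: mostly 30 us, with 2% of steps bursting to 46 us.
step_times_us = [30.0] * 980 + [46.0] * 20

print(statistics.mean(step_times_us) < 40.0)          # True: the average looks safe
print(fits_deadline(step_times_us, deadline_us=50.0))  # False: the tail eats the headroom
```

A placement that passes on the mean and fails on the tail is a candidate for repartitioning before more load is added.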

Standardize interfaces for models, I/O, and toolchains

Interface standards are what keep modular architecture from collapsing under integration work. Define a small set of canonical signal representations with units, timestamps, and metadata, then force all model and I/O adapters to translate through them. Standardization reduces the number of edge cases you need to test as modules multiply.
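A canonical signal representation can be sketched as one record type that every adapter must produce. The field names, the unit table, and the `to_canonical` helper below are assumptions for illustration; a production version would cover far more units and metadata.

```python
from dataclasses import dataclass

# Hypothetical unit table: raw unit -> (canonical SI unit, scale factor).
_TO_SI = {
    "V": ("V", 1.0), "kV": ("V", 1e3), "mV": ("V", 1e-3),
    "A": ("A", 1.0), "kA": ("A", 1e3),
}


@dataclass(frozen=True)
class CanonicalSignal:
    name: str
    value: float   # always expressed in the canonical SI unit
    unit: str
    t_ns: int      # simulation timestamp, integer nanoseconds
    source: str    # metadata: which adapter produced the sample


def to_canonical(name, raw_value, raw_unit, t_ns, source):
    """Translate an adapter-specific reading into the canonical form."""
    try:
        unit, scale = _TO_SI[raw_unit]
    except KeyError:
        raise ValueError(f"unsupported unit {raw_unit!r}") from None
    return CanonicalSignal(name, raw_value * scale, unit, t_ns, source)


sig = to_canonical("v_dc", 2.0, "kV", t_ns=150_000, source="analog_in_adapter")
print(sig.value, sig.unit)  # 2000.0 V
```

Rejecting unknown units outright, rather than passing values through unscaled, is a deliberate choice here: a loud failure at the adapter is cheaper than a silent factor-of-1000 error in a closed loop.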

Model interfaces should separate numerical state exchange from configuration, and both should be versioned. I/O interfaces should treat wiring, scaling, calibration, and fault states as data, not manual lab steps, so the same setup can be recreated and audited. Toolchain integration works best when code generation, model exchange, and parameter management are treated as repeatable build outputs, with clear artefact ownership.

Teams that use OPAL-RT often enforce this separation by keeping real-time execution concerns isolated from host-side model preparation and test orchestration, which makes integration changes safer to review and easier to roll back. The point is not a specific product; it’s the discipline of making each interface explicit, testable, and stable under change.

Build observability for latency, jitter, and numerical stability

Observability is the safety net that keeps scaling work honest. You need visibility into end-to-end latency, per-step jitter, queue depths, and numerical stability markers, all time-stamped against the same clock. Without these signals, engineers will argue from symptoms, and timing bugs will masquerade as model fidelity issues.

Concurrency bugs accounted for 2% to 16% of reported bugs across several large software systems in one well-known empirical study, and those defects were disproportionately hard to reproduce. That matters because distributed simulators are concurrency machines, with scheduling, messaging, and I/O all interacting under deadline pressure.

“Observability turns ‘it glitched once’ into a traceable chain of causes.”

Instrument at the module boundary, not only at the system level. Record step start and end times, overrun counts, dropped or late messages, and numeric indicators like solver residuals or saturation events. Keep a lightweight mode that can run all the time, then add targeted tracing that you can switch on without changing timing behaviour.
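A lightweight, always-on monitor at a module boundary might look like the sketch below. It is written in Python for readability only; a real-time target would use the platform's own clock and a lock-free counter store. The `StepMonitor` class is hypothetical, and the clock is injectable so the logic can be tested with fixed timestamps.

```python
import time


class StepMonitor:
    """Constant-work counters for one module: duration, jitter, and overruns."""

    def __init__(self, period_ns, clock=time.perf_counter_ns):
        self.period_ns = period_ns
        self._clock = clock
        self._start = None
        self._prev_start = None
        self.steps = 0
        self.overruns = 0
        self.max_duration_ns = 0
        self.max_jitter_ns = 0

    def begin(self):
        self._start = self._clock()

    def end(self):
        duration = self._clock() - self._start
        self.steps += 1
        self.max_duration_ns = max(self.max_duration_ns, duration)
        if duration > self.period_ns:
            self.overruns += 1
        if self._prev_start is not None:
            # Jitter: how far the start-to-start interval strays from the period.
            jitter = abs((self._start - self._prev_start) - self.period_ns)
            self.max_jitter_ns = max(self.max_jitter_ns, jitter)
        self._prev_start = self._start


# Deterministic demonstration with a fake clock (timestamps in nanoseconds).
fake_times = iter([0, 40, 100, 170, 205, 330])
monitor = StepMonitor(period_ns=100, clock=lambda: next(fake_times))
for _ in range(3):
    monitor.begin()
    monitor.end()
print(monitor.steps, monitor.overruns, monitor.max_duration_ns, monitor.max_jitter_ns)
# 3 1 125 5
```

Because the monitor does the same small amount of work on every step, leaving it enabled does not change the timing profile between a traced run and a normal one.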

Avoid common scaling failures in real-time simulation systems

Most scaling failures come from hidden coupling, unbounded timing paths, and inconsistent interface semantics. Deadlines get missed because a “small” change altered a cross-node dependency, or because an adapter quietly added buffering that shifted phase. The fix is rarely more compute; it’s tighter contracts, better measurement, and a stricter change process.

Watch for five recurring failure modes: modules that share state outside contracts, mismatched units or timestamps at boundaries, scheduling policies that assume average load, I/O paths tested only in isolation, and reset sequences that don’t restore a known initial state. Each one grows more expensive with every new node and every added interface. Teams that treat timing budgets like financial budgets, with explicit allocations and audits, spend less time in late-stage triage.
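Treating timing like a financial budget suggests a simple audit: allocations must sum to no more than the step, and every allocation needs a measurement behind it. The following sketch uses hypothetical module names and illustrative numbers; `audit_budget` is an assumed helper, not part of any toolchain.

```python
def audit_budget(step_us, allocated_us, measured_worst_us):
    """Compare per-module allocations with measured worst cases; return findings."""
    findings = []
    total = sum(allocated_us.values())
    if total > step_us:
        findings.append(f"allocations total {total} us, step is {step_us} us")
    for name, limit in allocated_us.items():
        used = measured_worst_us.get(name)
        if used is None:
            findings.append(f"{name}: no measurement recorded")
        elif used > limit:
            findings.append(f"{name}: worst case {used} us exceeds {limit} us")
    return findings


allocated = {"model": 30.0, "io": 10.0, "messaging": 5.0}   # illustrative split of a 50 us step
measured = {"model": 27.5, "io": 11.2}                       # messaging was never measured

findings = audit_budget(50.0, allocated, measured)
print(findings)
# ['io: worst case 11.2 us exceeds 10.0 us', 'messaging: no measurement recorded']
```

Flagging unmeasured allocations as findings matters as much as flagging overspends, since an unaudited path is where hidden buffering tends to appear.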

Long-term stability comes from treating your scalable architecture design as a product that has to keep working after the original builders move on. The strongest teams keep module contracts simple, enforce them with automated checks, and refuse scale requests that bypass time discipline. OPAL-RT’s best platform deployments follow that pattern: measured constraints first, modular boundaries second, then scale as a controlled sequence of verified changes.
