Accelerated simulation guide for grid and power systems

Power Systems, Simulation, Microgrid

01/13/2026

Key Takeaways

- Faster-than-real-time simulation pays off when study throughput limits planning scope, since more runs expose sensitivities you can act on.

- Simulation acceleration improves planning accuracy through coverage of event sequences and long-duration checks, not through polishing a single base case.

- Faster-than-real-time and real time simulation work best as a staged workflow with shared models, strict version control, and targeted lab validation.

Accelerated simulation will cut grid study turnaround only when speed stays under control. The stakes are significant: weather-related outages were estimated to cost the U.S. economy an inflation-adjusted annual average of $18 billion to $33 billion between 2003 and 2012. Faster study cycles let you test more operating conditions before you commit settings, which reduces rework when results reach operations, protection, or planning reviews.

A staged workflow keeps results defensible and practical. Accelerated simulation gives you breadth across long time windows and large scenario sets. Real time simulation gives you timing you can trust when controls, relays, or I/O are involved. Used as a pair, they give you wide screening first and a tight validation gate at the end.

Accelerated simulation as a time compression tool for grid studies

Accelerated simulation runs a grid model faster than wall-clock time. The study goal stays the same, but results arrive sooner. Solver settings, step sizes, and model detail are chosen to support that speed. The runtime you save on each case buys broader scenario coverage within the same week.

A feeder planning team can compress a week of voltage profiles into a short session. Tap changers, capacitor switching, and slow control loops still operate on the simulated timeline. The run finishes fast enough that you can sweep settings across multiple seasons, not just a single day. That makes it easier to compare violations, losses, and equipment operations across operating points.
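The arithmetic behind "a week in a short session" is a single ratio, the speed factor, which is simulated time divided by wall-clock time. The sketch below assumes a hypothetical 2-hour session and a hypothetical sweep of 4 seasons by 10 setting combinations; none of these numbers come from a measured study.

```python
from datetime import timedelta

def speed_factor(simulated: timedelta, wall_clock: timedelta) -> float:
    """Simulated time per unit of wall-clock time; above 1.0 is faster than real time."""
    return simulated / wall_clock

def wall_clock_needed(simulated: timedelta, factor: float) -> timedelta:
    """Wall-clock time for a run of the given simulated length at a speed factor."""
    return simulated / factor

# Hypothetical numbers: one simulated week finished in a 2-hour session.
week = timedelta(days=7)
factor = speed_factor(week, timedelta(hours=2))
print(f"speed factor: {factor:.0f}x")  # 168 h / 2 h -> 84x

# Hypothetical sweep: 4 seasons x 10 tap/capacitor setting combinations,
# one simulated week each, run back to back on one machine.
sweep = week * (4 * 10)
print(f"sequential sweep: {wall_clock_needed(sweep, factor)}")  # 280 days / 84 = 80 hours
```

Even at 84x, a 40-run sweep takes 80 hours when run back to back, which is why the speed factor and the scenario count should be planned together.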

Time compression works only when you keep the behaviours that decide the answer. Switching detail is unnecessary for many energy metrics, yet it matters for harmonics and some protection checks. Clear pass/fail criteria stop the work from drifting into speed chasing, so we write those criteria before the first accelerated run. A small baseline set run without acceleration will anchor your accelerated runs and expose timing drift early.
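One way to make "criteria before the first run" concrete is to freeze them as data and evaluate every run against the same object. This is a minimal sketch; the metric names and limits (0.95 to 1.05 pu, 30 tap operations per day) are hypothetical placeholders, not recommended values.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Criterion:
    """One pass/fail rule on a scalar study metric, fixed before any run."""
    metric: str
    lower: float = float("-inf")
    upper: float = float("inf")

    def passes(self, value: float) -> bool:
        return self.lower <= value <= self.upper

# Hypothetical feeder criteria; replace with your study's own limits.
CRITERIA = (
    Criterion("min_voltage_pu", lower=0.95),
    Criterion("max_voltage_pu", upper=1.05),
    Criterion("tap_operations_per_day", upper=30),
)

def evaluate(results: dict) -> dict:
    """Pass/fail per criterion; a metric missing from the results fails."""
    return {
        c.metric: c.metric in results and c.passes(results[c.metric])
        for c in CRITERIA
    }

run = {"min_voltage_pu": 0.962, "max_voltage_pu": 1.061, "tap_operations_per_day": 12}
verdict = evaluate(run)
print(verdict)                # max_voltage_pu is the only failing criterion
print(all(verdict.values()))  # False
```

Treating a missing metric as a failure is a deliberate choice: a run that silently stopped reporting a value should not pass.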


How accelerated simulation differs from offline and real time execution

The main difference between offline, accelerated, and real time execution is the simulation clock contract. Offline simulation runs as fast or as slow as the computer allows. Accelerated simulation targets faster-than-real-time execution to compress long time windows. Real time simulation stays locked to wall-clock time so sampling and interfaces stay consistent.

A protection coordination workflow shows the split in practice. Offline runs help you tune settings across many fault locations, then rerun as needed. Accelerated runs turn that sweep into a high-throughput screen across many operating points. Real time runs then validate the short list where event order and I/O timing decide a trip. This checkpoint table is a fast way to sanity-check the choice.

| Checkpoint question | What to do |
| --- | --- |
| Are you scanning thousands of operating points and contingencies? | Use accelerated simulation and validate a small baseline subset. |
| Does the outcome depend on exact relay or control sampling? | Use real time simulation with a fixed step and timing audits. |
| Do you need maximum switching detail for the answer? | Use offline simulation and accept longer run times for accuracy. |
| Are you connecting external hardware or external control software? | Use real time simulation and measure end-to-end delay and jitter. |
| Are long time constants the focus, not sub-cycle transients? | Use accelerated simulation with averaged models and consistent sampling. |
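The checkpoint table can be encoded as a small lookup so that a study plan records which questions drove the choice of mode. In this sketch the question keys are hypothetical shorthand for the table rows, and the stage ordering (accelerated, then offline, then real time) is an assumption that follows the "screen wide, validate last" idea rather than anything the table itself prescribes.

```python
# Each entry mirrors one row of the checkpoint table: (question key, mode).
CHECKPOINTS = (
    ("scanning_many_cases", "accelerated"),
    ("depends_on_sampling", "real time"),
    ("needs_max_switching_detail", "offline"),
    ("external_hardware_or_software", "real time"),
    ("long_time_constants_focus", "accelerated"),
)

# Assumed staging: wide screening first, timing-locked validation last.
STAGE_ORDER = ("accelerated", "offline", "real time")

def recommend(answers: dict) -> list:
    """Modes implied by the 'yes' answers, without duplicates, in stage order."""
    modes = {mode for key, mode in CHECKPOINTS if answers.get(key, False)}
    return [mode for mode in STAGE_ORDER if mode in modes]

print(recommend({"scanning_many_cases": True, "depends_on_sampling": True}))
# ['accelerated', 'real time']
```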

When accelerated simulation produces valid grid and power system insight

Accelerated results are valid when model assumptions still match the physical question. Numerical stability must hold at the chosen step size and solver settings. Event timing must stay inside the tolerance your study requires. Baseline comparisons on a small set of cases will confirm those conditions.
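A baseline comparison usually reduces to two questions: do the traces agree within a value tolerance, and do the key events land within a timing tolerance? The sketch below assumes both traces are already resampled onto the same time grid; the voltages, event names, and tolerances are hypothetical.

```python
import math

def max_abs_deviation(baseline, accelerated):
    """Largest pointwise difference between two traces on the same time grid."""
    if len(baseline) != len(accelerated) or not baseline:
        raise ValueError("resample both traces onto the same non-empty time grid first")
    return max(abs(b - a) for b, a in zip(baseline, accelerated))

def event_offsets(baseline_events, accelerated_events):
    """Absolute timing error per baseline event; an event missing from the run is inf."""
    return {
        name: abs(accelerated_events[name] - t) if name in accelerated_events else math.inf
        for name, t in baseline_events.items()
    }

def baseline_check(baseline, accelerated, baseline_events, accelerated_events,
                   value_tol, time_tol_s):
    values_ok = max_abs_deviation(baseline, accelerated) <= value_tol
    timing_ok = all(dt <= time_tol_s
                    for dt in event_offsets(baseline_events, accelerated_events).values())
    return values_ok and timing_ok

# Hypothetical case: bus voltage in pu and two event timestamps in seconds.
base_v = [1.000, 0.980, 0.970, 0.990]
accel_v = [1.000, 0.981, 0.968, 0.990]
base_ev = {"fault": 0.100, "breaker_open": 0.183}
accel_ev = {"fault": 0.100, "breaker_open": 0.185}

print(baseline_check(base_v, accel_v, base_ev, accel_ev, value_tol=0.005, time_tol_s=0.004))
print(baseline_check(base_v, accel_v, base_ev, accel_ev, value_tol=0.005, time_tol_s=0.001))
```

The same 2 ms breaker offset passes a voltage-margin study and fails a stricter timing study, which is the point of tying tolerances to the question being asked.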

Interconnection queue screening is a demanding test of validity. Interconnection studies often need thousands of runs across operating points, faults, and switching states. Interconnection queues in the United States had nearly 2,600 GW of generation and storage capacity seeking grid connection at the end of 2023. Acceleration helps only if every run follows the same run recipe, with the same initialization rules and checks.
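A run recipe is easier to enforce when it is a frozen record with a fingerprint attached to every result, so a batch that mixes recipes is caught before anyone compares its outputs. The field names and values below are hypothetical; a real recipe would carry whatever your toolchain needs to reproduce a run.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

@dataclass(frozen=True)
class RunRecipe:
    """Everything that must be identical across runs in one screening batch.
    Field names are hypothetical; adapt them to your toolchain."""
    model_version: str
    speed_factor: float
    step_size_s: float
    solver: str
    init_rule: str
    checks: tuple

    def fingerprint(self) -> str:
        # Canonical JSON (sorted keys) so equal recipes always hash the same.
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]

def mixed_recipes(results):
    """True if results in one batch were produced with different recipes."""
    return len({r["recipe"] for r in results}) > 1

recipe = RunRecipe(
    model_version="feeder-model-v12",
    speed_factor=50.0,
    step_size_s=50e-6,
    solver="fixed-step trapezoidal",
    init_rule="start from converged load flow",
    checks=("baseline_compare", "timestamp_audit"),
)
tag = recipe.fingerprint()
batch = [{"case": f"op-{i}", "recipe": tag} for i in range(3)]
print(tag, mixed_recipes(batch))
```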

Validity also depends on what you measure. A voltage margin check can tolerate tiny phase shifts, while a misoperation check cannot. Time-scale separation keeps results defensible: keep fast dynamics that decide the outcome, then simplify the rest deliberately. Staged validation lets you scale acceleration with confidence instead of guesswork.
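Time-scale separation can be made explicit by listing each dynamic's dominant time constant and marking which ones decide the outcome. The sketch below is one simple heuristic, not a standard method: decisive dynamics stay detailed, dynamics at least `separation` times faster than every decisive one are averaged, dynamics at least that much slower are held constant, and anything in between stays detailed. All time constants shown are hypothetical.

```python
def plan_fidelity(time_constants_s, decisive, separation=10.0):
    """Sort model dynamics into fidelity choices by time-scale separation.

    time_constants_s: dominant time constant per dynamic, in seconds
    decisive: names of dynamics that decide the study outcome (always kept)
    separation: how many times faster/slower a dynamic must be to count as separated
    """
    fastest = min(time_constants_s[name] for name in decisive)
    slowest = max(time_constants_s[name] for name in decisive)
    plan = {}
    for name, tau in time_constants_s.items():
        if name in decisive:
            plan[name] = "keep detailed"
        elif tau * separation <= fastest:
            plan[name] = "average"        # much faster than anything decisive
        elif tau >= slowest * separation:
            plan[name] = "hold constant"  # much slower than anything decisive
        else:
            plan[name] = "keep detailed"  # not separable; simplifying would be guesswork
    return plan

# Hypothetical long-term voltage recovery study on a converter-heavy feeder.
taus = {
    "pwm_switching": 1e-4,
    "current_loop": 1e-3,
    "pll": 2e-2,
    "voltage_control": 0.5,
    "tap_changer": 30.0,
    "load_recovery": 60.0,
    "transformer_thermal": 1800.0,
}
for name, choice in plan_fidelity(taus, decisive={"voltage_control", "tap_changer"}).items():
    print(f"{name:20s} {choice}")
```

In this example the load recovery dynamic is not declared decisive, yet it still stays detailed because it sits too close to the tap changer's time scale to separate safely.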

Model fidelity and numerical limits that constrain acceleration ratios

Acceleration ratio is capped by stiffness and discrete events. Power electronics, saturating magnetics, and tight algebraic loops force small solver steps. Fixed-sample controls also limit how far you can speed up without altering behaviour. The model will not outrun its tightest time constant.
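A rough upper bound on the acceleration ratio follows from two numbers: the step needed to resolve the tightest time constant, and the wall-clock cost of one step. The speed factor is then at most the step size divided by the compute time per step. The sketch assumes 10 steps per tightest time constant and 5 µs of compute per step for both models; both figures are hypothetical, and in practice a detailed switching model usually costs more per step, which lowers its bound further.

```python
def max_speed_factor(tau_min_s, steps_per_tau, compute_per_step_s):
    """Rough upper bound on simulated seconds per wall-clock second.

    The step must resolve the tightest time constant (tau_min / steps_per_tau),
    and each step costs compute_per_step_s of wall-clock time on the target machine.
    """
    step_s = tau_min_s / steps_per_tau
    return step_s / compute_per_step_s

# Hypothetical figures: 10 steps per tightest time constant, 5 us of compute per step
# (held equal for both models only to isolate the effect of the step size).
averaged = max_speed_factor(tau_min_s=5e-3, steps_per_tau=10, compute_per_step_s=5e-6)
switching = max_speed_factor(tau_min_s=20e-6, steps_per_tau=10, compute_per_step_s=5e-6)
print(f"averaged model : ~{averaged:.0f}x real time")
print(f"switching model: ~{switching:.1f}x real time")  # below 1.0: slower than real time
```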

A converter-heavy feeder makes the trade-off concrete. A detailed electromagnetic transient model with switching devices will require microsecond-level steps, so speed gains will be modest. An averaged converter model will speed up long runs, but it will hide switching ripple and some harmonic effects. That choice works for long-duration voltage recovery, but it fails for filter tuning or resonance checks.

Numerical limits show up as unstable integration, solver overruns, or subtle timing drift. Tightening tolerances will restore stability but will slow the run. Coarsening steps will speed things up but can reorder events around faults and breaker operations. Writing down the smallest time scale you must preserve keeps acceleration realistic and repeatable.
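Event reordering under a coarser step can be checked directly: snap each event from a fine-step reference run to the step boundary where a fixed-step solver would first see it, then confirm that consecutive events still fall in strictly later steps. Events sharing a step lose their relative order. The timestamps below are hypothetical, and the "first boundary at or after the event" rule is an assumed simplification of how a fixed-step solver detects events.

```python
import math

def step_index(t_s, step_s):
    """First step boundary at or after an event time (small guard for float noise)."""
    return math.ceil(t_s / step_s - 1e-9)

def order_preserved(events, step_s):
    """True if every event still lands in a strictly later step than the one before it."""
    ordered = sorted(events, key=events.get)
    steps = [step_index(events[name], step_s) for name in ordered]
    return all(a < b for a, b in zip(steps, steps[1:]))

# Hypothetical timestamps (s) from a fine-step reference run.
EVENTS = {"fault": 0.1003, "relay_pickup": 0.1042, "breaker_open": 0.1833}

for step in (1e-3, 10e-3):
    print(f"step {step * 1e3:g} ms -> order preserved: {order_preserved(EVENTS, step)}")
```

At a 10 ms step the fault and the relay pickup land in the same step, so the model can no longer say which happened first.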

How real time simulation complements accelerated studies in grid workflows

Real time simulation adds a hard timing contract that accelerated runs do not provide. The simulation clock stays aligned with wall time, so sampling and I/O timing stay consistent. That makes it the right tool for closed-loop testing of protection and controls. Accelerated simulation then filters thousands of cases into a shortlist worth timing-locked validation.

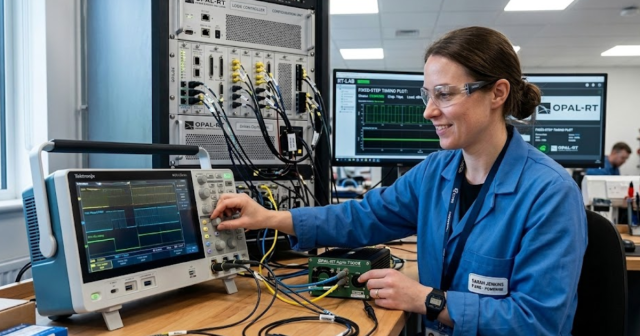

A staged workflow is easy to apply. Accelerated screening finds the operating points where voltage margins shrink, oscillations grow, or frequency recovery slows. Those cases then run in real time with the same control stack you plan to deploy, plus any relay or controller hardware that must be exercised. OPAL-RT fits naturally here as the real time execution platform when deterministic timing and repeatability are gating requirements. The goal is to prove interface behaviour, not to run every case in real time.
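The shortlist step can be as simple as flagging any screened case that crosses one of the three warning signs above. In this sketch the result fields and thresholds (2% voltage margin, 5% damping ratio, 10 s frequency recovery) are hypothetical, and ranking by voltage margin is an arbitrary choice; any ranking you can defend works.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ScreenResult:
    case_id: str
    voltage_margin_pu: float   # distance to the voltage limit; smaller is worse
    damping_ratio: float       # of the least-damped oscillation mode; smaller is worse
    freq_recovery_s: float     # time to return inside the frequency band; larger is worse

def shortlist(results, min_margin_pu=0.02, min_damping=0.05, max_recovery_s=10.0):
    """Cases from an accelerated screen that deserve timing-locked validation."""
    flagged = [
        r for r in results
        if r.voltage_margin_pu < min_margin_pu
        or r.damping_ratio < min_damping
        or r.freq_recovery_s > max_recovery_s
    ]
    # Tightest voltage margin first.
    return sorted(flagged, key=lambda r: r.voltage_margin_pu)

screen = [
    ScreenResult("op-001", 0.060, 0.12, 4.0),
    ScreenResult("op-002", 0.011, 0.09, 6.5),   # thin voltage margin
    ScreenResult("op-003", 0.045, 0.03, 5.0),   # poorly damped oscillation
    ScreenResult("op-004", 0.052, 0.10, 14.0),  # slow frequency recovery
]
print([r.case_id for r in shortlist(screen)])   # ['op-002', 'op-003', 'op-004']
```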

The handoff succeeds only when models stay consistent. Initial conditions, controller sample rates, and input scaling must match across both modes. Real time constraints can force model simplifications to meet a fixed step budget, so you must document what was modified. Timing-locked runs make interface assumptions explicit, which is exactly what protects grid studies from nasty surprises.
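A handoff check can compare the accelerated and real time configurations field by field and refuse a simplified real time model that lacks a change record. The configuration keys below are hypothetical; the comparison uses exact equality on purpose, because the two configurations should come from the same source rather than be retyped.

```python
MUST_MATCH = ("initial_conditions", "controller_sample_period_s", "input_scaling")

def handoff_issues(accelerated, real_time):
    """List inconsistencies that would make real time results incomparable."""
    issues = [
        f"{key}: accelerated={accelerated.get(key)!r} real_time={real_time.get(key)!r}"
        for key in MUST_MATCH
        if accelerated.get(key) != real_time.get(key)
    ]
    if (accelerated.get("model_variant") != real_time.get("model_variant")
            and not real_time.get("documented_changes")):
        issues.append("model simplified for the real time step budget without documented_changes")
    return issues

accel_cfg = {
    "initial_conditions": "loadflow_peak_case",
    "controller_sample_period_s": 1e-3,
    "input_scaling": {"pv_kw": 1.0, "load_kw": 1.0},
    "model_variant": "detailed",
}
rt_cfg = dict(accel_cfg, controller_sample_period_s=2e-3, model_variant="reduced")
for issue in handoff_issues(accel_cfg, rt_cfg):
    print(issue)
```

Here the check reports two problems: the controller sample period changed, and the model was reduced without a documented change list.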

Typical grid study errors caused by misuse of accelerated simulation

Misuse happens when speed becomes the goal and verification gets skipped. Timing drift, missing fast dynamics, and mismatched sampling will quietly change outcomes. Overconfident speed factors can hide numerical instability until an edge case appears. These issues look like modelling noise, but they are usually workflow mistakes.

Relay misoperation is a common trap. The accelerated run looks stable, but the relay logic is seeing samples at the wrong spacing because the time base was altered without re-checking sampling. Another failure shows up when a long-term voltage stability study is accelerated with an over-simplified load recovery model, so the system appears stable even though the baseline run collapses. Fixing these mistakes later burns time because you end up debugging results that were never trustworthy.

Five checks catch most problems early:

- Record the speed factor, step size, and solver settings for every run.

- Compare a small set of accelerated cases against a trusted baseline.

- Keep discrete controls aligned to the same sample periods in simulation time.

- Audit event timestamps around faults, switching, and protection actions.

- Run sensitivity checks so small step changes do not flip the outcome.
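Two of these checks are easy to automate. The sketch below tests whether a discrete control's sample period is a whole number of solver steps, and whether halving or doubling the step flips a case's pass/fail outcome. Because no real simulator is available here, `toy_case` is a hypothetical stand-in: forward Euler on a first-order decay, which goes numerically unstable once the step exceeds twice the time constant.

```python
def sample_period_aligned(period_s, step_s, rel_tol=1e-9):
    """A discrete control's period should be a whole number of solver steps."""
    ratio = period_s / step_s
    return ratio >= 1 and abs(ratio - round(ratio)) <= rel_tol * ratio

def outcome_stable(run_case, step_s, factors=(0.5, 1.0, 2.0)):
    """Rerun one case at halved and doubled steps; the outcome must not flip."""
    return len({run_case(step_s * f) for f in factors}) == 1

def toy_case(step_s, tau_s=1e-3, n_steps=100):
    """Hypothetical stand-in for a real run: forward Euler on dx/dt = -x / tau.
    Returns True if the response decays (the 'pass' outcome)."""
    x = 1.0
    for _ in range(n_steps):
        x += step_s * (-x / tau_s)
    return abs(x) < 1.0

print(sample_period_aligned(1e-3, 50e-6))   # True: 20 steps per sample
print(sample_period_aligned(1e-3, 300e-6))  # False: about 3.33 steps per sample
print(outcome_stable(toy_case, 0.5e-3))     # True: decays at 0.25, 0.5 and 1 ms
print(outcome_stable(toy_case, 1.5e-3))     # False: the 3 ms step goes unstable
```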

Clear checks also help teams work faster. Controls engineers trust results more when the run recipe is explicit. Protection engineers waste less time when timestamps are consistent. Managers accept shorter cycles when the validation gate is documented.


Choosing accelerated or real time simulation based on study objectives

Start with the outcome you must defend, then pick the timing mode that matches the risk. Accelerated simulation is best for coverage across many cases or long time windows. Real time simulation is best when interfaces, sampling, and deterministic timing decide the result. A staged workflow keeps you fast without losing trust.

A practical rule is to screen wide, then test deep. Acceleration identifies the few cases that stress margins, expose control interactions, or create protection edge cases. Real time then validates those cases with the timing contract and interfaces you will live with in a test setup. That sequence avoids hardware-heavy effort on cases that never mattered.

Discipline is the difference between speed and noise. Baseline comparisons and time-scale checks keep acceleration honest, while timing-locked tests keep interface assumptions honest. OPAL-RT supports this approach when you need a deterministic real time platform to validate the cases that survived accelerated screening. You will move faster and stay confident in the results.
