Why EV development programs depend on real-time simulators for inverter and motor control testing

03 / 01 / 2026

Key Takeaways

- Real-time, closed-loop simulation lets you validate motor control timing and stability before high-power hardware is on the line.

- Hardware-in-the-loop testing makes inverter protection and fault behaviour measurable, repeatable, and safe to stress across edge cases.

- Clear requirements for model fidelity and latency keep results credible and support a clean progression from SIL to HIL to dynamometer work.

EV programs live or die on control software quality, because a traction inverter has to react correctly to microsecond-scale electrical events while the vehicle asks for smooth torque. When electric cars reached about 18% of global new car sales in 2023, the tolerance for late test surprises got even smaller. Your teams still need aggressive schedules, but safety and warranty exposure don’t care about schedules. That’s why you need test methods that surface problems early, repeatably, and without burning hardware.

Real-time simulation and hardware-in-the-loop testing sit in the middle ground between pure software tests and high-power dyno work. They give you closed-loop behaviour, deterministic timing, and controlled fault injection, which are exactly the conditions that bench setups struggle to reproduce. The practical payoff is simple: you can validate an EV motor controller against realistic electrical dynamics before you commit to expensive prototypes and long test slots.

“Real-time simulators let you prove motor control and inverter logic before power hardware is at risk.”

Real-time simulation closes the loop for EV motor control

Real-time simulation runs a motor, inverter, and vehicle model at the same pace as your controller. Your EV motor controller sees sensor feedback and plant dynamics on a fixed time step. That makes control tuning meaningful because timing is part of the physics. It also makes tests repeatable across builds and teams.

With EV motors and controllers, the hard problem is rarely “does the algorithm work on paper.” The hard problems show up when sampling, PWM updates, and estimator timing interact with motor back-EMF and current ripple. If your controller expects feedback every 100 microseconds, a simulator that slips or buffers data will lead you to tune around a timing artefact. Real-time execution removes that ambiguity, so your PI gains, observers, and torque limits are tuned against behaviour you can trust.
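To make the timing point concrete, here is a minimal sketch of a fixed-step PI current loop driving a simple R-L load, run once with fresh feedback and once with feedback that arrives one step late. The plant values, gains, and the `peak_current` helper are illustrative assumptions, not figures from any real drive; only the 100 microsecond step comes from the text above.

```python
from collections import deque


def peak_current(delay_samples=0, steps=400, ts=100e-6):
    """Run a fixed-step PI current loop on an R-L load; return the peak current (A)."""
    r, l = 0.05, 200e-6      # hypothetical phase resistance (ohm) and inductance (H)
    kp, ki = 1.0, 250.0      # hypothetical PI gains, chosen so ki/kp equals r/l
    i_ref = 100.0            # current step command (A)
    current, integ, peak = 0.0, 0.0, 0.0
    # FIFO of feedback samples: the controller reads the oldest entry, so
    # delay_samples=0 means fresh feedback and 1 means one step late.
    feedback = deque([0.0] * (delay_samples + 1), maxlen=delay_samples + 1)
    for _ in range(steps):
        feedback.append(current)
        error = i_ref - feedback[0]
        integ += ki * error * ts
        voltage = kp * error + integ
        # Forward-Euler update of L di/dt = v - R i over one fixed step.
        current += ts * (voltage - r * current) / l
        peak = max(peak, current)
    return peak


on_time = peak_current(delay_samples=0)
one_late = peak_current(delay_samples=1)
print(f"peak with fresh feedback: {on_time:.1f} A")
print(f"peak with feedback one step late: {one_late:.1f} A")
```

With these assumed values, the loop with fresh feedback settles without overshooting the 100 A command, while the same gains with one step of buffering overshoot it. A tuner who only saw the second run would likely detune gains to compensate for a delay that the real system may not have.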

Closed-loop testing matters even more when you’re validating transitions: torque to regen, speed reversal, launch control, and traction events. Those transitions are where limiters, integrators, and sensor filtering can fight each other. When the plant response is computed deterministically, you can isolate what’s wrong: control logic, signal conditioning, or timing. That clarity is the main reason real-time simulation keeps showing up early in EV powertrain programs.

What risks bench testing misses in traction inverter development

Bench testing is useful for bring-up, but it hides the coupled behaviour that breaks traction inverters. A static load or basic motor spin rig can’t reproduce the fast torque and speed changes your control stack will face. That gap lets unstable tuning, protection timing issues, and measurement corner cases slip through. Fixing those issues late costs schedule and hardware.

Open-loop bench work also pushes you toward “safe” conditions that avoid damage, which means you spend less time testing the conditions that actually matter. Even a good dyno test cell has constraints: availability, safety procedures, and limits on repeating the same transients all day. Rework is the silent budget killer here, and software rework is a big part of it. A 2002 NIST study estimated that software defects cost the US economy $59.5 billion per year.

| Test method | What you can learn quickly | What still stays uncertain |
| --- | --- | --- |
| Open-loop power bench | Basic gating, sensing, and power stage bring-up becomes straightforward. | Closed-loop stability and protection timing under transient loads remain unclear. |
| Software-only simulation | Control logic and stateflow sequencing can be checked safely. | Timing, I/O behaviour, and target hardware limits are not exercised. |
| Real-time HIL | Control timing, sensor paths, and protection logic can be validated repeatedly. | Thermal behaviour and parasitics still need power hardware correlation. |
| Dynamometer testing | Torque, efficiency, and thermal limits can be measured under load. | Fault injection breadth and repeatability are limited by risk and time. |
| Vehicle testing | Integration and drivability can be assessed in complete operating conditions. | Root cause isolation becomes harder and test repetition is costly. |

For EV inverter testing, the pattern is consistent: bench work confirms the hardware is alive, then closed-loop validation must happen under controlled transients and faults. Real-time simulation fills that gap with repeatable plant behaviour, so you can move into dyno and vehicle testing with fewer unknowns.

Hardware-in-the-loop tests expose inverter fault and protection behaviour

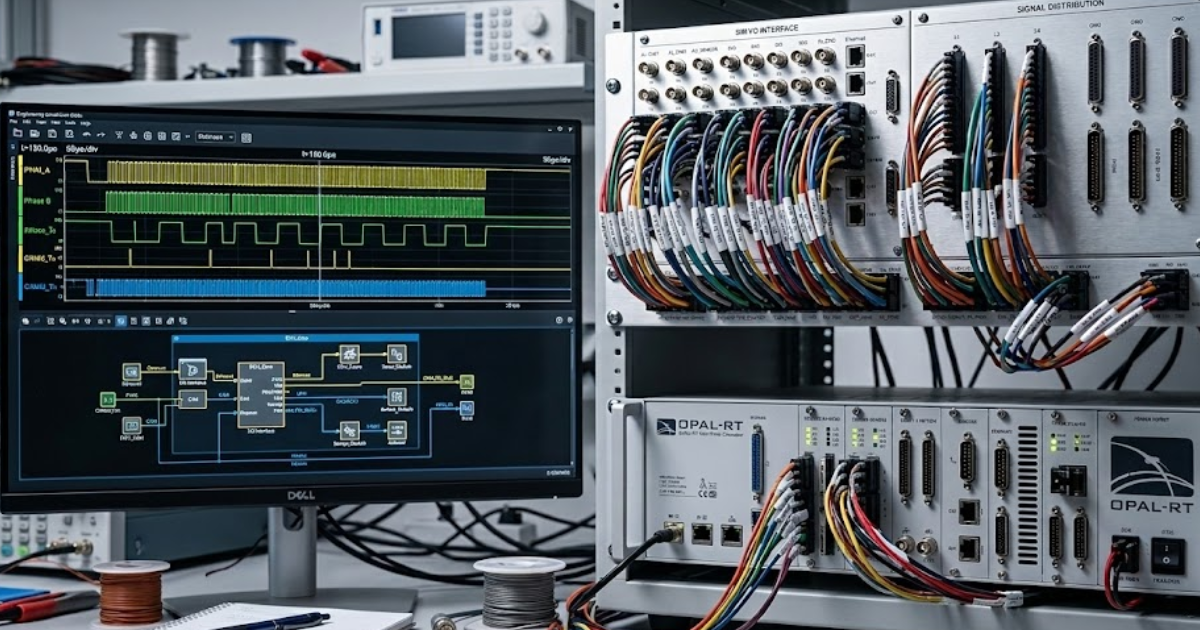

Hardware-in-the-loop connects your real controller hardware to a simulated powertrain that runs in real time. The key benefit is safe fault injection while the controller still “believes” it’s driving a motor and inverter. That lets you validate protection behaviour, not just steady-state control. It also tightens your confidence in edge-case timing.

EV traction inverter testing often fails on protection details: detection thresholds, blanking times, shutdown sequencing, and recovery rules. A practical case is validating a controller’s response to a simulated phase current sensor bias that ramps over a few milliseconds while the simulated motor load steps from cruise torque to regen. You can watch how quickly the controller derates, how it flags diagnostics, and whether the protection path trips cleanly without oscillation or unsafe gating. That’s hard to do safely with a physical motor and a high-energy DC bus.
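A scripted version of that kind of check might look like the sketch below. It injects a ramping bias on a simulated current measurement and reports how long a debounced overcurrent check takes to trip. Everything here is an assumption for illustration: the `trip_time_us` helper, the threshold, the debounce count, the ramp rate, and the pass/fail budget are hypothetical, and the true current is held constant rather than stepping from cruise torque to regen.

```python
def trip_time_us(bias_ramp_a_per_s, true_current_a=300.0, threshold_a=450.0,
                 debounce_samples=3, ts_us=100, max_steps=1000):
    """Return time from fault onset to trip in microseconds, or None if no trip."""
    over_count = 0
    for k in range(max_steps):
        # Injected sensor bias grows linearly from the moment the fault starts.
        bias_a = bias_ramp_a_per_s * (k * ts_us * 1e-6)
        measured_a = true_current_a + bias_a
        # Debounce: count consecutive samples over threshold, reset otherwise.
        over_count = over_count + 1 if abs(measured_a) > threshold_a else 0
        if over_count >= debounce_samples:
            return k * ts_us
    return None


TRIP_BUDGET_US = 5000   # hypothetical pass/fail limit agreed between teams
latency = trip_time_us(bias_ramp_a_per_s=40_000.0)
passed = latency is not None and latency <= TRIP_BUDGET_US
print(f"trip after {latency} us, pass={passed}")
```

Because the fault timing is deterministic, the same script can run against every firmware build and flag any change in trip latency.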

HIL also supports the conversations that matter between controls, power electronics, and functional safety teams. You can agree on measurable pass and fail criteria such as trip timing, torque limits, and reset conditions. Once those criteria are captured, regression becomes routine, so a firmware update that fixes drivability won’t silently break a protection feature.

Voltage testing targets switching, timing, and sensor edge cases

Voltage testing for motor control and drive systems is about more than “does it survive the bus voltage.” You need confidence that measurement, timing, and protection paths behave correctly during fast transients. Real-time simulation helps because you can apply controlled waveforms and disturbances repeatedly. That makes failures diagnosable instead of mysterious.

Switching edges stress your voltage and current measurement chains through dv/dt coupling, common-mode shifts, and sampling jitter. If your ADC sampling aligns poorly with PWM edges, you’ll see phantom noise that looks like torque ripple or overcurrent. Isolation monitoring also lives in this space, since it must ignore switching noise but still detect a real loss of isolation. Requirements are often numeric and unforgiving, such as the 100 Ω/V minimum isolation resistance for DC circuits referenced in UN Regulation No. 100.
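As a quick worked example of that requirement, the sketch below turns the 100 Ω/V figure into a minimum resistance for a given bus. It assumes the figure is applied to the working voltage of the DC bus; the function names and the 800 V value are illustrative only.

```python
def min_isolation_resistance_ohm(working_voltage_v, ohm_per_volt=100.0):
    """Minimum isolation resistance implied by an ohm-per-volt requirement."""
    return working_voltage_v * ohm_per_volt


def isolation_ok(measured_ohm, working_voltage_v):
    """True when a measured isolation resistance meets the minimum."""
    return measured_ohm >= min_isolation_resistance_ohm(working_voltage_v)


bus_v = 800.0  # hypothetical working voltage of the DC bus
print(f"minimum for {bus_v:.0f} V bus: {min_isolation_resistance_ohm(bus_v):.0f} ohm")
print("500 kohm measurement passes:", isolation_ok(500_000.0, bus_v))
print("50 kohm measurement passes:", isolation_ok(50_000.0, bus_v))
```

In a real-time test, the simulated isolation resistance can be swept across this limit while switching noise is present, to confirm the monitor trips on the fault and not on the noise.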

Real-time testing gives you a way to separate sensing problems from control problems. You can vary signal conditioning, sampling offsets, and filter constants while holding the plant constant. That matters when you’re trying to prove that a fault is a sensor chain limitation rather than an inverter hardware issue, because the corrective actions and owners are completely different.

Model fidelity and latency set credible motor controller results

Results only carry weight when the simulator model and timing match what the controller expects. Fidelity determines whether the simulated motor and inverter dynamics are close enough for tuning and validation. Latency and jitter determine whether your control loop is being tested honestly. If timing is wrong, you’ll fix problems that don’t exist and miss ones that do.

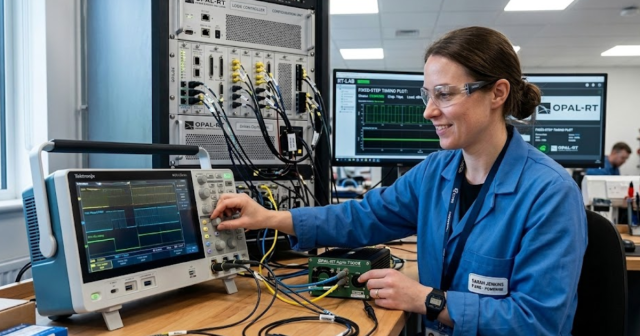

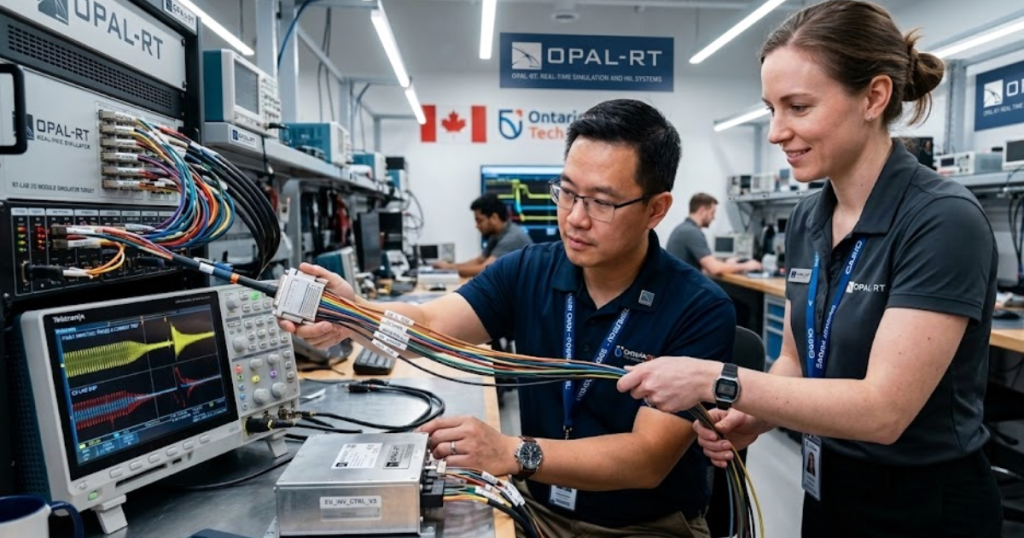

You don’t need a perfect physics model to get value, but you do need the right detail in the right place. Average-value inverter models work for high-level control, while switching-level models matter when you’re validating sampling, deadtime effects, and protection timing. The simulator also has to treat I/O deterministically, since encoder signals, resolver interfaces, and current sensor paths all have timing assumptions baked into firmware. Teams often standardize these checks on platforms such as OPAL-RT when they want deterministic execution plus flexible I/O integration without rebuilding the lab each time.

A credible setup meets these checks:

- Your simulation step size matches the controller sample time.

- Total I/O latency stays bounded and is measured, not assumed.

- Sensor models include offset, noise, and saturation limits.

- Inverter modelling matches the questions you’re trying to answer.

- Fault injection timing is deterministic and repeatable across runs.
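The "measured, not assumed" latency check in the list above can be scripted. The sketch below assumes you can log a timestamp when the simulator drives an output and another when the corresponding response is observed; the `latency_report` helper, the sample timestamps, and the 20 microsecond budget are hypothetical.

```python
from statistics import mean


def latency_report(sent_us, received_us, budget_us):
    """Summarise loop latency from paired timestamps (all in microseconds)."""
    latencies = [rx - tx for tx, rx in zip(sent_us, received_us)]
    worst = max(latencies)
    return {
        "mean_us": mean(latencies),
        "worst_us": worst,
        "jitter_us": worst - min(latencies),   # peak-to-peak spread
        "within_budget": worst <= budget_us,
    }


# Hypothetical log: stimulus sent every 100 us, response seen shortly after.
sent = [0, 100, 200, 300]
received = [12, 111, 215, 312]
report = latency_report(sent, received, budget_us=20)
print(report)
```

Judging the result on the worst case rather than the mean matches the requirement that latency stays bounded, since a single late sample is enough to distort a protection timing test.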

When you treat fidelity and latency as first-class requirements, test results become portable. Controls engineers can trust that a tuning change will behave similarly on the dyno, and power electronics engineers can trust that a protection change won’t be masked by simulator artefacts.

How teams scale from SIL to HIL to dynamometer

Software-only tests are best for algorithm logic and fast iteration. HIL is the step that proves timing, I/O behaviour, and fault responses while risk stays low. Dyno testing is where you validate power, thermal limits, and efficiency under load. A disciplined sequence keeps each test stage focused on what it can prove.

Scaling works when you treat each stage as a filter, not as a replacement for the next one. SIL should lock down control intent and edge conditions in a repeatable test suite. HIL should lock down the embedded reality: task scheduling, sensor paths, protection sequencing, and regression coverage after every firmware change. Dyno time should be protected for questions only hardware can answer, such as thermal derating behaviour, acoustic noise, and efficiency mapping.

The strongest programs make one judgement call early and stick to it: they refuse to use high-power testing to discover basic control and protection mistakes. That choice reduces risk, cuts rework loops, and makes EV inverter testing more predictable for everyone booking lab time. OPAL-RT fits cleanly into that workflow when you need a real-time simulator that can run closed-loop plant models with deterministic I/O, so your team can arrive at the dyno with fewer unknowns and clearer pass and fail criteria.
