When to add FPGA acceleration to an existing real time simulator

Simulation

03 / 04 / 2026

Key Takeaways

- Add FPGA acceleration only after timing data shows a deterministic bottleneck that blocks your required time step, end-to-end latency, or jitter limits.

- Target FPGA work at the smallest high-rate slice you cannot schedule reliably on CPUs, especially tight I/O servicing and repeatable low-latency feedback paths.

- Control cost and risk with a fixed interface contract, a CPU reference for verification, and acceptance tests that prove both numeric match and stable closed-loop timing.

Add FPGA acceleration when your real-time simulator cannot hit deterministic deadlines at the fidelity you need.

When timing slips, you stop testing control quality and start testing scheduling luck. That gap shows up as unstable closed-loop behaviour, false failures, and workarounds like decimation that hide the very problems HIL is meant to expose. Software defects cost the U.S. economy an estimated $59.5 billion each year, which is a reminder that late discovery is expensive even before you add hardware damage risk and lab downtime.

“The practical stance is simple: FPGA work is justified when it removes a specific, measured bottleneck that blocks required time step, latency, or I/O determinism.”

If you cannot name the bottleneck and prove it with timing data, you’re not ready to upgrade. If you can, an FPGA is often the most direct path to stable, repeatable HIL results without downgrading the model.

Signs your CPU-based HIL model is missing deadlines

CPU-based HIL is out of runway when the simulator misses its fixed time step, shows timing jitter that bleeds into I/O, or forces model simplifications that change control outcomes. You’ll see overruns, sporadic solver slowdowns, and I/O timestamps that stop lining up with the intended sample clock. Those symptoms mean determinism is already compromised.

Start with measurement, not gut feel. Your first check is the overrun counter and the worst-case execution time for the model plus I/O servicing. If the worst case gets close to the step size, the lab will feel “fine” until a rare scheduling spike knocks the loop off balance. That’s also when engineers start adding buffer delays, lowering sample rates, or turning off parts of the plant, and those fixes quietly change what you are validating.
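Here is a minimal sketch of that first check. It assumes you can export per-step execution times (model plus I/O servicing) from your simulator's timing logs. The function and field names are hypothetical and are not an OPAL-RT API.

```python
from dataclasses import dataclass


@dataclass
class StepTimingReport:
    step_us: float     # fixed time step
    wcet_us: float     # worst observed execution time (model + I/O)
    mean_us: float     # average execution time, shown only for contrast
    overruns: int      # steps that did not finish inside step_us
    margin_pct: float  # headroom left at the worst case, as % of the step


def summarize_step_times(exec_times_us: list[float], step_us: float) -> StepTimingReport:
    """Summarize per-step execution times against a fixed time step (hypothetical helper)."""
    if not exec_times_us:
        raise ValueError("need at least one timing sample")
    wcet = max(exec_times_us)
    mean = sum(exec_times_us) / len(exec_times_us)
    overruns = sum(1 for t in exec_times_us if t > step_us)
    margin = 100.0 * (step_us - wcet) / step_us  # negative once the worst case overruns
    return StepTimingReport(step_us, wcet, mean, overruns, margin)
```

Take samples of `[31.0, 32.5, 30.8, 48.9, 31.2]` microseconds at a 50 microsecond step. The mean is about 35 microseconds, which looks comfortable, and there are no overruns yet. The worst case, though, leaves only 2.2% margin. That is the "feels fine" state described above.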

Also watch for problems that only show up under closed-loop stress. A model that runs without hardware connected can still fail once interrupts, device drivers, and high-rate I/O join the loop. When you need deterministic response, the question is not average CPU load. The question is worst-case latency and jitter at the exact points where the controller exchanges data with the plant.
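Jitter at an exchange point can be measured directly from the timestamps of successive I/O updates. This sketch assumes you can log those timestamps in microseconds on the simulation clock. The helper name is hypothetical.

```python
from statistics import mean


def io_jitter(timestamps_us: list[float], nominal_period_us: float) -> dict[str, float]:
    """Jitter of I/O update instants relative to the intended sample clock."""
    if len(timestamps_us) < 2:
        raise ValueError("need at least two timestamps")
    # Actual spacing between consecutive updates.
    periods = [b - a for a, b in zip(timestamps_us, timestamps_us[1:])]
    deviations = [p - nominal_period_us for p in periods]
    return {
        "mean_period_us": mean(periods),                       # what average load hides behind
        "max_abs_jitter_us": max(abs(d) for d in deviations),  # worst single-cycle error
        "peak_to_peak_us": max(periods) - min(periods),        # spread the controller sees
    }
```

For timestamps `[0, 50, 100, 162, 200, 250]` on a 50 microsecond clock, the mean period is exactly 50 microseconds. One update still arrives 12 microseconds late, and the period swings by 24 microseconds from cycle to cycle. An average-based view would miss both.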

Workloads that benefit most from FPGA acceleration in simulation

FPGA acceleration pays off when the bottleneck is parallel, time-critical work that a CPU cannot schedule deterministically at your target rate. That includes tight I/O servicing, sub-cycle timestamping, and math that can be executed as parallel pipelines. If the work repeats every step and must finish on time, an FPGA is a strong fit.

Single-thread CPU performance improved only 2.9% per year from 2011 to 2018, so waiting for “next year’s processor” will not rescue an overloaded fixed-step model. That matters for HIL because your toughest cases are not just heavy compute. They are heavy compute that must complete inside a hard deadline every cycle, with the same latency each time.
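The arithmetic behind that claim is easy to check. At 2.9% per year, seven years compound to roughly 1.029^7 ≈ 1.22, or about 22% in total. The hypothetical helper below estimates how many years of that trend a model would need before its worst case fits the step. It assumes the whole workload scales with single-thread speed, which is optimistic for I/O- and interrupt-bound loops.

```python
import math


def years_to_close_gap(wcet_us: float, step_us: float, annual_gain: float = 0.029) -> float:
    """Years of compounded single-thread speedup needed before wcet_us fits inside step_us.

    Assumes the entire step scales with single-thread performance (optimistic).
    """
    if wcet_us <= step_us:
        return 0.0
    # Solve (1 + g)^n = wcet / step for n.
    return math.log(wcet_us / step_us) / math.log(1.0 + annual_gain)
```

A model whose worst case is 75 microseconds against a 50 microsecond step would need roughly 14 years of that trend.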

A concrete case is an EV traction inverter HIL bench where you need to ingest multiple PWM signals, compute switching-related plant behaviour at time steps of a few microseconds, and return current and voltage feedback fast enough to keep a high-bandwidth controller stable. A CPU can solve much of the plant, but the PWM capture, deadtime handling, and ultra-low-latency feedback path often become the rate limiter. FPGA logic can take over that deterministic slice while the CPU keeps the slower dynamics and supervisory logic intact.
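PWM capture resolution directly bounds duty-cycle error, which is one reason edge timing becomes the rate limiter. The sketch below assumes a 10 kHz PWM carrier (100,000 ns period). It compares edges registered only once per 1 microsecond step with edges registered on a 10 ns grid, which is roughly what capture logic clocked at 100 MHz would give. All numbers are illustrative. Times are integer nanoseconds so that floating-point rounding cannot shift an edge across a tick boundary.

```python
def captured_duty(rise_ns: int, fall_ns: int, period_ns: int, tick_ns: int) -> float:
    """Duty cycle seen by a capture path that registers each edge on the next tick."""
    # Ceiling division: an edge is only seen at the first tick at or after it occurs.
    rise_q = -(-rise_ns // tick_ns) * tick_ns
    fall_q = -(-fall_ns // tick_ns) * tick_ns
    return (fall_q - rise_q) / period_ns


# True edges: duty = (49_678 - 12_345) / 100_000 = 0.37333
coarse = captured_duty(12_345, 49_678, 100_000, tick_ns=1_000)  # once per 1 us step
fine = captured_duty(12_345, 49_678, 100_000, tick_ns=10)       # 10 ns capture grid
```

The 1 microsecond grid reports 0.37, an error of about 0.33 percentage points. The 10 ns grid reports 0.3733. In general the error can reach nearly one tick divided by the period: about 1% versus 0.01% in this example.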

Latency, I/O, and jitter targets that justify FPGA

FPGA work is justified when your latency budget is tighter than what a CPU and its I/O stack can guarantee, even if average performance looks fine. The clearest trigger is when you can’t meet the required fixed time step with margin, or when jitter changes the effective sample time seen by the controller. That turns repeatable tests into inconsistent ones.

Set targets using the control loop you are validating, not a generic “fast is good” goal. If a controller expects feedback every 50 microseconds, consistent end-to-end latency matters as much as raw step size. Jitter that pushes a small fraction of cycles late can be worse than a slightly slower but stable loop, because it injects timing noise into the control algorithm and can trip protections.
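A small check makes the "stable beats fast" point measurable. This hypothetical helper assumes you log end-to-end latency per control cycle, from input sample to feedback update. It reports the fraction of cycles that exceed the budget and the latency spread the controller sees.

```python
def latency_check(latencies_us: list[float], budget_us: float) -> tuple[float, float]:
    """Return (fraction of cycles over budget, max - min latency) for one test run."""
    if not latencies_us:
        raise ValueError("need at least one latency sample")
    late = sum(1 for x in latencies_us if x > budget_us)
    spread = max(latencies_us) - min(latencies_us)
    return late / len(latencies_us), spread


stable = [40.0] * 100              # always 40 us
bursty = [30.0] * 98 + [60.0] * 2  # usually faster, occasionally 60 us
```

Against a 50 microsecond budget, the stable loop returns `(0.0, 0.0)`. The bursty loop has the lower average latency (30.6 microseconds) but returns `(0.02, 30.0)`: 2% of cycles arrive late, and the effective sample time swings by 30 microseconds.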

| What you measure during runs | What it usually means | What to change first |
|---|---|---|
| Worst-case execution time sits near the fixed time step | Hard deadlines will be missed under stress conditions | Profile the model, then offload the deterministic hot path |
| Overruns happen in bursts, not continuously | Scheduling and I/O servicing jitter is dominating behaviour | Move time-critical I/O handling into FPGA logic |
| I/O shows variable latency relative to the simulation clock | The controller is seeing an inconsistent sample time | Define an end-to-end latency budget and enforce it in hardware |
| Model fidelity must be reduced to keep real time | Validation scope is being silently narrowed | Partition fast switching or high-rate blocks onto FPGA |
| Adding channels breaks timing even when compute seems low | I/O bandwidth and interrupt load are the limiting factors | Use parallel I/O paths and deterministic scheduling on FPGA |
| Results vary run to run with the same inputs | Non-determinism is leaking into the closed loop | Lock timing sources, then reduce jitter with hardware execution |

| Results vary run to run with the same inputs | Non-determinism is leaking into the closed loop | Lock timing sources, then reduce jitter with hardware execution |

How to estimate effort, cost, and risk before upgrading


Effort and cost depend less on FPGA hardware and more on how cleanly you can isolate what must be deterministic. A good estimate starts with a timing budget, a clear partition boundary, and a verification plan that proves the FPGA path matches the CPU model where it should. Risk climbs when requirements are vague or when the FPGA scope keeps expanding.

Collect a small set of facts before you commit engineering time. These inputs keep you honest about what you are fixing and what you are simply moving around.

- Your required fixed time step and the minimum stable latency budget

- The measured worst-case execution time and overrun frequency under load

- The I/O count, update rates, and timestamp accuracy you must hold

- The subset of model equations that must run every cycle without jitter

- The validation method that will catch numerical and timing mismatches
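To keep that list honest, you can record it as a structured brief. You then hold off on committing engineering time until every field is filled in and the measurements show a real problem. The field names and the "bottleneck proven" rule below are assumptions for illustration.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class UpgradeBrief:
    step_us: Optional[float] = None
    latency_budget_us: Optional[float] = None
    measured_wcet_us: Optional[float] = None
    overruns_per_hour: Optional[float] = None
    io_channels: Optional[int] = None
    io_update_rate_hz: Optional[float] = None
    timestamp_accuracy_ns: Optional[float] = None
    jitter_free_equations: Optional[list[str]] = None
    validation_method: Optional[str] = None

    def missing(self) -> list[str]:
        """Names of facts that have not been pinned down yet."""
        return [f.name for f in fields(self) if getattr(self, f.name) is None]

    def bottleneck_proven(self) -> bool:
        """Hypothetical rule: the worst case reaches the step, or overruns were observed."""
        if self.step_us is None or self.measured_wcet_us is None:
            return False
        return self.measured_wcet_us >= self.step_us or (self.overruns_per_hour or 0) > 0
```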

Budget for verification and maintenance, not just development. FPGA math often uses fixed-point or constrained precision, and the biggest cost surprises come from chasing small numeric differences that only show up in corner cases. A controlled scope, clear acceptance tests, and a rollback plan will keep the upgrade from turning into an open-ended rewrite.
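The corner cases that drive those surprises are easy to reproduce. This sketch assumes a signed 16-bit word with 12 fractional bits for a per-unit current. That gives a range of about ±8 pu and a resolution of 2^-12 ≈ 0.000244 pu. The format is an illustrative choice, not a recommendation.

```python
def to_fixed(x: float, frac_bits: int, word_bits: int = 16) -> int:
    """Round x to a signed fixed-point code, saturating at the word limits."""
    lo, hi = -(1 << (word_bits - 1)), (1 << (word_bits - 1)) - 1
    # Python's round() ties to even; the FPGA rounding mode must match whatever you choose.
    return max(lo, min(hi, round(x * (1 << frac_bits))))


def from_fixed(q: int, frac_bits: int) -> float:
    """Convert a fixed-point code back to its engineering value."""
    return q / (1 << frac_bits)


normal = to_fixed(1.2345, 12)  # ordinary operating point, in pu
fault = to_fixed(9.5, 12)      # fault transient beyond the representable range
```

In normal operation, 1.2345 pu encodes as 5057 and decodes to within half an LSB. A 9.5 pu fault transient saturates at 32767 (about 8.0 pu), an error of roughly 1.5 pu that only appears when a protection test pushes the plant out of range. The tie-breaking behaviour of `round()` is another silent difference, so pin down the rounding mode explicitly rather than assume it.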

Practical migration path from CPU solver to FPGA kernels

The most reliable upgrade path keeps your CPU model intact and moves only the timing-critical slice onto FPGA kernels. That approach protects your existing plant and test assets while giving you deterministic I/O and low-latency computation where it matters. Treat the FPGA as a co-processor with a strict contract, not as a replacement for your full solver.
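A strict contract is easiest to enforce when it is written down as data that both sides can check. The sketch below uses a hypothetical schema, not a vendor format. It records each signal's units, fixed-point scaling, and update rate, plus the kernel's latency budget. It then lists every disagreement between the CPU-side and FPGA-side declarations.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class SignalSpec:
    name: str
    units: str
    frac_bits: int         # fixed-point scaling agreed for the wire format
    update_rate_hz: float


@dataclass(frozen=True)
class KernelContract:
    inputs: tuple[SignalSpec, ...]
    outputs: tuple[SignalSpec, ...]
    max_latency_ns: int    # input arrival to output valid


def contract_mismatches(cpu: KernelContract, fpga: KernelContract) -> list[str]:
    """List every field where the CPU-side and FPGA-side declarations disagree."""
    problems = []
    if cpu.max_latency_ns != fpga.max_latency_ns:
        problems.append(f"max_latency_ns: {cpu.max_latency_ns} != {fpga.max_latency_ns}")
    for side in ("inputs", "outputs"):
        a, b = getattr(cpu, side), getattr(fpga, side)
        if len(a) != len(b):
            problems.append(f"{side}: {len(a)} signals != {len(b)} signals")
            continue
        for sa, sb in zip(a, b):
            other = asdict(sb)
            for key, va in asdict(sa).items():
                if va != other[key]:
                    problems.append(f"{side}.{sa.name}.{key}: {va!r} != {other[key]!r}")
    return problems
```

Running this check in the build, before any hardware test, catches scaling drift such as one side assuming 6 fractional bits on an output while the other assumes 8.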

Start with a partition that has a clean boundary: inputs arrive, a bounded set of computations run, outputs return, all within a known time budget. Freeze interfaces early, including scaling, units, and update rates, then build a bit-accurate test harness that compares FPGA outputs against the CPU reference across representative stimuli. Once the kernel matches, tighten timing, then re-run closed-loop tests to confirm stability improvements come from determinism, not from altered dynamics.
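Here is a minimal sketch of such a harness, assuming each kernel can be called as a Python function. `gain_fixed` is a hypothetical bit-accurate model of a simple FPGA gain kernel: inputs with 12 fractional bits, a coefficient with 15 fractional bits, and a truncating shift. It stands in for either that model or captured FPGA outputs. The tolerance is an assumption; derive yours from your own error budget.

```python
import random
from typing import Callable, Sequence


def compare_kernels(reference: Callable[[float], float],
                    candidate: Callable[[float], float],
                    stimuli: Sequence[float],
                    tol: float) -> tuple[bool, float, float]:
    """Run both kernels on every stimulus; return (passed, worst_error, worst_input)."""
    worst_err, worst_x = 0.0, stimuli[0]
    for x in stimuli:
        err = abs(reference(x) - candidate(x))
        if err > worst_err:
            worst_err, worst_x = err, x
    return worst_err <= tol, worst_err, worst_x


def gain_ref(x: float) -> float:
    """CPU reference: floating-point gain."""
    return 0.1 * x


COEFF_Q15 = round(0.1 * (1 << 15))  # 3277: the coefficient as stored in hardware


def gain_fixed(x: float) -> float:
    """Hypothetical bit-accurate model of the FPGA version of the same kernel."""
    q = round(x * (1 << 12))     # input quantized to 12 fractional bits
    y = (q * COEFF_Q15) >> 15    # arithmetic shift truncates toward -infinity
    return y / (1 << 12)


random.seed(0)
stimuli = [random.uniform(-7.9, 7.9) for _ in range(10_000)]
passed, worst_err, worst_x = compare_kernels(gain_ref, gain_fixed, stimuli, tol=2**-11)
```

Reporting the worst-case input, not just pass or fail, points you straight at the stimulus to replay when a mismatch appears.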

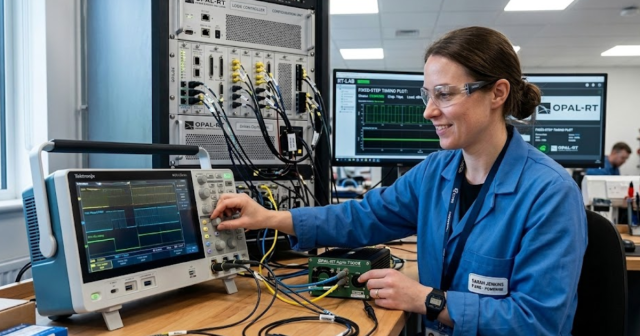

Execution is easier when your toolchain supports mixed CPU and FPGA workflows with consistent timing instrumentation. Teams using OPAL-RT platforms often keep the main model on the CPU and map specific high-rate I/O and compute kernels onto FPGA resources so the lab can hit deadlines without stripping fidelity out of the plant.

Common pitfalls when adding FPGA to an existing simulator

The most common failure mode is treating FPGA acceleration as a speed upgrade instead of a determinism upgrade. Moving large chunks of a model onto FPGA without a tight timing contract creates new integration work, new numeric behaviours, and new debug friction. Success comes from isolating the bottleneck you measured and solving only that.

Scope creep is the quiet killer. A small offload meant to fix I/O jitter can turn into a full reimplementation once teams start chasing performance “headroom” without checking if the controller actually needs it. Another frequent trap is skipping end-to-end timing validation, which leaves you with fast kernels but inconsistent loop latency once signals cross between CPU and FPGA domains.

Good engineering judgment looks boring here. Define the deadline, measure the miss, offload the smallest deterministic slice that removes the miss, then lock it down with repeatable tests and documented scaling. That discipline is also what we push for at OPAL-RT when teams ask about FPGA acceleration, because the win is not raw speed. The win is stable HIL behaviour you can trust run after run.
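The "smallest slice" rule can be made explicit. The sketch below assumes that CPU block times add up serially and that you can attach a rough relative FPGA effort to each candidate block. It searches every subset for the cheapest one that brings the CPU's worst case back under the step with margin. The block names, costs, and 20% margin are hypothetical. The search cost grows as 2**n, so keep candidate lists short.

```python
from itertools import combinations
from typing import Optional


def smallest_offload(blocks: dict[str, tuple[float, int]], step_us: float,
                     margin: float = 0.2, fixed_us: float = 0.0) -> Optional[tuple[str, ...]]:
    """Cheapest set of blocks to move to FPGA so the CPU worst case fits the step.

    blocks maps a block name to (cpu_wcet_us, fpga_cost); fixed_us is work that
    must stay on the CPU. Assumes block times add serially. Returns None if even
    offloading every candidate cannot meet the budget.
    """
    budget = step_us * (1.0 - margin)
    total = fixed_us + sum(t for t, _ in blocks.values())
    names = list(blocks)
    best, best_cost = None, None
    for k in range(len(names) + 1):
        for subset in combinations(names, k):
            remaining = total - sum(blocks[n][0] for n in subset)
            cost = sum(blocks[n][1] for n in subset)
            if remaining <= budget and (best_cost is None or cost < best_cost):
                best, best_cost = subset, cost
    return best


blocks = {
    "pwm_capture": (12.0, 3),
    "switch_model": (20.0, 8),
    "thermal": (6.0, 2),
    "supervisory": (10.0, 5),
}
```

With a 50 microsecond step, the answer is `('pwm_capture',)`. Offloading that block leaves 36 microseconds against a 40 microsecond budget, and no cheaper subset fits. A `None` result means the work that must stay on the CPU already exceeds the budget on its own.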
