Managing high-frequency switching in real-time EMT simulation


03 / 31 / 2026

Key Takeaways

- High-frequency switching pushes architecture choice ahead of solver preference, so fast converter sections belong on FPGA once timing error starts altering behaviour.

- CPU resources stay valuable for large electrical structures and slower subsystems, especially when partitioning and multi-rate execution are handled with care.

- Hybrid execution works best when you map each subsystem to the hardware that matches its timing burden, I/O needs, and validation goal.

Managing high-frequency switching in real-time EMT simulation comes down to a hard engineering choice: the fastest converter behaviour belongs on FPGA hardware, while the rest of the system belongs on CPU only when its time-step budget stays comfortable. That choice matters more now because renewable capacity additions grew 50% in 2023 to almost 510 GW, which means more converter-based equipment is moving into grids, drives, storage systems, and test benches.

You can still run large EMT models in real time, but only after you stop treating every subsystem as if it needs the same numerical treatment. Fast switching, dense converter counts, and closed-loop controller testing punish generic model structures. The Harvest case shows that clearly on pages 2 through 5, where high switch counts, fine timing needs, and mixed CPU and FPGA execution are all treated as practical limits rather than abstract modelling details.

Real-time simulation performance depends on computation architecture choices

Real-time EMT performance is set by architecture first and model detail second. You will miss deadlines when the solver, time step, and hardware target do not match the switching behaviour you are trying to reproduce.

You should treat architecture selection as a timing exercise, not a software preference. A CPU is excellent when event density stays moderate and partitioning remains clean. Once switch events stack across many submodules and dead-time handling matters, the execution platform becomes the main modelling parameter. Teams that ignore that usually spend weeks tuning a solver that was never a fit for the workload.
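To make the timing exercise concrete, here is a minimal sketch of a step-budget check. The function names, the 80% utilisation margin, and the sample compute times are illustrative assumptions, not figures from the case study.

```python
def utilisation(compute_time_us: float, time_step_us: float) -> float:
    """Fraction of the fixed time step consumed by computing one step."""
    if time_step_us <= 0:
        raise ValueError("time_step_us must be positive")
    return compute_time_us / time_step_us


def holds_real_time(compute_time_us: float, time_step_us: float,
                    max_utilisation: float = 0.8) -> bool:
    """True when the worst-case step computation fits inside the step
    with headroom left for I/O and jitter (the 0.8 margin is an assumption)."""
    return utilisation(compute_time_us, time_step_us) <= max_utilisation


# Hypothetical worst-case compute time of 12 us for one CPU task.
print(holds_real_time(compute_time_us=12.0, time_step_us=20.0))  # True
print(holds_real_time(compute_time_us=12.0, time_step_us=10.0))  # False
```

The same model that fits comfortably at a 20 µs step overruns at 10 µs, which is why halving the step to capture switching detail is an architecture question and not a solver setting.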

CPU-based simulation fits large system models with moderate switching dynamics

CPU-based EMT works best when you need breadth more than switching granularity. Large electrical networks, transformers, passive components, and converter sections with slower switching usually fit well on CPU solvers.

In the case study, the rectifier side did not require high-frequency switching, so a time step in the tens of microseconds was enough. The CPU solver still mattered because it decoupled more than twenty converters from a multi-winding transformer and kept the model inside real-time limits without adding another FPGA.

That is the right way to use CPU resources. You assign them to the parts of the model that are numerically large but not event-dense. You also gain flexibility for broader network studies, fault setup, and plant expansion. Trouble starts when you ask the CPU to reproduce every gate transition, dead-time interval, and phase-shifted switching event in a converter stack that clearly wants much finer resolution.

FPGA-based simulation supports nanosecond time steps and high switching frequency models

FPGA execution is the practical answer when switching detail is the model, not a side effect. It gives you deterministic timing and very small time steps that CPUs will not hold under real-time deadlines.

Page 4 of the case study states that the FPGA-based power electronics toolbox supports switching frequencies above 200 kHz and time steps down to the nanosecond level. That matters for fast converter legs, detailed PWM behaviour, and device interactions that disappear when you stretch the step size. A cascaded H-bridge inverter or a high-speed SiC converter is a good example, because edge timing shapes the electrical result.

Recent lab work reflects the same pressure from hardware. One NREL study notes that most high-power converters operate at switching frequencies of 10 kHz to 50 kHz, which already puts strong pressure on real-time solver timing before you add multi-level phase shifts or fault logic.
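A rough way to see why step size matters is to count how many fixed steps fit inside one switching period. The sketch below assumes gate edges snap to step boundaries with no sub-step interpolation or compensation, which is a simplification. The function names and the example time steps are hypothetical.

```python
def steps_per_period(switching_hz: float, time_step_s: float) -> float:
    """Number of fixed simulation steps inside one switching period."""
    if switching_hz <= 0 or time_step_s <= 0:
        raise ValueError("frequency and time step must be positive")
    return 1.0 / (switching_hz * time_step_s)


def duty_resolution(switching_hz: float, time_step_s: float) -> float:
    """Smallest duty-cycle increment when gate edges snap to step
    boundaries (assumes no sub-step interpolation or compensation)."""
    return 1.0 / steps_per_period(switching_hz, time_step_s)


# (label, switching frequency in Hz, time step in s); time steps are hypothetical.
cases = [
    ("50 kHz converter, 20 us CPU step", 50e3, 20e-6),
    ("50 kHz converter, 500 ns FPGA step", 50e3, 500e-9),
    ("200 kHz converter, 5 ns FPGA step", 200e3, 5e-9),
]
for label, f_sw, t_s in cases:
    n = steps_per_period(f_sw, t_s)
    print(f"{label}: {n:.0f} steps/period, "
          f"duty resolution {100 * duty_resolution(f_sw, t_s):.2f} %")
```

Under these assumptions a 20 µs step leaves a single step per 50 kHz period, so the PWM pattern cannot be represented at all, while a nanosecond-range step leaves hundreds of steps per period even at 200 kHz.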


Power electronics switching behaviour often determines when FPGA becomes necessary

Switching behaviour, not model size alone, tells you when FPGA becomes necessary. The main trigger is dense, repeated switching events whose timing error will alter control response, losses, harmonics, or protection logic.

One threshold example makes this concrete: each full-bridge submodule switched at only 500 Hz to 1000 Hz, yet phase shifting across multiple levels, combined with PWM dead-time, pushed the effective switching frequency per phase up to 10 kHz. That is exactly the kind of situation where a model looks modest on paper but becomes difficult in execution.
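The multiplication effect can be approximated with a first-order rule for evenly phase-shifted carriers: the per-phase effective frequency is roughly the per-cell frequency times the number of cells, doubled when each full bridge uses unipolar modulation. This rule, the function name, and the cell count of 10 are assumptions for illustration. The sketch does not model dead-time.

```python
def effective_switching_hz(cell_hz: float, cells_per_phase: int,
                           unipolar: bool = False) -> float:
    """First-order estimate of the per-phase effective switching frequency
    for evenly phase-shifted carriers. Unipolar full-bridge modulation
    doubles the edge rate seen at each cell output. Dead-time is ignored."""
    if cells_per_phase < 1:
        raise ValueError("cells_per_phase must be at least 1")
    factor = 2 if unipolar else 1
    return cell_hz * cells_per_phase * factor


# Hypothetical stack of 10 cells per phase.
print(effective_switching_hz(1000.0, 10))                 # 10000.0
print(effective_switching_hz(500.0, 10, unipolar=True))   # 10000.0
```

Either combination reaches 10 kHz per phase even though no single cell switches faster than 1 kHz, so the solver has to resolve the stacked event rate, not the per-cell rate.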

You should look for three warning signs. First, small gate-timing errors start to alter waveform shape. Second, multiple converter cells switch in a staggered pattern that multiplies event rate. Third, protection and control functions depend on reproducing those events without jitter. Once those signs appear, FPGA is no longer an optimisation choice. It becomes the only reliable way to keep timing honest.

Model partitioning strategies that combine CPU and FPGA execution effectively

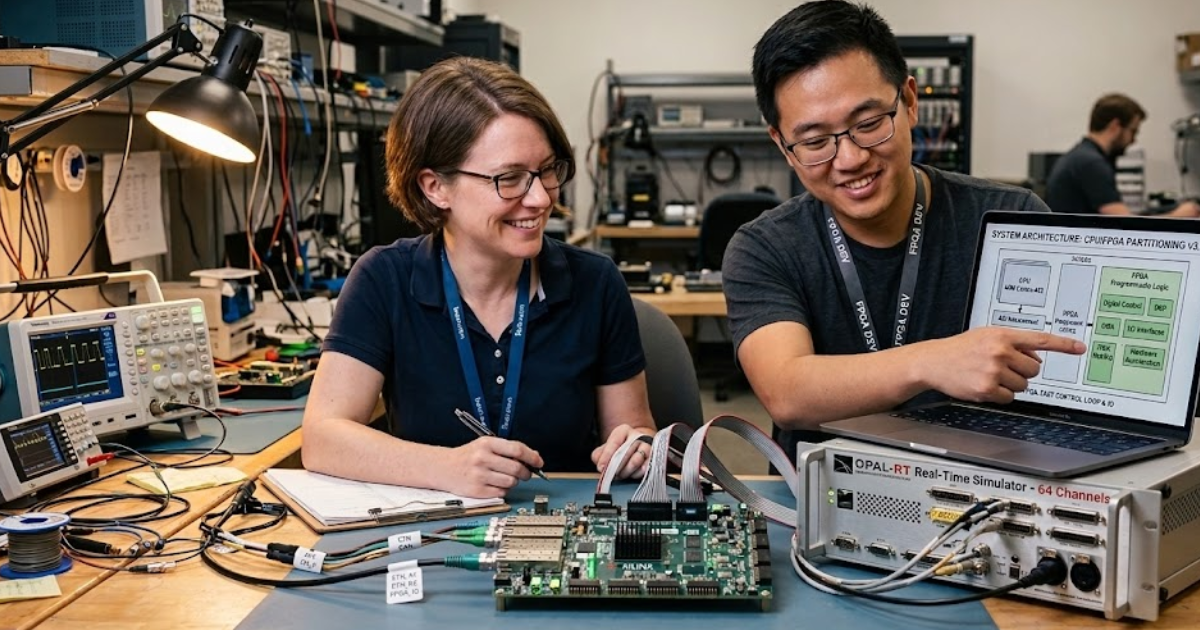

The best real-time EMT systems do not ask one processor type to do everything. They split the model so each processor handles the physics it can solve accurately within its own time budget.

A medium-voltage drive is a clear example. The inverter side, with its dense switching events and tight dead-time requirements, belongs on FPGA. The rectifier, transformer coupling, and slower network dynamics can stay on CPU when their numerical needs sit in the microsecond range. That is exactly the split shown across the study, where FPGA handles the converter-heavy section and CPU handles the larger but slower electrical structure.

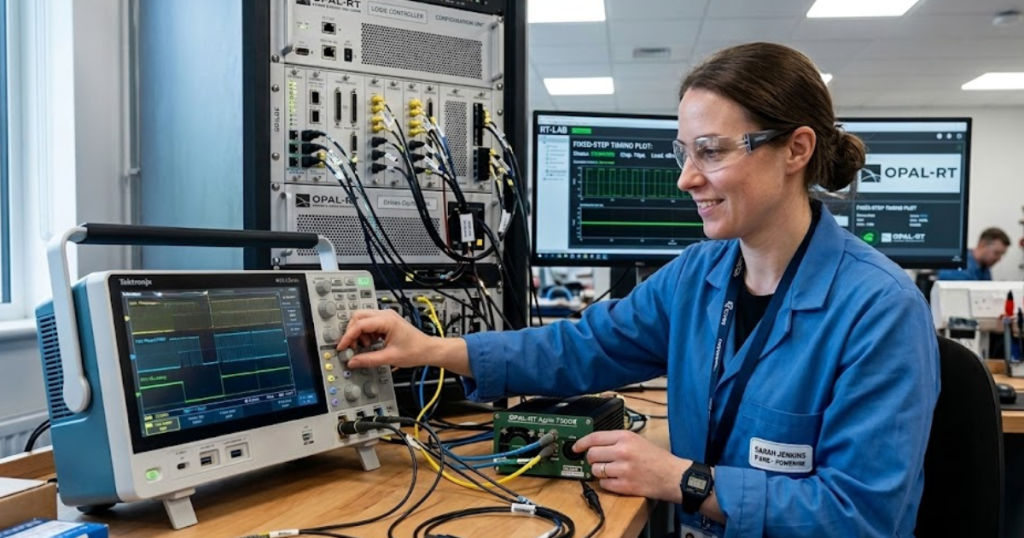

A platform such as OPAL-RT matters here because the workflow is not just about raw speed. You need clean I/O handling, synchronized execution, and a practical way to expand the model when the converter count rises. Bad partitioning creates more interface pain than solver relief, so the boundary between CPU and FPGA should follow switching behaviour, not organisational habit.

| Situation you are modelling | Execution choice that keeps real time credible | Main reason that choice holds up |
| --- | --- | --- |
| A large network with lines, transformers, and moderate converter activity | Keep most of the plant on CPU with a coarser fixed step | The switching detail is limited enough that the CPU can spend its budget on network scale and coupling |
| A converter leg with dense PWM events and tight dead-time sensitivity | Move that converter section to FPGA execution | The timing resolution must stay deterministic at a much smaller step than a CPU usually sustains |
| A multi-level drive with staggered switching across many cells | Split the fast inverter cells to FPGA and keep slower support blocks on CPU | Event density rises faster than model size, so mixed execution avoids wasting fine resolution on slow subsystems |
| A controller test bench with encoder and motor feedback in closed loop | Keep the fast plant interface on FPGA and place slower plant sections on CPU | Closed-loop timing errors show up quickly when sensor and switching signals are delayed or jittered |
| A cost-limited lab system that still needs broad plant coverage | Reserve FPGA resources for only the sections that truly need them | Selective placement keeps fidelity where it matters and avoids paying for fine timing across the whole model |
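The placement logic in the table can be sketched as a simple rule: a subsystem goes to FPGA when it needs a step finer than the CPU can sustain, or when it sits on a jitter-sensitive closed-loop interface. The `Subsystem` type, the 10 µs CPU threshold, and the example step sizes are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass

# Assumed smallest step a CPU task sustains in real time (illustrative only).
CPU_MIN_STEP_US = 10.0


@dataclass(frozen=True)
class Subsystem:
    name: str
    required_step_us: float       # largest step that still reproduces its behaviour
    closed_loop_io: bool = False  # sits on a jitter-sensitive controller interface


def assign_target(sub: Subsystem, cpu_min_step_us: float = CPU_MIN_STEP_US) -> str:
    """Return 'FPGA' for fast or jitter-sensitive sections, else 'CPU'."""
    if sub.required_step_us < cpu_min_step_us or sub.closed_loop_io:
        return "FPGA"
    return "CPU"


# Hypothetical medium-voltage drive split.
model = [
    Subsystem("inverter cells", required_step_us=0.5),
    Subsystem("rectifier and transformer", required_step_us=40.0),
    Subsystem("encoder interface", required_step_us=20.0, closed_loop_io=True),
]
for sub in model:
    print(f"{sub.name}: {assign_target(sub)}")
```

A rule this small is not a substitute for profiling, but it keeps the CPU/FPGA boundary tied to timing need and makes the reasoning behind each placement reviewable.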

Cost, scalability, and integration constraints that shape architecture decisions

Architecture choices are technical, but budget and lab constraints still shape them. The goal is not to put everything on FPGA. The goal is to spend fine timing only where it changes the test result.

The hardware details on page 3 show how this plays out in practice. The simulator described there supports 3-phase 8- to 13-cascaded full-bridge topologies directly, while higher-level topologies use optical fibre communication for switching signals and measurements. That means scalability is possible, but only if your I/O plan and synchronization scheme are designed from the start.

You should also count integration cost as part of model cost. Extra FPGA capacity, signal conditioning, encoder interfaces, and fibre links are worth it when they remove prototype risk or shorten control validation. They are not worth it for every rectifier, filter, or feeder model. Good architecture choices keep hardware expansion tied to timing need, not to a vague wish for maximum fidelity everywhere.

Common modelling mistakes that cause CPU simulations to miss real-time deadlines

CPU real-time EMT models usually fail because of a small set of recurring mistakes. Most of them come from forcing uniform detail across subsystems that have very different timing needs.

A practical review of the Harvest case points to five mistakes that show up often:

- Assigning the same fine time step to both fast converter legs and slow network sections

- Keeping heavily coupled transformer and converter blocks in one unpartitioned CPU task

- Modelling every switching event on CPU after phase shifting multiplies the event count

- Treating sensor and motor feedback as secondary even when the test is closed loop

- Expanding converter cell count without revisiting I/O timing and synchronization limits

A team building a cascaded drive model will hit those limits early. Page 5 shows why multi-rate execution and decoupling matter, while page 2 shows how quickly switch count and coupling rise. Missed deadlines are usually not a sign that EMT is impossible. They are a sign that the model architecture stayed generic after the switching problem stopped being generic.
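One common way to set up multi-rate execution is to make the slow step an integer multiple of the fast step, so the two tasks exchange data on aligned ticks. The sketch below checks that constraint and lists the exchange points. The integer-ratio requirement, the function names, and the step values are assumptions for illustration, not details taken from the case study.

```python
def rate_ratio(slow_step_ns: int, fast_step_ns: int) -> int:
    """Number of fast steps per slow step; requires an exact integer ratio."""
    if fast_step_ns <= 0 or slow_step_ns < fast_step_ns:
        raise ValueError("need 0 < fast_step_ns <= slow_step_ns")
    if slow_step_ns % fast_step_ns != 0:
        raise ValueError("slow step must be an integer multiple of fast step")
    return slow_step_ns // fast_step_ns


def exchange_ticks(slow_step_ns: int, fast_step_ns: int, n_slow_steps: int) -> list[int]:
    """Fast-tick indices at which the slow task exchanges data with the fast task."""
    ratio = rate_ratio(slow_step_ns, fast_step_ns)
    return [k * ratio for k in range(n_slow_steps)]


# Hypothetical split: 20 us CPU step, 500 ns FPGA step (integers in ns avoid float drift).
print(rate_ratio(20_000, 500))          # 40
print(exchange_ticks(20_000, 500, 3))   # [0, 40, 80]
```

Working in integer nanoseconds keeps the ratio exact, and a failed check is an early signal that the chosen step pair will make the CPU/FPGA interface harder to synchronize.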


Hybrid CPU and FPGA platforms provide scalable performance for complex HIL testing

Hybrid platforms give you the most useful answer for complex HIL work because they match computation method to electrical behaviour. That is why disciplined partitioning keeps winning over all-CPU ambition and all-FPGA excess.

The strongest proof is operational, not theoretical. Pages 5 and 6 show a setup where digital motor and sensor models replaced risky physical testing for control verification, fault checks, multi-motor testing, and issue reproduction. Those gains came from putting the fast switching work where it belonged, keeping the slower sections cost-aware, and treating timing limits as a design input from day one.

That is the standard you should hold for your own test bench. Clean hybrid execution keeps you from overbuilding the platform and from under-modelling the converter. OPAL-RT fits naturally into that closing judgment because the useful part is not the hardware alone. The useful part is a workflow that lets you place fast EMT switching on FPGA, keep broader plant sections on CPU, and get repeatable HIL results without guessing.
