Scaling from academic HIL setups to industrial validation platforms


03 / 23 / 2026

Key Takeaways

- Scale HIL credibility first through deterministic timing and verified I/O integrity, since compute upgrades alone won’t make results trustworthy.

- Grow capability in controlled steps that keep models and workflows stable, using modular compute, modular I/O, and clear acceptance checks after each change.

- Treat industrial validation as an evidence system with automated runs, version control, and traceability from requirement to test record.

You can scale a university HIL lab into industrial validation without starting over.

A common failure pattern is spending money on compute first, then discovering the missing piece was deterministic I/O, signal conditioning, or test automation. A 2002 NIST study estimated that inadequate software testing infrastructure cost the U.S. economy $59.5 billion per year. That same cost logic shows up in HIL when late test discoveries force redesigns, retesting, and schedule resets.

“The shift comes from treating your setup less like a demo bench and more like test infrastructure, with tight timing control, repeatable execution, and accountable results.”

The practical takeaway is simple: industrial-grade HIL is less about “more horsepower” and more about disciplined timing, interfaces, and evidence. You’ll get better outcomes when you define what “credible” means for your device under test, then scale in layers that protect your existing models and lab skills. You can still start with an entry-level FPGA simulator, as long as the path to tighter determinism and larger I/O stays open. That mindset turns an academic HIL system into a platform industry teams can trust.

What an entry-level FPGA real-time simulator provides

An entry-level FPGA real-time simulator gives you deterministic timing and hardware-linked I/O without the complexity of a full validation rack. It runs parts of a model on an FPGA so the step time stays consistent under load. It also provides low-latency I/O updates, which keep control loops stable. You should treat it as a timing reference point, not just a faster computer.

Look for three baseline capabilities. First, the simulator should support fixed-step execution with clear limits you can verify, including how it behaves under peak CPU and I/O activity. Second, it should offer direct access to common analogue and digital I/O types, with known update rates and latency. Third, it should support a workflow that lets students and researchers keep using familiar modelling tools while you enforce real-time constraints during execution.

The tradeoff at entry level is that you’ll usually get enough determinism for control development, but not enough I/O density, signal conditioning, or fault coverage for serious validation. That’s fine early on if you treat the platform as a building block. The mistake is assuming the first working closed loop is also a valid verification setup, since timing, scaling, and traceability demands jump sharply once external partners rely on your results.

Map academic HIL system gaps in timing fidelity and I/O

A gap analysis should start with timing fidelity and I/O integrity, since both determine if your simulated plant and the device under test stay in lockstep. Timing fidelity covers step size, jitter, and latency from computation to pin-level updates. I/O integrity covers ranges, resolution, isolation, grounding, and how signals behave when faults are injected. Industrial testing expects those characteristics to be measurable and stable, not “good enough most days.”
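One way to make step-time stability reportable is to log a timestamp at every simulation step and summarize how far each step strays from the nominal period. The sketch below assumes you can export those timestamps in microseconds from your own logging; the function name `step_timing_report`, the sample data, and the tolerance value are illustrative and not part of any vendor API.

```python
import statistics


def step_timing_report(timestamps_us, nominal_step_us, tolerance_us):
    """Summarize step-time stability from logged step timestamps (microseconds)."""
    if len(timestamps_us) < 2:
        raise ValueError("need at least two timestamps")
    # Measured period of each step: gap between consecutive timestamps.
    periods = [b - a for a, b in zip(timestamps_us, timestamps_us[1:])]
    # Signed deviation of each period from the nominal fixed step.
    errors = [p - nominal_step_us for p in periods]
    return {
        "mean_step_us": statistics.fmean(periods),
        "peak_jitter_us": max(abs(e) for e in errors),
        "rms_jitter_us": statistics.fmean([e * e for e in errors]) ** 0.5,
        "steps_out_of_tolerance": sum(1 for e in errors if abs(e) > tolerance_us),
    }


# Made-up timestamps for a nominal 100 us step with one late and one early step.
report = step_timing_report([0, 100, 200, 310, 400], nominal_step_us=100, tolerance_us=5)
print(report)
```

Running the same report under light and peak load gives you two comparable numbers instead of an impression that the model "felt slower."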

Build your gap map around what your test needs to prove, then work backward to platform capabilities. Control stability testing pushes you toward tighter, repeatable loop timing and deterministic I/O scheduling. Power electronics pushes you toward higher-rate switching behaviour and carefully managed analogue paths, including anti-alias filtering and clean reference voltages. Networked controllers push you toward protocol timing accuracy, timestamping, and realistic bus loading, since subtle scheduling errors can hide until integration.

| Checkpoint you can measure | What a typical university lab has | What industrial validation expects |
| --- | --- | --- |
| Step time stability under peak load | Works in light configurations, degrades with model growth | Fixed-step execution with bounded jitter you can report |
| I/O latency from compute to pin update | Acceptable for demos, rarely characterized | Documented latency with consistent timing across channels |
| Signal integrity on analogue paths | Basic DAQ wiring, limited isolation | Isolation, filtering, and grounding practices matched to DUT |
| Fault injection behaviour | Manual toggles and ad hoc scripts | Repeatable, logged faults with controlled timing and reset |
| Test repeatability across sessions | Dependent on who runs the lab and what changed | Versioned models, parameter sets, and automated run records |

This mapping step prevents a common misread: “timing issues” often show up as control instability, but the root cause can be I/O scaling errors, noisy references, or unsynchronised clocks. Once you can name the gap in measurable terms, you can buy or build only what closes it. That also keeps you from over-investing in compute when the limit is electrical interface quality.
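To separate an I/O scaling error from a true timing problem, you can command a few known levels on an analogue output, read them back with a trusted instrument, and fit a gain and offset. The sketch below is a plain least-squares line fit under that assumption; the function names and the default tolerances are hypothetical and should be replaced with limits that match your device under test.

```python
def fit_gain_offset(commanded, measured):
    """Least-squares fit of measured = gain * commanded + offset."""
    n = len(commanded)
    if n < 2 or n != len(measured):
        raise ValueError("need two or more paired samples")
    mean_x = sum(commanded) / n
    mean_y = sum(measured) / n
    sxx = sum((x - mean_x) ** 2 for x in commanded)
    if sxx == 0:
        raise ValueError("commanded levels must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(commanded, measured))
    gain = sxy / sxx
    offset = mean_y - gain * mean_x
    return gain, offset


def channel_within_tolerance(commanded, measured, gain_tol=0.01, offset_tol=0.02):
    """True when the fitted gain is near 1 and the fitted offset is near 0."""
    gain, offset = fit_gain_offset(commanded, measured)
    return abs(gain - 1.0) <= gain_tol and abs(offset) <= offset_tol


# Made-up readings: a healthy channel and one with a 2x scaling error.
levels = [-5.0, 0.0, 5.0]
print(channel_within_tolerance(levels, [-4.99, 0.01, 5.01]))
print(channel_within_tolerance(levels, [-10.0, 0.0, 10.0]))
```

A channel that fails this check will distort every closed-loop result that depends on it, no matter how fast the compute is.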

Choose upgrade steps that scale without breaking existing workflows

Scaling works best when you preserve what’s already producing value, then add capabilities in a sequence that reduces retesting. Start with timing determinism and I/O quality, then move to automation and traceability. Keep model interfaces stable so labs don’t rewrite every semester. Treat each upgrade as a controlled change with acceptance checks, not a one-time refit.

Use a small set of upgrade steps as a repeatable playbook, and tie each step to a measurable outcome you can validate in a day. This keeps the lab usable for teaching while raising the bar for partner-facing validation. It also makes budgeting easier, since each step has a clear “done” definition and an obvious next step if you need more capacity. The list below works well because it avoids buying hardware you can’t yet justify with test evidence.

- Characterize your current loop timing, jitter, and I/O latency with a repeatable test.

- Fix analogue and digital I/O quality first using proper ranges, isolation, and grounding.

- Add deterministic FPGA execution for the parts of the model that set timing limits.

- Standardize model packaging and parameters so runs are repeatable across users.

- Automate run control and logging so every result has a clear provenance trail.
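The first step in the list above can start with a simple loopback test: drive a step on an output, wire it back to an input, and count the samples between the commanded edge and the measured edge. The sketch below assumes both traces are recorded on the same sample clock; the names `first_crossing` and `loopback_latency_us` are illustrative, and the resolution of the result is limited to one sample period.

```python
def first_crossing(samples, threshold):
    """Index of the first sample at or above the threshold, or None if absent."""
    for index, value in enumerate(samples):
        if value >= threshold:
            return index
    return None


def loopback_latency_us(commanded, measured, threshold, sample_period_us):
    """Delay between a commanded step and its arrival on the looped-back input."""
    start = first_crossing(commanded, threshold)
    arrival = first_crossing(measured, threshold)
    if start is None or arrival is None:
        raise ValueError("step edge not found in one of the traces")
    if arrival < start:
        raise ValueError("measured edge precedes commanded edge; check wiring or logs")
    return (arrival - start) * sample_period_us


# Made-up traces sampled every 5 us: the edge arrives two samples late.
commanded = [0, 0, 1, 1, 1, 1]
measured = [0, 0, 0, 0, 1, 1]
print(loopback_latency_us(commanded, measured, threshold=0.5, sample_period_us=5))
```

Repeating the measurement on every channel shows whether latency is consistent across the I/O set, which is the property the gap table asks you to document.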

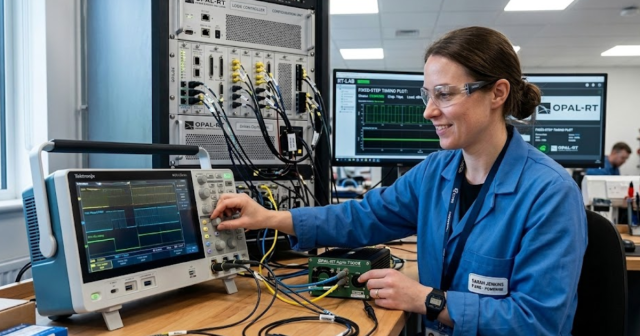

One practical execution pattern is to keep your existing models and lab scripts, then place them under stricter run control as you add deterministic compute and better I/O. Platforms from OPAL-RT are often used this way in university-to-industry transition labs because they support modular growth while staying centred on real-time execution rather than custom glue code. That said, the platform only pays off when your lab adopts the discipline to measure timing, lock configurations, and keep results reproducible.


Design a modular platform for scalable real-time simulation growth

A modular design lets you scale compute and I/O independently, which is what “scalable real-time simulation” should mean in practice. Compute scale covers model size, solver load, and partitioning across processors or FPGA resources. I/O scale covers channel count, signal types, and physical interface needs as devices mature. Modularity keeps you from rebuilding the lab each time a project adds one more converter, sensor set, or bus.

Start with a clear separation between three layers: compute, interface, and orchestration. Compute should handle real-time execution with predictable performance and clear timing limits. Interface should handle the messy physical layer details, including isolation, signal conditioning, and protocol interfaces, without forcing model rewrites. Orchestration should handle run control, parameterization, reset behaviour, and logging, so tests behave the same across users and time.

Tradeoffs show up in synchronization and integration work. Multi-node simulation, distributed I/O, and network timing all introduce clock alignment issues that a small lab can ignore until results start drifting across sessions. Budget time for system-level timing checks, not just model correctness. A modular platform succeeds when you can add capacity while preserving the same test intent and the same evidence trail.
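A system-level timing check can be as small as comparing how two nodes timestamp the same sync events. The sketch below assumes you have paired timestamps in seconds from a reference clock and from a second node; it uses a simple end-point estimate (a least-squares fit would be more robust to noisy samples), and the function names are hypothetical.

```python
def clock_drift_ppm(ref_times_s, node_times_s):
    """End-point estimate of a node clock's rate error versus a reference, in ppm."""
    if len(ref_times_s) < 2 or len(ref_times_s) != len(node_times_s):
        raise ValueError("need two or more paired timestamps")
    ref_span = ref_times_s[-1] - ref_times_s[0]
    node_span = node_times_s[-1] - node_times_s[0]
    if ref_span <= 0:
        raise ValueError("reference timestamps must increase")
    return (node_span / ref_span - 1.0) * 1e6


def projected_skew_s(drift_ppm, run_length_s):
    """Skew accumulated over a run if the drift is left uncorrected."""
    return drift_ppm * 1e-6 * run_length_s


# Made-up timestamps: the node clock gains 0.2 ms every 10 s.
drift = clock_drift_ppm([0.0, 10.0, 20.0], [0.0, 10.0002, 20.0004])
print(round(drift, 3), "ppm")
print(round(projected_skew_s(drift, 3600.0), 6), "s over one hour")
```

Comparing the projected skew with your simulation step size tells you quickly whether unsynchronised clocks could explain results that drift across sessions.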

Meet industrial validation needs with repeatable tests and traceability

Industrial validation requires you to prove not only that a test ran, but that it ran the same way every time and that the result links back to a requirement. Repeatability comes from controlled configuration, deterministic execution, and automated logging. Traceability comes from tying model versions, parameter sets, firmware versions, and test IDs to each run. Without that chain, your strongest technical result will still be hard to trust externally.

Test discipline matters because human memory and ad hoc lab notes do not scale. A 2016 Nature survey found that more than 70% of researchers had tried and failed to reproduce another scientist’s experiments. That same reproducibility problem shows up in HIL when a controller update, a parameter tweak, or a timing change quietly alters outcomes. Strong validation practices treat every run as a record, not an event.

A concrete workflow illustrates the jump from academic proof-of-concept to industrial readiness. Your lab validates an electric drive inverter controller against an overcurrent requirement by running a scripted sequence that sweeps torque commands, injects a short fault at a specific simulation time, and logs both I/O waveforms and controller state. The test becomes industrial-grade when the run automatically captures the exact model build, FPGA bitstream version, parameter file hash, firmware version, and pass/fail criteria, then produces the same result on another bench with the same configuration. That level of control turns a HIL setup into a defensible validation asset, even when staff rotate and projects change.
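The capture step in that workflow can be sketched as a small, immutable run record. The example below is a minimal illustration using only the Python standard library; the class `RunRecord`, its field names, and the identifiers such as `TC-001` and `REQ-OC-01` are hypothetical placeholders, not a schema from any particular test framework.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


def file_sha256(path):
    """SHA-256 of a file's bytes, read in chunks so large files are fine."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


@dataclass(frozen=True)
class RunRecord:
    test_id: str
    requirement_id: str
    model_build: str
    bitstream_version: str
    firmware_version: str
    parameter_sha256: str
    pass_criteria: str
    passed: bool

    def config_fingerprint(self):
        """Hash of everything except the outcome, for bench-to-bench comparison."""
        config = asdict(self)
        config.pop("passed")
        blob = json.dumps(config, sort_keys=True).encode("utf-8")
        return hashlib.sha256(blob).hexdigest()


# Hypothetical values; in practice parameter_sha256 would come from file_sha256(path).
bench_a = RunRecord(
    test_id="TC-001",
    requirement_id="REQ-OC-01",
    model_build="model-build-42",
    bitstream_version="bitstream-1.3",
    firmware_version="fw-2.0.1",
    parameter_sha256=hashlib.sha256(b"example parameters").hexdigest(),
    pass_criteria="phase current stays below the trip limit",
    passed=True,
)
bench_b = replace(bench_a, passed=False)
print(bench_a.config_fingerprint() == bench_b.config_fingerprint())
```

Two benches with matching fingerprints but different outcomes point to a hardware or timing difference, while mismatched fingerprints mean the runs were never comparable in the first place.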

Avoid common scaling mistakes when moving from lab to production

Most scaling failures come from treating industrial testing as “more of the same” rather than a different standard of evidence. The top mistakes are buying compute before fixing I/O integrity, relying on manual test execution, and letting timing drift go unmeasured. Another frequent miss is mixing teaching setups with partner validation without a clear separation of configurations. Fixing these issues later costs time twice, once in rework and again in lost confidence.

Guardrails help more than heroic debugging. Lock a small set of reference tests that must pass after any change, and require timing and I/O checks as part of that gate. Keep a clean boundary between experimental models and validated models, even if both live in the same lab. Plan for maintainability, since a lab that only one person can run will stall the moment schedules tighten.
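A reference gate of this kind can be expressed as a table of limits checked after every change. The sketch below assumes each metric is "lower is better" and that a missing measurement counts as a failure; the function name `evaluate_gate`, the metric names, and the limit values are illustrative only.

```python
def evaluate_gate(limits, measurements):
    """Compare measured metrics against upper limits; return (passed, failures)."""
    failures = []
    for name, limit in limits.items():
        if name not in measurements:
            # An unmeasured metric fails the gate rather than passing silently.
            failures.append(f"{name}: missing measurement")
        elif measurements[name] > limit:
            failures.append(f"{name}: {measurements[name]} exceeds limit {limit}")
    return (not failures, failures)


# Made-up limits and results for a post-change check.
limits = {"peak_jitter_us": 2.0, "io_latency_us": 10.0}
measurements = {"peak_jitter_us": 1.5, "io_latency_us": 12.0}
passed, failures = evaluate_gate(limits, measurements)
print(passed, failures)
```

Keeping the limits in version control alongside the models makes any relaxation of the gate a visible, reviewable change.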

The most useful mindset shift is to value boring consistency over clever one-offs. When your team can rerun the same test next month and get the same outcome, partners will trust the results and students will learn the right habits. OPAL-RT can support that kind of disciplined execution, but the deciding factor will always be how your lab defines acceptance criteria, controls change, and treats timing and I/O as measurable engineering artefacts. That’s what turns scaling into a durable capability instead of a cycle of rebuilds.
