Key Takeaways

- Modular PHIL benches reduce rework because interface changes stay inside defined hardware and software boundaries.

- Scale works best when you add compute, I/O, and power capacity along prepared module boundaries instead of replacing the whole bench.

- Modularity pays off only when partitioning, timing, and toolchain openness are handled with the same discipline as hardware selection.

Modular power hardware-in-the-loop systems outperform fixed test setups because they let you expand test scope without tearing down the bench.

Test scope rarely stays still. Electric car sales are set to pass 17 million in 2024, which puts more inverter, charger, battery, and grid interaction variants into validation plans than a fixed bench can absorb comfortably. You need a setup that can stretch without forcing a rebuild each time the programme adds a new interface or operating point.

Fixed PHIL benches lose efficiency when requirements shift

Fixed benches work well only when interfaces, power levels, and controller I/O stay stable. Once a programme adds a second inverter, a different sensor chain, or a new fault case, each small change ripples across wiring, rack space, amplifier sizing, and timing checks. That is where efficiency starts to slip.

A bench built for a single traction inverter at 400 V often starts with fixed cabling, fixed analogue I/O count, and a single protection scheme. Six months later, the team adds an 800 V variant and a second control mode. You’re recertifying interconnects, remapping channels, and retuning protection to keep the loop stable. The original setup still runs, but each new request forces work in parts of the bench that should have stayed untouched.

That rework is why fixed setups look neat in a frozen test plan and awkward in active development. Every hardwired dependency turns into a schedule issue. Your staff time shifts from testing devices and controllers to preserving the bench itself. A setup that was supposed to speed validation starts slowing it down.


Modular architectures isolate interface changes from bench rebuilds

Modular architecture contains change inside defined hardware and software boundaries. When power stages, I/O cards, measurement paths, or communication interfaces sit in separate modules, you can replace one functional block without disturbing the rest of the closed loop. That separation keeps bench updates local and manageable.

A charger team offers a simple illustration. The first phase uses a controller linked through one fieldbus and a limited sensor set. The next phase swaps in a newer controller with different timing and extra measurements. A modular bench lets you replace the interface module and update signal mapping while the solver partition, amplifier path, and protection logic stay intact. You won’t have to retest every connection in the rack just to adopt a new controller.

This matters because PHIL stability depends on clean boundaries. If you know which module owns power conversion, which owns measurement, and which owns controller exchange, you can trace the effect of a change quickly. You’ll spend less time guessing where latency, scaling errors, or protection mismatches came from.
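One way to picture that ownership is to let the interface module be the only place where controller-specific channel names appear. The sketch below is a minimal illustration under assumed names: `InterfaceModule`, `BENCH_SIGNALS`, the channel labels, and the 450 V limit are all hypothetical and do not come from any particular PHIL product.

```python
from dataclasses import dataclass
from typing import Dict

# Logical signal names owned by the bench (hypothetical examples).
BENCH_SIGNALS = {"dc_link_voltage", "phase_current_a"}


@dataclass(frozen=True)
class InterfaceModule:
    """Translates controller-specific channels into logical bench signals."""
    name: str
    channel_map: Dict[str, str]  # controller channel -> logical signal

    def __post_init__(self) -> None:
        # Reject maps that point at signals the bench does not define.
        unknown = set(self.channel_map.values()) - BENCH_SIGNALS
        if unknown:
            raise ValueError(f"{self.name}: unknown bench signals {sorted(unknown)}")

    def to_bench(self, raw: Dict[str, float]) -> Dict[str, float]:
        """Rename raw controller readings; channels outside the map are dropped."""
        return {self.channel_map[ch]: value
                for ch, value in raw.items() if ch in self.channel_map}


def overvoltage(bench: Dict[str, float], limit_v: float = 450.0) -> bool:
    """Protection check written only against logical signal names."""
    return bench["dc_link_voltage"] > limit_v


# Phase 1 and phase 2 controllers expose different channel names (assumed).
phase1 = InterfaceModule("fieldbus_v1", {"AI0": "dc_link_voltage", "AI1": "phase_current_a"})
phase2 = InterfaceModule("fieldbus_v2", {"vdc": "dc_link_voltage", "ia": "phase_current_a"})

reading1 = phase1.to_bench({"AI0": 400.0, "AI1": 12.5})
reading2 = phase2.to_bench({"vdc": 400.0, "ia": 12.5, "temp": 61.0})
```

Both readings reach `overvoltage` in the same shape, so the protection check does not change when the controller does. The extra `temp` channel is ignored until someone deliberately adds it to the bench signal set.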

Scale PHIL benches through module boundaries, not replacements

The most effective way to scale a PHIL bench is to add capacity along module boundaries instead of retiring the whole setup. Extra compute, extra I/O, and extra amplifier channels attach to a known partition, so timing behaviour stays visible as the bench grows. Scale works best when growth follows a structure you already trust.

A microgrid bench shows the difference clearly. The first version might model two distributed energy resources and a feeder. Later work adds storage, another converter, and more protection logic. A modular layout lets you add compute and interface capacity beside the existing partitions, which means the original bench keeps its role while the new scope slots into prepared boundaries. You’ll preserve validated pieces instead of replacing a healthy setup with a larger but less familiar one.

| Bench change | Fixed setup effect | Modular setup effect |
|---|---|---|
| Adding a second converter channel | Core rack wiring and protection need broad rework. | A matched power module extends capacity with limited retest. |
| Moving to a new controller protocol | Signal paths across the bench often need remapping. | An interface module absorbs the protocol swap cleanly. |
| Raising voltage or current range | Amplifier limits can force partial bench replacement. | Power capacity grows in the section that needs it. |
| Adding more fault scenarios | Protection logic changes can touch unrelated subsystems. | Fault handling stays inside the relevant module boundary. |
| Sharing the bench across teams | Reconfiguration time eats into available test hours. | Reusable partitions shorten turnover between test campaigns. |

Scale should feel additive, not disruptive. Once growth forces a full bench replacement, you lose calibration history, operator familiarity, and confidence in comparison results. Module boundaries keep those assets usable.
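Additive growth can be sketched as a bench inventory where a new module is appended and the validated entries are left alone. This is a rough bookkeeping model only: the `Module` fields, module names, and capacities are hypothetical, and a real bench would still need a loop-level check after any power-stage addition.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Module:
    name: str
    kind: str        # "compute", "io", or "power"
    capacity: int    # cores, I/O channels, or amplifier channels
    validated: bool  # True once the module has passed bench qualification


def add_module(bench: Tuple[Module, ...], new: Module) -> Tuple[Module, ...]:
    """Return a new bench with one more module; existing entries are untouched."""
    if any(m.name == new.name for m in bench):
        raise ValueError(f"module name already in use: {new.name}")
    return bench + (new,)


def capacity(bench: Tuple[Module, ...], kind: str) -> int:
    """Total capacity of one kind across the bench."""
    return sum(m.capacity for m in bench if m.kind == kind)


def retest_list(bench: Tuple[Module, ...]) -> List[str]:
    """Names of modules that still need qualification."""
    return [m.name for m in bench if not m.validated]


# Hypothetical first version of the bench, fully qualified.
bench_v1 = (
    Module("cpu_a", "compute", 4, True),
    Module("io_a", "io", 16, True),
    Module("amp_a", "power", 3, True),
)

# Growth: one more amplifier module, not yet qualified.
bench_v2 = add_module(bench_v1, Module("amp_b", "power", 3, False))
```

Here `retest_list(bench_v2)` names only the new amplifier module, while the first three entries of `bench_v2` are the same objects that were already qualified in `bench_v1`.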

Reusable interface modules cut setup time across test phases

Reusable interface modules shorten setup time because the bench keeps the same tested building blocks from early control work to later power validation. Once sensor scaling, protection logic, and I/O maps are packaged as reusable modules, you stop rebuilding routine pieces for each phase. That continuity removes a large share of avoidable lab work.

A motor drive programme often begins with controller verification at modest power, then shifts to higher power and tougher transients. If the measurement module, trip logic, and controller I/O map were built as reusable blocks, the team carries those pieces forward instead of recreating them. The bench setup becomes a controlled extension of prior work rather than a fresh integration task each time scope expands.
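As a minimal sketch of what "reusable block" can mean here, sensor scaling and trip logic can be packaged together and carried into the next phase with only the changed parameter replaced. The class names, the 50 A/V gain, and both trip limits are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass, replace
from typing import Tuple


@dataclass(frozen=True)
class SensorScaling:
    gain: float    # amperes per volt at the ADC input
    offset: float  # amperes at 0 V

    def to_amperes(self, adc_volts: float) -> float:
        return self.gain * adc_volts + self.offset


@dataclass(frozen=True)
class MeasurementModule:
    scaling: SensorScaling
    trip_limit_a: float

    def check(self, adc_volts: float) -> Tuple[float, bool]:
        """Return the scaled current and whether it exceeds the trip limit."""
        current = self.scaling.to_amperes(adc_volts)
        return current, abs(current) > self.trip_limit_a


# Early phase: controller verification at modest power (assumed values).
low_power = MeasurementModule(SensorScaling(gain=50.0, offset=0.0), trip_limit_a=20.0)

# Later phase: same scaling block, only the trip limit is raised.
high_power = replace(low_power, trip_limit_a=200.0)
```

Because `replace` copies every field except the one that changed, the later phase inherits the exact scaling object that was verified earlier. That keeps measurements comparable across phases.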

You’ll feel the gain in handoff quality as much as in speed. Engineers joining mid-programme can trust the known modules and focus on the new device under test. Repeatability improves because the routine bench pieces keep the same behaviour across phases. That makes comparison data easier to defend when questions show up late.

Open toolchain support keeps modular benches useful longer

Open toolchain support matters because modular hardware only stays useful if it accepts new models, controller code, and data flows without custom rewrites. A bench tied to a narrow software path becomes fixed in practice, even if the hardware looks modular on paper. Flexibility in the rack means little if the workflow is boxed in.

Consider a lab that starts with control validation, then adds plant variants from a partner, then folds in automated test scripts. If model import, scripting, and I/O mapping all rely on a single locked path, each new addition turns into translation work. A more open setup lets your team keep the bench stable while model sources, automation layers, and controller interfaces shift around it. That is where modularity stays useful instead of decorative.

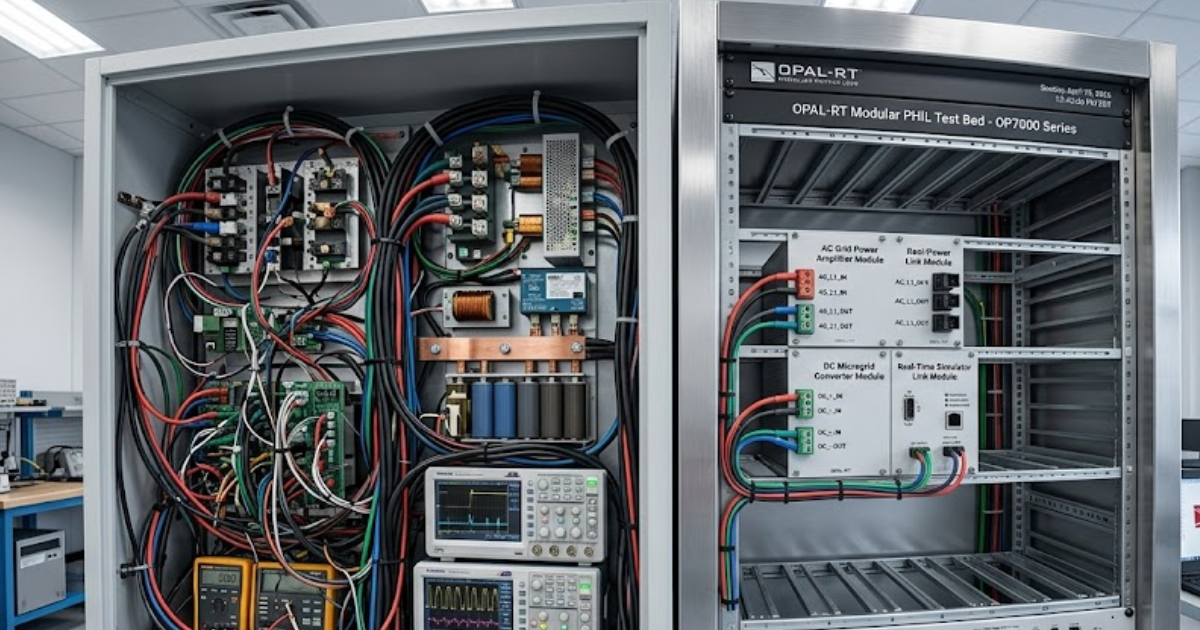

Teams working on OPAL-RT platforms often keep execution, I/O mapping, and model partitioning separate, which makes interface updates easier to handle without disturbing the whole bench. That approach matters because modular hardware isn’t enough on its own. The bench will stay useful only if software boundaries are as clean as the physical ones.

Fixed setups look cheaper until expansion costs appear

Fixed setups look cheaper at purchase time because they include only the channels and power range you need on day 1. Total cost rises later when new use cases force recabling, spare part duplication, downtime, and partial bench replacement that a modular plan would have absorbed with smaller updates. Initial price rarely captures that labour.

The modular versus non-modular question is familiar from desktop computer power supplies, where it mostly concerns cable management and a tidy case. That choice is small compared with a PHIL bench, where the same logic reaches into amplifiers, sensing, protection, and compute nodes. Once those layers are tied tightly to a fixed design, each upgrade carries validation effort that won’t show up in the original quote.

Procurement teams sometimes miss this because expansion cost arrives later and lands in several budgets. Bench downtime hits test schedules, requalification hits engineering time, and new interface hardware hits capital spending. You don’t feel the full bill on day 1, but you will feel it when scope expands and the bench resists the next step.
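The deferred-cost argument can be made concrete with a simple linear model: purchase price plus a fixed cost per expansion. The figures below are arbitrary cost units invented for illustration, not measured or quoted data, and a real comparison would need per-expansion costs that vary with scope.

```python
from typing import Optional


def cumulative_cost(purchase: float, per_expansion: float, expansions: int) -> float:
    """Purchase price plus a constant cost for each later expansion."""
    if expansions < 0:
        raise ValueError("expansions must be non-negative")
    return purchase + per_expansion * expansions


def break_even_expansions(fixed_purchase: float, fixed_step: float,
                          modular_purchase: float, modular_step: float) -> Optional[int]:
    """Smallest number of expansions at which the modular bench is strictly cheaper.

    Returns None if the modular bench never becomes cheaper under this model.
    """
    if fixed_step <= modular_step:
        return None if fixed_purchase <= modular_purchase else 0
    n = 0
    while (cumulative_cost(fixed_purchase, fixed_step, n)
           <= cumulative_cost(modular_purchase, modular_step, n)):
        n += 1
    return n


# Hypothetical figures in arbitrary cost units.
FIXED = (100.0, 40.0)    # cheaper to buy, expensive to expand
MODULAR = (130.0, 10.0)  # dearer to buy, cheap to expand

crossover = break_even_expansions(*FIXED, *MODULAR)
```

With these assumed inputs the two benches cost the same after one expansion, and the modular bench is cheaper from the second expansion onward. The useful part is the shape of the comparison, which lets a team plug in its own estimates for recabling, downtime, and requalification.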

Poor partitioning turns modular PHIL into a latency problem

Modular PHIL only works when the partition between modules respects timing and signal ownership. If the bench splits fast control paths across too many boundaries, latency rises, synchronization gets messy, and stability margins shrink. A modular layout with weak partitioning can become harder to trust than a simpler fixed bench.

A converter test bench can run well with separate compute and interface modules until a fast feedback path gets scattered across multiple links. Current feedback crosses one boundary, protection crosses another, and a controller handshake crosses a third. The architecture still looks tidy on paper, but loop timing becomes harder to reason about. You can’t recover good PHIL behaviour with modularity alone if the partition was wrong from the start.

Warning signs of a weak partition include:

- Fast feedback paths cross too many module boundaries.

- Protection logic sits far from the power interface.

- Signal ownership is unclear during fault testing.

- Time synchronization depends on manual fixes.

- Adding one interface shifts latency across the bench.

Good modular design puts the fastest interactions close to each other and moves slower functions outward. That is a design discipline, not a hardware feature. Once you treat partitioning as a timing problem first, modular PHIL keeps its benefits without creating new instability.
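Treating partitioning as a timing problem can start with a plain latency budget: add up the delay of every boundary a fast path crosses and compare the total with what the loop can tolerate. The sketch below assumes hypothetical boundary names, per-hop delays, and a 10 µs budget. Real values would come from measurement on the bench.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Hop:
    boundary: str      # which module boundary the signal crosses
    latency_us: float  # delay added by that crossing, in microseconds


def path_latency_us(hops: List[Hop]) -> float:
    """Total delay along one signal path."""
    return sum(h.latency_us for h in hops)


def fits_budget(hops: List[Hop], budget_us: float) -> bool:
    """True when the path delay stays within the allowed budget."""
    return path_latency_us(hops) <= budget_us


BUDGET_US = 10.0  # assumed loop budget, for illustration only

# A fast feedback path scattered across three boundaries.
scattered = [
    Hop("measurement -> compute", 4.0),
    Hop("compute -> protection", 6.0),
    Hop("protection -> amplifier", 5.0),
]

# The same path with the fast functions kept in one place.
compact = [Hop("measurement -> compute", 4.0)]
```

In this example the scattered path totals 15 µs and misses the assumed budget, while the compact path fits. Running the same check whenever an interface is added shows whether the new module has shifted latency on an existing path.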


Modular PHIL fits programmes with uncertain test scope

Modular PHIL fits uncertain programmes because uncertainty rarely comes from poor planning. Requirements mature, control code shifts, partner hardware arrives late, and safety teams ask for new edge cases. A bench built to accept that motion stays useful far longer than a bench built around a frozen assumption. That is the practical reason modular systems outperform fixed ones.

A lab supporting grid and storage work sees this often. Annual renewable capacity additions reached almost 510 GW in 2023, up nearly 50% from 2022. That pace adds converter variants, control updates, and grid interaction tests faster than any single frozen bench plan can cover. The teams that stay productive are the ones that treat expansion as normal bench use, not as a failure of planning.

That is why disciplined labs keep choosing modular benches for uncertain programmes. The goal isn’t abstract flexibility. It’s preserving calibration, staff time, and trust in test results while scope keeps moving. OPAL-RT fits naturally in that kind of setup because modular execution matters only when the bench remains coherent under pressure.
