Accelerating R&D cycles using hardware in the loop testing

Power Systems

04 / 24 / 2026

Key Takeaways

- Hardware in the loop testing cuts R&D time when it removes integration faults before scarce prototype hardware reaches the lab.

- The fastest HIL programs focus first on high-risk control paths, then raise model fidelity only where pass or fail behaviour depends on it.

- Cycle time gains last only when timing accuracy, repeatable test design, and escaped defect tracking stay tightly managed.

Hardware in the loop testing cuts development time when teams use it to remove integration risk before full prototypes exist.

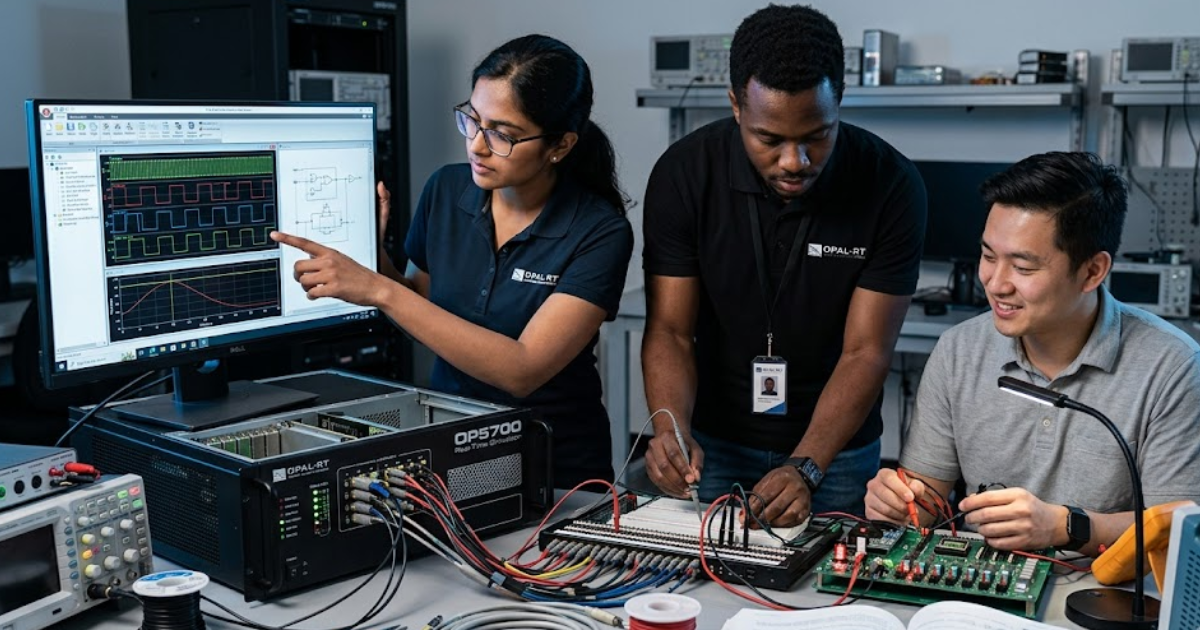

U.S. businesses spent $697.7 billion on research and experimental development in 2022, so every week lost to late validation now carries a visible cost. Hardware-in-the-loop (HIL) testing speeds product development because it moves failure discovery into a controlled lab setup, where software, controls, and I/O behaviour can be checked before a full system build. That shift matters most when prototype parts are scarce, safety limits restrict bench work, or several teams need the same hardware at once. Teams that treat HIL as a targeted risk-reduction method will cut more time than teams that treat it as a broad simulator.


Hardware in the loop testing links control hardware to plant models

Hardware in the loop testing connects the actual controller, relay, or ECU to a simulated plant that runs fast enough to exchange signals at operating speed. You are not testing a loose concept. You’re testing the control hardware against electrical, mechanical, or thermal behaviour before the full machine is available.

A motor inverter team can plug its control board into a HIL rig and run thousands of load steps, voltage sags, and sensor dropouts before a dyno slot opens. The same approach works for a flight controller that must react to actuator lag or a grid relay that must trip on a fault. Each run uses the same hardware logic that will later sit on the bench.
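The loop can be made concrete with a minimal software-only sketch of what a HIL rig does on each step. It solves the plant model, passes the result to the controller, applies the controller's output, and repeats at a fixed time step. On a real rig the controller is your physical board, and the exchange happens through analog and digital I/O. Here a PI function stands in for it. The R-L plant, gains, trip threshold, and the `run` helper are all illustrative assumptions. They are not values or APIs from any HIL product.

```python
"""Software-only stand-in for a HIL closed loop (illustrative values only)."""

DT = 50e-6        # assumed fixed step: 50 microseconds
R, L = 0.5, 2e-3  # assumed winding resistance (ohm) and inductance (H)
V_MAX = 48.0      # assumed DC bus limit on the controller output (V)
I_TRIP = 30.0     # assumed overcurrent trip threshold (A)


def plant_step(i, v, load_v):
    """Advance the R-L current model one forward-Euler step."""
    return i + (v - load_v - R * i) / L * DT


class PiController:
    """Stands in for the controller board that would sit in the real loop."""

    def __init__(self, kp, ki):
        self.kp, self.ki, self.integral = kp, ki, 0.0

    def update(self, ref, meas):
        err = ref - meas
        self.integral += err * DT
        v = self.kp * err + self.ki * self.integral
        return max(-V_MAX, min(V_MAX, v))  # clamp to the bus voltage


def run(steps, ref=10.0, load_step_at=None, dropout=None):
    """Run the loop; return the step of the first overcurrent trip, or None."""
    ctrl = PiController(kp=2.0, ki=500.0)
    i, load_v = 0.0, 0.0
    for k in range(steps):
        if load_step_at is not None and k >= load_step_at:
            load_v = 10.0  # load step modelled as an opposing voltage
        dropped = dropout is not None and dropout[0] <= k < dropout[1]
        meas = 0.0 if dropped else i  # a dropped sensor reads zero
        v = ctrl.update(ref, meas)
        i = plant_step(i, v, load_v)
        if abs(i) > I_TRIP:
            return k
    return None


print(run(4000, load_step_at=2000))     # → None (load step alone, no trip)
print(run(4000, dropout=(2000, 2200)))  # sensor dropout drives current to a trip
```

The detail worth copying is the fixed step. Every exchange happens on the same `DT`, so a scripted load step or dropout lands on the same step in every run.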

That is why hardware-in-the-loop testing speeds development. Faults appear while design options are still cheap, and software can be patched the same week. You won’t wait for a full prototype just to learn that a scaling factor, interrupt, or fault threshold was wrong.

HIL shortens development when physical builds are scarce

Physical prototypes slow teams because each build locks money, lab time, and specialist attention into one configuration. Hardware-in-the-loop testing takes control validation out of that queue. You can validate control logic, I/O mapping, and fault handling while mechanical parts, power stages, or vehicle builds are still moving through design and procurement.

An aerospace team working on a flight computer doesn’t need a complete iron bird to verify sensor substitution, actuator commands, and degraded-mode logic. An energy storage team can exercise battery management code against charge and discharge events before high-voltage packs are cleared for lab use. Those early runs give software and controls staff useful feedback long before the full assembly is ready.

That parallel work matters more than simulation purity. Each early HIL pass gives firmware, controls, and test staff a shared reference, so rework lands sooner and meetings shorten. You’re reducing wait states between groups, which is often where schedule loss hides.

Feedback from HIL finds interface faults before bench integration

Interface faults are the defects HIL finds fastest, and they’re often the ones that stall bench integration. Timing mismatches, bad units, wrong pin polarity, and stale bus messages usually look minor in code review. Once hardware is wired, those small errors can consume days of probing and retesting.

A brake controller might expect wheel speed in one scaling format while the simulator sends another. A power converter board might read a fault line as active low when the plant model is toggling it active high. CAN message order can also break state transitions even when every frame arrives, which makes bench debugging feel random when the issue is actually repeatable.
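A loopback check is one cheap way to catch scaling and polarity mismatches like these. You push known engineering values through the simulator's encoding and the controller's decoding, then flag anything that does not come back within tolerance. The sketch below assumes a simple linear channel (counts times scale plus offset) and a single digital fault line. `SignalSpec`, `loopback_check`, and the example numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SignalSpec:
    """Hypothetical interface contract for one I/O channel."""
    name: str
    scale: float            # engineering units per raw count
    offset: float           # engineering units at raw count 0
    active_low: bool = False


def encode(spec, value):
    """Plant-model side: engineering units -> raw counts."""
    return round((value - spec.offset) / spec.scale)


def decode(spec, raw):
    """Controller side: raw counts -> engineering units."""
    return raw * spec.scale + spec.offset


def fault_asserted(spec, line_level):
    """Interpret a digital fault line (0 or 1) using the declared polarity."""
    return line_level == 0 if spec.active_low else line_level == 1


def loopback_check(sim_spec, ctrl_spec, test_values, tol):
    """Return (sent, received) pairs that do not survive the round trip."""
    bad = []
    for v in test_values:
        got = decode(ctrl_spec, encode(sim_spec, v))
        if abs(got - v) > tol:
            bad.append((v, got))
    return bad


# Simulator sends wheel speed at 0.01 rad/s per count; firmware assumes 0.1.
sim = SignalSpec("wheel_speed", scale=0.01, offset=0.0)
ctrl = SignalSpec("wheel_speed", scale=0.1, offset=0.0)
print(loopback_check(sim, ctrl, [0.0, 50.0, 100.0], tol=0.05))

# Plant drives the fault line high for a fault; firmware reads it as active low.
print(fault_asserted(SignalSpec("fault", 1.0, 0.0, active_low=True), 1))  # → False
```

A zero test value passes even with the wrong scale, so include values across the range rather than only the rest state.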

HIL exposes these faults because the loop is closed and repeatable. You can pause on the exact cycle where behaviour turns wrong, adjust the interface, and rerun the same sequence. Bench work rarely gives you that control, especially when several devices are competing for time on the same rig.
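Repeatability is what lets you stop on the exact cycle. The minimal sketch below assumes that stimuli come from a seeded generator and that each run logs one value per cycle. The same seed reproduces the same stimulus, and a trace comparison returns the first cycle where a new run departs from a reference run. `make_stimulus` and `first_divergence` are illustrative names, not part of any tool.

```python
import random


def make_stimulus(seed, cycles):
    """Reproducible stimulus: the same seed always yields the same sequence."""
    rng = random.Random(seed)
    return [rng.uniform(-1.0, 1.0) for _ in range(cycles)]


def first_divergence(reference, candidate, tol=1e-9):
    """Return the first cycle where two logged traces differ by more than tol.

    A length mismatch counts as a divergence at the end of the shorter trace.
    Returns None when the traces agree.
    """
    for cycle, (a, b) in enumerate(zip(reference, candidate)):
        if abs(a - b) > tol:
            return cycle
    if len(reference) != len(candidate):
        return min(len(reference), len(candidate))
    return None


stimulus = make_stimulus(seed=7, cycles=1000)
assert stimulus == make_stimulus(seed=7, cycles=1000)  # rerun is identical

# Pretend a firmware change corrupts one output sample (hypothetical).
reference = [2.0 * s for s in stimulus]
candidate = list(reference)
candidate[412] += 0.3
print(first_divergence(reference, candidate))  # → 412
```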

Early HIL scope should follow system risk

Early HIL scope should start with the control paths most likely to delay integration or damage hardware. You don’t need full system coverage on day one. You need the loops where timing, protection logic, or custom I/O will create long debug cycles if they fail late.

A focused first campaign usually covers a small set of checks that remove the biggest blockers:

- Control loops tied to unstable plant behaviour

- I/O conversions with custom scaling or polarity

- State transitions linked to protective actions

- Network exchanges with strict timing budgets

- Fault responses that bench staff can’t stage safely

A team building an electric drive will often start with torque control, overcurrent response, and encoder loss before it models cabin loads or driver displays. That sequence works because schedule risk rarely sits in the comfortable parts of the design. The first HIL plan should mirror what can stop a lab program.
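A simple scoring pass can make that ordering explicit. The minimal sketch below assumes each candidate path gets a 1 to 5 likelihood and impact score agreed by the team, plus a bonus for faults the bench cannot stage safely. The scores, the bonus, and the names are illustrative judgment calls, not a standard method.

```python
from dataclasses import dataclass


@dataclass
class ControlPath:
    """Candidate area for the first HIL campaign (hypothetical scoring)."""
    name: str
    likelihood: int             # 1-5: chance of a late-found fault
    impact: int                 # 1-5: schedule or hardware cost if found late
    bench_unsafe: bool = False  # fault cannot be staged safely on the bench


def risk_score(path):
    score = path.likelihood * path.impact
    # Assumed bonus: faults the bench cannot stage safely have nowhere else to go.
    return score + (5 if path.bench_unsafe else 0)


def first_campaign(paths, budget):
    """Return the names of the highest-risk paths that fit the first campaign."""
    ranked = sorted(paths, key=risk_score, reverse=True)
    return [p.name for p in ranked[:budget]]


drive = [
    ControlPath("cabin loads", 2, 1),
    ControlPath("torque control", 4, 5),
    ControlPath("driver display", 1, 1),
    ControlPath("overcurrent response", 4, 5, bench_unsafe=True),
    ControlPath("encoder loss", 3, 4, bench_unsafe=True),
]
print(first_campaign(drive, budget=3))
# → ['overcurrent response', 'torque control', 'encoder loss']
```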

Useful speed gains depend on model fidelity

Speed gains from HIL depend on using the right model fidelity for the question in front of you. A simplified plant will help when you’re checking state flow or message handling. A detailed switching model becomes important when controller timing, protection thresholds, or power quality behaviour are under test.

Electric cars reached about 18% of global car sales in 2023. That rise has pushed more teams into power electronics, battery management, and motor control work where fidelity matters. An inverter control board can look stable against an ideal voltage source and fail once dead time, sensor noise, and bus ripple are represented.
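A rough first-order estimate shows why dead time matters. During each dead interval both switches are off, and the freewheeling diode selected by the current direction sets the leg voltage. The average output therefore loses (or gains) about V_dc · t_dead · f_sw. The sketch checks that approximation against a brute-force average over one PWM period. The 400 V bus, 1 µs dead time, and 20 kHz switching frequency are assumed values, and device drops and switching edges are ignored.

```python
import math


def dead_time_error(v_dc, t_dead, f_sw, current):
    """First-order average voltage error of one half-bridge leg due to dead time.

    Positive current (out of the leg) loses volt-seconds; negative current
    gains them. Device drops, edge times, and zero-crossing effects are ignored.
    """
    return -math.copysign(v_dc * t_dead * f_sw, current)


def leg_average(duty, v_dc, t_dead, f_sw, current, samples=20000):
    """Brute-force average of the leg voltage (0..v_dc) over one PWM period.

    Dead time follows each commanded edge: the upper switch turns on t_dead
    after the rising edge, the lower switch t_dead after the falling edge.
    """
    period = 1.0 / f_sw
    t_on = duty * period
    high_samples = 0
    for n in range(samples):
        t = (n + 0.5) * period / samples
        if t < t_dead or t_on <= t < t_on + t_dead:
            # Both switches off: the conducting diode follows the current sign.
            high = current < 0
        else:
            high = t < t_on
        high_samples += high
    return v_dc * high_samples / samples


ideal = 0.5 * 400.0
for i_phase in (5.0, -5.0):
    err = dead_time_error(400.0, 1e-6, 20e3, i_phase)
    print(i_phase, err, leg_average(0.5, 400.0, 1e-6, 20e3, i_phase) - ideal)
```

An 8 V shift is small against a 200 V average. At low speed, though, the commanded phase voltage may be only tens of volts, and the same 8 V becomes a large fraction of it. That is one reason an ideal-source model can miss low-speed current distortion.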

Good hardware in the loop testing doesn’t chase maximum detail everywhere. It adds detail at the failure boundary. If a result will alter calibration, safety margins, or release timing, the model must reproduce the behaviour that decides pass or fail.

| Model choice | What it tells you about cycle time |
| --- | --- |
| Ideal source with a simple load | This setup clears message flow and basic control logic quickly, but it will miss switching behaviour that often delays power stage bring-up. |
| Switching model with dead time | This level catches controller interactions that bench teams usually find later during first energization. |
| Sensor noise with quantization | This addition shows if filtering and thresholds are robust enough to avoid false trips during early calibration. |
| Communication delay with packet loss | This case reveals timing margins before several devices share the same network on a crowded bench. |
| Thermal limits with saturation effects | This layer matters when protection logic and derating behaviour shape release readiness. |
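The sensor-noise row is easy to try in software. The sketch below assumes a 12-bit ADC spanning 0 to 50 A, Gaussian noise with a 1 A standard deviation, and a 30 A trip threshold, all illustrative. It counts trip events for a healthy 28 A signal with and without an 8-sample debounce. It then checks that the debounced threshold still catches a real step to 35 A.

```python
import random

ADC_BITS = 12
FULL_SCALE = 50.0  # assumed ADC range: 0..50 A
THRESHOLD = 30.0   # assumed overcurrent trip level (A)


def quantize(value):
    """Ideal unipolar ADC: clamp to range, round to the nearest code, convert back."""
    lsb = FULL_SCALE / (2**ADC_BITS - 1)
    code = round(min(max(value, 0.0), FULL_SCALE) / lsb)
    return code * lsb


def sensed(true_values, noise_sd, seed):
    """Add seeded Gaussian noise to the true signal, then quantize it."""
    rng = random.Random(seed)
    return [quantize(v + rng.gauss(0.0, noise_sd)) for v in true_values]


def count_trips(samples, threshold, debounce):
    """Count trip events: one fires when `debounce` consecutive samples exceed threshold."""
    trips, run = 0, 0
    for s in samples:
        run = run + 1 if s > threshold else 0
        if run == debounce:
            trips += 1
    return trips


healthy = sensed([28.0] * 10_000, noise_sd=1.0, seed=1)
faulted = sensed([28.0] * 100 + [35.0] * 100, noise_sd=1.0, seed=2)
print("raw trips on healthy signal:", count_trips(healthy, THRESHOLD, debounce=1))
print("debounced trips on healthy signal:", count_trips(healthy, THRESHOLD, debounce=8))
print("debounced trips on real fault:", count_trips(faulted, THRESHOLD, debounce=8))
```

Debouncing trades detection delay for robustness. At an assumed 20 kHz sample rate, 8 samples add 0.4 ms before the trip fires, and the protection requirement should bound that delay.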

Timing accuracy determines if HIL results stay actionable

Timing accuracy decides if HIL results can guide release work or only serve as rough rehearsal. If the simulator, I/O chain, and controller don’t stay within the loop timing your product expects, pass and fail results will mislead you. Speed comes from trusted timing, not from running more cases.

A protection relay that must trip within a tight window or a motor controller running microsecond PWM updates needs consistent exchange across every cycle. Teams using OPAL-RT in this phase usually verify latency, jitter, and I/O alignment before they trust fault insertion or closed-loop stability runs. That discipline sets a clean baseline for every test that follows.
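Before you trust closed-loop results, it helps to reduce logged timestamps to a few numbers you can compare against a written budget. This sketch assumes you can log the start time of each simulation step. It also assumes that, for one signal, you can log when the simulator wrote it and when it was seen at the other end of the I/O chain. Jitter here means the worst deviation of any step period from nominal, which is one common definition among several. The function names and budgets are hypothetical.

```python
from statistics import mean


def timing_report(step_starts, nominal_period, io_pairs):
    """Summarize loop timing from logged timestamps in seconds.

    step_starts: start time of each simulation step, in order.
    io_pairs: (written, seen) timestamps for one signal crossing the I/O chain.
    """
    periods = [b - a for a, b in zip(step_starts, step_starts[1:])]
    return {
        "mean_period": mean(periods),
        "max_jitter": max(abs(p - nominal_period) for p in periods),
        "max_latency": max(seen - written for written, seen in io_pairs),
    }


def within_budget(report, jitter_budget, latency_budget):
    """Pass only if both worst-case figures fit the written budget."""
    return (report["max_jitter"] <= jitter_budget
            and report["max_latency"] <= latency_budget)


# Synthetic log: 20 µs steps with one late step start, plus two I/O round trips.
starts = [k * 20e-6 for k in range(100)]
starts[50] += 1e-6
report = timing_report(starts, 20e-6, [(0.0, 3e-6), (1e-5, 1.4e-5)])
print(report)
print(within_budget(report, jitter_budget=2e-6, latency_budget=5e-6))
```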

Verifying timing first prevents a common trap. Engineers blame firmware for oscillation that was actually caused by delayed feedback, or they accept a clean response that only exists because the simulated plant was late. You can’t shorten validation if the timing layer is adding noise to every answer.
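A toy loop shows how delay alone can turn a well-behaved response into a growing oscillation. The sketch assumes an ideal discrete integrator plant and a proportional controller with the same gain in both runs. Only the number of samples by which the feedback arrives late changes. With a gain of 0.8, the undelayed loop settles, while two samples of delay push it past its stability limit. The gain, delay, and plant are illustrative choices, not a model of any specific product.

```python
from collections import deque


def simulate(gain, delay_steps, steps=200, ref=1.0):
    """Return the tracking-error trace for an integrator plant under P control
    whose feedback arrives `delay_steps` samples late."""
    x = 0.0
    in_flight = deque([0.0] * delay_steps)  # measurements not yet delivered
    errors = []
    for _ in range(steps):
        in_flight.append(x)
        y = in_flight.popleft()  # the measurement the controller actually sees
        u = gain * (ref - y)     # identical "firmware" in both runs
        x += u                   # ideal integrator plant
        errors.append(ref - x)
    return errors


on_time = simulate(gain=0.8, delay_steps=0)
late = simulate(gain=0.8, delay_steps=2)
print(max(abs(e) for e in on_time[-20:]))  # settles toward zero
print(max(abs(e) for e in late[-20:]))     # oscillation has grown
```

Nothing in the controller changed between the two runs. If the delay had come from the simulator or I/O chain rather than the product, the firmware would have been blamed for it.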

Poor test design can erase cycle time gains

Poor test design will erase HIL speed gains even when the simulator is accurate. Manual setup steps, vague pass criteria, and oversized test suites create their own queue. The goal is a hardware-in-the-loop test that runs the smallest useful set of repeatable checks every time code, calibration, or I/O mapping changes.

A controls team might spend half a day loading files, naming channels, and resetting states before each run. Another team might execute 400 cases with no ranking, then wait for a person to read plots one by one. Those habits move the bottleneck from the bench to the test process.

Useful campaigns treat automation as lab hygiene. Start states are fixed, stimuli are versioned, and pass or fail limits are written before the run begins. You’re looking for fast signal on the few cases that catch regressions, then deeper campaigns when a change touches higher risk logic.
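Those habits map naturally onto a small data structure. Each case pins its start state and stimulus version and carries its pass or fail limits with it, so evaluation needs no one reading plots. A selection rule decides how much to run for a given change. This is a minimal sketch under those assumptions. `HilCase`, `evaluate`, `select`, and the example identifiers are hypothetical, not part of any test framework.

```python
from dataclasses import dataclass


@dataclass
class HilCase:
    """One automated check; field names are illustrative."""
    case_id: str
    start_state: str       # name of a fixed, versioned initial condition
    stimulus_version: str  # e.g. a tag or hash of the stimulus file
    limits: dict           # signal -> (low, high), written before the run
    smoke: bool = False    # part of the fast regression subset


def evaluate(case, results):
    """Return the signals that broke their limits; an empty list means pass.

    A signal missing from the results counts as a failure.
    """
    failed = []
    for signal, (low, high) in case.limits.items():
        value = results.get(signal)
        if value is None or not low <= value <= high:
            failed.append(signal)
    return failed


def select(cases, touches_high_risk):
    """Fast smoke subset for routine changes; full campaign for high-risk ones."""
    return list(cases) if touches_high_risk else [c for c in cases if c.smoke]


cases = [
    HilCase("oc_trip", "idle_v3", "stim-1a2b", {"trip_time_ms": (0.0, 2.0)}, smoke=True),
    HilCase("encoder_loss", "run_v3", "stim-9f0e", {"torque_error_pct": (-5.0, 5.0)}),
]
print([c.case_id for c in select(cases, touches_high_risk=False)])  # → ['oc_trip']
print(evaluate(cases[0], {"trip_time_ms": 2.6}))                     # → ['trip_time_ms']
```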


Defect escape reduction shows if HIL is paying off

HIL pays off when escaped defects drop and retest loops shrink across the program. A shorter cycle matters, but the stronger sign is that first bench integration becomes calmer and release reviews stop circling the same unresolved faults. Good HIL work turns surprise debugging into scheduled verification.

A team that tracks escaped interface bugs, rerun counts, and time to fix will see the difference quickly. If overcurrent trips, bus timeouts, or sensor range faults are already closed before bench week, technicians spend their time confirming behaviour instead of chasing wiring myths. OPAL-RT fits this stage when teams need repeatable execution tied to those lab metrics, not just another simulation screen.
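Those metrics need little more than a consistent defect log. The minimal sketch below assumes each record notes where the defect was found, how many reruns it took to confirm the fix, and how many days the fix took. Treating anything found after HIL as escaped is an assumption you may want to refine per program. `Defect` and `program_metrics` are hypothetical names.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Defect:
    """Hypothetical defect-log entry."""
    found_in: str       # "hil", "bench", or "field"
    reruns: int         # test reruns needed before the fix was confirmed
    days_to_fix: float


def program_metrics(defects):
    """Escape rate, mean reruns, and median days to fix for one program."""
    if not defects:
        return {"escape_rate": 0.0, "mean_reruns": 0.0, "median_days_to_fix": 0.0}
    escaped = sum(1 for d in defects if d.found_in != "hil")
    return {
        "escape_rate": escaped / len(defects),
        "mean_reruns": sum(d.reruns for d in defects) / len(defects),
        "median_days_to_fix": median(d.days_to_fix for d in defects),
    }


log = [
    Defect("hil", 1, 0.5),
    Defect("hil", 2, 1.0),
    Defect("hil", 1, 0.5),
    Defect("bench", 4, 3.0),
]
print(program_metrics(log))
```

Comparing these numbers release over release gives a better read on whether HIL is paying off than any single run time.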

The useful judgment is simple. Hardware in the loop testing speeds product development only when scope, fidelity, timing, and test design stay disciplined. You’re not buying time with a tool alone. You’re earning it each time the next prototype arrives with fewer unknowns and fewer reasons to stop the lab.
